This chapter describes SVG's declarative filter effects feature set, which when combined with the 2D power of SVG can describe much of the common artwork on the Web in such a way that client-side generation and alteration can be performed easily. In addition, the ability to apply filter effects to SVG graphics elements and container elements helps to maintain the semantic structure of the document, instead of resorting to images which aside from generally being a fixed resolution tend to obscure the original semantics of the elements they replace. This is especially true for effects applied to text.

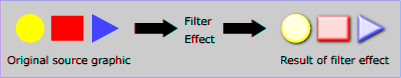

A filter effect consists of a series of graphics operations that are applied to a given source graphic to produce a modified graphical result. The result of the filter effect is rendered to the target device instead of the original source graphic. The following illustrates the process:


Filter effects are defined by 'filter' elements. To apply a filter effect to a graphics element or a container element, you set the value of the 'filter' property on the given element such that it references the filter effect.

Each 'filter' element contains a set of filter primitives as its children. Each filter primitive performs a single fundamental graphical operation (e.g., a blur or a lighting effect) on one or more inputs, producing a graphical result. Because most of the filter primitives represent some form of image processing, in most cases the output from a filter primitive is a single RGBA image.

The original source graphic or the result from a filter primitive can be used as input into one or more other filter primitives. A common application is to use the source graphic multiple times. For example, a simple filter could replace one graphic with two by adding a black copy of the original source graphic, offset to create a drop shadow. In effect, there are now two layers of graphics, both with the same original source graphic.

When applied to container elements such as 'g', the 'filter' property applies to the contents of the group as a whole. The group's children do not render to the screen directly; instead, the graphics commands necessary to render the children are stored temporarily. Typically, the graphics commands are executed as part of the processing of the referenced 'filter' element via use of the keywords SourceGraphic or SourceAlpha. Filter effects can be applied to container elements with no content (e.g., an empty 'g' element), in which case the SourceGraphic or SourceAlpha consists of a transparent black rectangle that is the size of the filter effects region.

Sometimes filter primitives result in undefined pixels. For example, filter primitive 'feOffset' can shift an image down and to the right, leaving undefined pixels at the top and left. In these cases, the undefined pixels are set to transparent black.
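The behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any user agent implements 'feOffset': the image is a row-major grid of RGBA tuples, offsets are whole pixels, and the names `offset_image` and `TRANSPARENT_BLACK` are hypothetical.

```python
# Hypothetical helper: shift an image and fill uncovered pixels with transparent black.
TRANSPARENT_BLACK = (0, 0, 0, 0)


def offset_image(pixels, dx, dy):
    """Shift a row-major grid of RGBA tuples dx pixels right and dy pixels down."""
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    # Start from a fully transparent black result, so any pixel that receives
    # no source data keeps that value.
    result = [[TRANSPARENT_BLACK] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            sx, sy = x - dx, y - dy  # source pixel that lands on (x, y)
            if 0 <= sx < width and 0 <= sy < height:
                result[y][x] = pixels[sy][sx]
    return result
```

Shifting down and to the right leaves the top row and the left column at transparent black, as the paragraph above describes.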

The following shows an example of a filter effect.

Example filters01 - introducing filter effects.

The filter effect used in the example above is repeated here with a reference number in a comment before each of the six filter primitives:

```xml
<filter id="MyFilter" filterUnits="userSpaceOnUse" x="0" y="0" width="200" height="120">
  <desc>Produces a 3D lighting effect.</desc>
  <!-- 1 -->
  <feGaussianBlur in="SourceAlpha" stdDeviation="4" result="blur"/>
  <!-- 2 -->
  <feOffset in="blur" dx="4" dy="4" result="offsetBlur"/>
  <!-- 3 -->
  <feSpecularLighting in="blur" surfaceScale="5" specularConstant=".75"
                      specularExponent="20" lighting-color="#bbbbbb"
                      result="specOut">
    <fePointLight x="-5000" y="-10000" z="20000"/>
  </feSpecularLighting>
  <!-- 4 -->
  <feComposite in="specOut" in2="SourceAlpha" operator="in" result="specOut"/>
  <!-- 5 -->
  <feComposite in="SourceGraphic" in2="specOut" operator="arithmetic"
               k1="0" k2="1" k3="1" k4="0" result="litPaint"/>
  <!-- 6 -->
  <feMerge>
    <feMergeNode in="offsetBlur"/>
    <feMergeNode in="litPaint"/>
  </feMerge>
</filter>
```

The following pictures show the intermediate image results from each of the six filter elements:


The description of the 'filter' element follows:

Attribute definitions:

Properties inherit into the 'filter' element from its ancestors; properties do not inherit from the element referencing the 'filter' element.

'filter' elements are never rendered directly; their only usage is as something that can be referenced using the 'filter' property. The 'display' property does not apply to the 'filter' element; thus, 'filter' elements are not directly rendered even if the 'display' property is set to a value other than none, and 'filter' elements are available for referencing even when the 'display' property on the 'filter' element or any of its ancestors is set to none.

The description of the 'filter' property is as follows:

| 'filter' | |
| --- | --- |
| Value: | <funciri> \| none \| inherit |
| Initial: | none |
| Applies to: | container elements (except 'mask') and graphics elements |
| Inherited: | no |
| Percentages: | N/A |
| Media: | visual |
| Animatable: | yes |

A 'filter' element can define a region on the canvas to which a given filter effect applies and can provide a resolution for any intermediate continuous tone images used to process any raster-based filter primitives. The 'filter' element has the following attributes which work together to define the filter effects region:

Attribute 'filterUnits' defines the coordinate system for attributes 'x', 'y', 'width' and 'height'.

If filterUnits="userSpaceOnUse", 'x', 'y', 'width' and 'height' represent values in the current user coordinate system in place at the time when the 'filter' element is referenced (i.e., the user coordinate system for the element referencing the 'filter' element via a 'filter' property).

If filterUnits="objectBoundingBox", then 'x', 'y', 'width' and 'height' represent fractions or percentages of the bounding box on the referencing element (see Object bounding box units).

If attribute 'filterUnits' is not specified, then the effect is as if a value of 'objectBoundingBox' were specified.

Animatable: yes.

Attributes 'x', 'y', 'width' and 'height' define a rectangular region on the canvas to which this filter applies.

The amount of memory and processing time required to apply the filter are related to the size of this rectangle and the 'filterRes' attribute of the filter.

The coordinate system for these attributes depends on the value for attribute 'filterUnits'.

Negative values for 'width' or 'height' are an error (see Error processing). Zero values disable rendering of the element which referenced the filter.

The bounds of this rectangle act as a hard clipping region for each filter primitive included with a given 'filter' element; thus, if the effect of a given filter primitive would extend beyond the bounds of the rectangle (this sometimes happens when using a 'feGaussianBlur' filter primitive with a very large 'stdDeviation'), parts of the effect will get clipped.

If 'x' or 'y' is not specified, the effect is as if a value of -10% were specified.

If 'width' or 'height' is not specified, the effect is as if a value of 120% were specified.

Animatable: yes.

Attribute 'filterRes' takes the form x-pixels [y-pixels], and indicates the width and height of the intermediate images in pixels. If not provided, then the user agent will use reasonable values to produce a high-quality result on the output device.

Care should be taken when assigning a non-default value to this attribute. Too small a value may result in unwanted pixelation in the result. Too large a value may result in slow processing and large memory usage.

Negative values are an error (see Error processing). Zero values disable rendering of the element which referenced the filter.

Non-integer values are truncated, i.e., rounded to the closest integer value towards zero.

Animatable: yes.

Note that both of the two possible values for 'filterUnits' (i.e., 'objectBoundingBox' and 'userSpaceOnUse') result in a filter region whose coordinate system has its X-axis and Y-axis each parallel to the X-axis and Y-axis, respectively, of the user coordinate system for the element to which the filter will be applied.

Sometimes implementers can achieve faster performance when the filter region can be mapped directly to device pixels; thus, for best performance on display devices, it is suggested that authors define their region such that the SVG user agent can align the filter region pixel-for-pixel with the background. In particular, for best filter effects performance, avoid rotating or skewing the user coordinate system. Explicit values for attribute 'filterRes' can either help or harm performance. If 'filterRes' is smaller than the automatic (i.e., default) filter resolution, then the filter effect might have faster performance (usually at the expense of quality). If 'filterRes' is larger than the automatic (i.e., default) filter resolution, then filter effects performance will usually be slower.

It is often necessary to provide padding space because the filter effect might impact bits slightly outside the tight-fitting bounding box on a given object. For these purposes, it is possible to provide negative percentage values for 'x' and 'y', and percentage values greater than 100% for 'width' and 'height'. This, for example, is why the defaults for the filter effects region are x="-10%" y="-10%" width="120%" height="120%".
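As a rough illustration of how the default padding works out, the following Python sketch resolves a filter region given in bounding-box fractions into user-space coordinates. The function name `filter_region` and the tuple layout of the bounding box are assumptions made for this example only.

```python
def filter_region(bbox, x=-0.10, y=-0.10, width=1.20, height=1.20):
    """Resolve a filter region expressed as bounding-box fractions.

    bbox is (bx, by, bw, bh) in user space. The default fractions are the
    documented defaults of -10%, -10%, 120% and 120%.
    """
    if width < 0 or height < 0:
        # Negative 'width' or 'height' values are an error.
        raise ValueError("negative width or height")
    bx, by, bw, bh = bbox
    return (bx + x * bw, by + y * bh, width * bw, height * bh)
```

For a bounding box of (100, 50, 200, 100), the defaults give a region starting 20 units to the left of and 10 units above the box, and measuring 240 by 120 units, which leaves a 10% margin on every side.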

Two possible pseudo input images for filter effects are BackgroundImage and BackgroundAlpha, which each represent an image snapshot of the canvas under the filter region at the time that the 'filter' element is invoked. BackgroundImage represents both the color values and alpha channel of the canvas (i.e., RGBA pixel values), whereas BackgroundAlpha represents only the alpha channel.

Implementations of SVG user agents often will need to maintain supplemental background image buffers in order to support the BackgroundImage and BackgroundAlpha pseudo input images. Sometimes, the background image buffers will contain an in-memory copy of the accumulated painting operations on the current canvas.

Because in-memory image buffers can take up significant system resources, SVG content must explicitly indicate to the SVG user agent that the document needs access to the background image before BackgroundImage and BackgroundAlpha pseudo input images can be used. The property which enables access to the background image is 'enable-background', defined below:

| 'enable-background' | |
| --- | --- |
| Value: | accumulate \| new [ <x> <y> <width> <height> ] \| inherit |
| Initial: | accumulate |
| Applies to: | container elements |
| Inherited: | no |
| Percentages: | N/A |
| Media: | visual |
| Animatable: | no |

'enable-background' is only applicable to container elements and specifies how the SVG user agent manages the accumulation of the background image.

A value of new indicates two things:

The meaning of enable-background: accumulate (the initial/default value) depends on context:

If a filter effect specifies either the BackgroundImage or the BackgroundAlpha pseudo input images and no ancestor container element has a property value of enable-background: new, then the background image request is technically in error. Processing will proceed without interruption (i.e., no error message) and a transparent black image shall be provided in response to the request.

The optional <x>,<y>,<width>,<height> parameters on the new value are <number> values that indicate the subregion of the container element's user space where access to the background image is allowed to happen. These parameters enable the SVG user agent potentially to allocate smaller temporary image buffers than the default values. Thus, the values <x>,<y>,<width>,<height> act as a clipping rectangle on the background image canvas. Negative values for <width> or <height> are an error (see Error processing). If more than zero but less than four of the values <x>,<y>,<width> and <height> are specified or if zero values are specified for <width> or <height>, BackgroundImage and BackgroundAlpha are processed as if background image processing were not enabled.
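The rules in the paragraph above can be sketched as a small Python parser. This is a simplified illustration, not a conforming implementation: the name `parse_enable_background` and the returned tuples are hypothetical, 'inherit' is not handled, and rejecting more than four numbers is a choice made here because the text above does not cover that case.

```python
def parse_enable_background(value):
    """Classify an 'enable-background' value (simplified sketch).

    Returns ("accumulate", None), ("new", None), ("new", (x, y, w, h)),
    or ("disabled", None) when background processing is treated as not enabled.
    """
    tokens = value.split()
    if tokens == ["accumulate"]:
        return ("accumulate", None)
    if not tokens or tokens[0] != "new":
        raise ValueError("unsupported enable-background value: %r" % value)
    numbers = [float(t) for t in tokens[1:]]
    if not numbers:
        return ("new", None)
    if len(numbers) > 4:
        # Assumption of this sketch: more than four numbers is rejected.
        raise ValueError("too many values")
    if len(numbers) < 4:
        # More than zero but fewer than four values: processing is not enabled.
        return ("disabled", None)
    x, y, width, height = numbers
    if width < 0 or height < 0:
        raise ValueError("negative width or height is an error")
    if width == 0 or height == 0:
        return ("disabled", None)
    return ("new", (x, y, width, height))
```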

Assume you have an element E in the document and that E has a series of ancestors A1 (its immediate parent), A2, etc. (Note: A0 is E.) Each ancestor Ai will have a corresponding temporary background image offscreen buffer BUFi. The contents of the background image available to a 'filter' element referenced by E is defined as follows:

Example enable-background-01 illustrates the rules for background image processing.

The example above contains five parts, described as follows:

This section describes the various filter primitives that can be assembled to achieve a particular filter effect.

Unless otherwise stated, all image filters operate on premultiplied RGBA samples. Filters which work more naturally on non-premultiplied data (feColorMatrix and feComponentTransfer) will temporarily undo and redo premultiplication as specified. All raster effect filtering operations take 1 to N input RGBA images, additional attributes as parameters, and produce a single output RGBA image.

The RGBA result from each filter primitive will be clamped into the allowable ranges for colors and opacity values. Thus, for example, the result from a given filter primitive will have any negative color values or opacity values adjusted up to color/opacity of zero.
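The following Python sketch illustrates the three operations mentioned above: premultiplying, undoing premultiplication, and clamping. It assumes channel values are floats in the range 0 to 1; the function names are hypothetical, and returning transparent black for a zero alpha when undoing premultiplication is a convention chosen for this example.

```python
def clamp01(value):
    """Clamp a color or opacity value into the range [0, 1]."""
    return min(1.0, max(0.0, value))


def premultiply(r, g, b, a):
    """Convert non-premultiplied RGBA into premultiplied RGBA."""
    return (r * a, g * a, b * a, a)


def unpremultiply(r, g, b, a):
    """Convert premultiplied RGBA back into non-premultiplied RGBA."""
    if a == 0:
        # Assumption of this sketch: a fully transparent pixel carries no color.
        return (0.0, 0.0, 0.0, 0.0)
    return (r / a, g / a, b / a, a)


def clamp_result(rgba):
    """Clamp every channel of a filter primitive result."""
    return tuple(clamp01(channel) for channel in rgba)
```

For example, a negative intermediate value such as -0.2 is adjusted up to 0, and a value such as 1.3 is adjusted down to 1.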

The color space in which a particular filter primitive performs its operations is determined by the value of property 'color-interpolation-filters' on the given filter primitive. A different property, 'color-interpolation' determines the color space for other color operations. Because these two properties have different initial values ('color-interpolation-filters' has an initial value of linearRGB whereas 'color-interpolation' has an initial value of sRGB), in some cases to achieve certain results (e.g., when coordinating gradient interpolation with a filtering operation) it will be necessary to explicitly set 'color-interpolation' to linearRGB or 'color-interpolation-filters' to sRGB on particular elements. Note that the examples below do not explicitly set either 'color-interpolation' or 'color-interpolation-filters', so the initial values for these properties apply to the examples.

With the exception of the 'in' attribute, all of the following attributes are available on all filter primitive elements:

Attribute definitions:

The 'in' attribute is available on all filter primitive elements that require an input.

Animatable: yes.

All filter primitives have attributes 'x', 'y', 'width' and 'height' which identify a subregion which restricts calculation and rendering of the given filter primitive. These attributes are defined according to the same rules as other filter primitives' coordinate and length attributes and thus represent values in the coordinate system established by attribute 'primitiveUnits' on the 'filter' element.

'x', 'y', 'width' and 'height' default to the union (i.e., tightest fitting bounding box) of the subregions defined for all referenced nodes. If there are no referenced nodes (e.g., for 'feImage' or 'feTurbulence'), or one or more of the referenced nodes is a standard input (one of SourceGraphic, SourceAlpha, BackgroundImage, BackgroundAlpha, FillPaint or StrokePaint), or for 'feTile' (which is special because its principal function is to replicate the referenced node in X and Y and thereby produce a usually larger result), the default subregion is 0%,0%,100%,100%, where as a special case the percentages are relative to the dimensions of the filter region, thus making the default filter primitive subregion equal to the filter region.

'x', 'y', 'width' and 'height' act as a hard clipping rectangle on both the filter primitive's input image(s) and the filter primitive result.

All intermediate offscreens are defined to not exceed the intersection of 'x', 'y', 'width' and 'height' with the filter region. The filter region and any of the 'x', 'y', 'width' and 'height' subregions are to be set up such that all offscreens are made big enough to accommodate any pixels which even partly intersect with either the filter region or the x,y,width,height subregions.

'feTile' references a previous filter primitive and then stitches the tiles together based on the 'x', 'y', 'width' and 'height' values of the referenced filter primitive in order to fill its own filter primitive subregion.

Example primitive-subregion-01 demonstrates the effect of specifying a filter primitive subregion:

In the example above there are three rects that each have a cross and a circle in them. The circle element in each one has a different filter applied, but with the same filter primitive subregion. The filter output should be limited to the filter primitive subregion, so you should never see the circles themselves, just the rects that make up the filter primitive subregion.

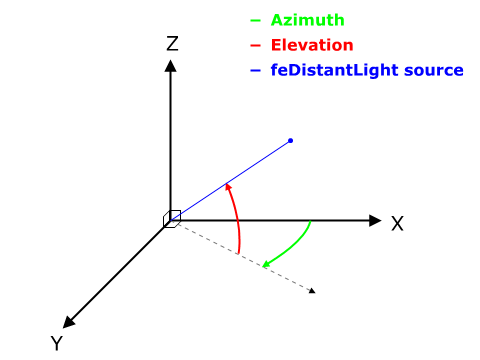

The following sections define the elements that define a light source, 'feDistantLight', 'fePointLight' and 'feSpotLight', and property 'lighting-color', which defines the color of the light.

Attribute definitions:

The following diagram illustrates the angles which 'azimuth' and 'elevation' represent in an XYZ coordinate system.

Attribute definitions:

Attribute definitions:

The 'lighting-color' property defines the color of the light source for filter primitives 'feDiffuseLighting' and 'feSpecularLighting'.

| 'lighting-color' | |
| --- | --- |
| Value: | currentColor \| <color> [<icccolor>] \| inherit |
| Initial: | white |
| Applies to: | 'feDiffuseLighting' and 'feSpecularLighting' elements |
| Inherited: | no |
| Percentages: | N/A |
| Media: | visual |
| Animatable: | yes |

This filter composites two objects together using commonly used imaging software blending modes. It performs a pixel-wise combination of two input images.

Attribute definitions:

For all feBlend modes, the result opacity is computed as follows:

qr = 1 - (1-qa)*(1-qb)

For the compositing formulas below, the following definitions apply:

cr = Result color (RGB) - premultiplied

qa = Opacity value at a given pixel for image A

qb = Opacity value at a given pixel for image B

ca = Color (RGB) at a given pixel for image A - premultiplied

cb = Color (RGB) at a given pixel for image B - premultiplied

The following table provides the list of available image blending modes:

| Image Blending Mode | Formula for computing result color |
| --- | --- |
| normal | `cr = (1 - qa) * cb + ca` |
| multiply | `cr = (1-qa)*cb + (1-qb)*ca + ca*cb` |
| screen | `cr = cb + ca - ca * cb` |
| darken | `cr = Min ((1 - qa) * cb + ca, (1 - qb) * ca + cb)` |
| lighten | `cr = Max ((1 - qa) * cb + ca, (1 - qb) * ca + cb)` |

'normal' blend mode is equivalent to operator="over" on the 'feComposite' filter primitive, matches the blending method used by 'feMerge' and matches the simple alpha compositing technique used in SVG for all compositing outside of filter effects.
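The five formulas and the opacity formula can be transcribed directly into Python. The sketch below works on one premultiplied color channel at a time, with values in the range 0 to 1; the function name `blend` is hypothetical, and the argument names follow the definitions of ca, qa, cb and qb given earlier.

```python
def blend(mode, ca, qa, cb, qb):
    """Blend one premultiplied channel of image A with image B.

    Returns (cr, qr): the premultiplied result color and the result opacity.
    """
    if mode == "normal":
        cr = (1 - qa) * cb + ca
    elif mode == "multiply":
        cr = (1 - qa) * cb + (1 - qb) * ca + ca * cb
    elif mode == "screen":
        cr = cb + ca - ca * cb
    elif mode == "darken":
        cr = min((1 - qa) * cb + ca, (1 - qb) * ca + cb)
    elif mode == "lighten":
        cr = max((1 - qa) * cb + ca, (1 - qb) * ca + cb)
    else:
        raise ValueError("unknown blend mode: %r" % mode)
    # The result opacity is the same for every mode.
    qr = 1 - (1 - qa) * (1 - qb)
    return cr, qr
```

With two opaque inputs (qa = qb = 1), 'normal' returns ca unchanged, 'multiply' reduces to ca*cb, and 'darken' and 'lighten' reduce to the minimum and maximum of the two colors.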

Example feBlend shows examples of the five blend modes.

This filter applies a matrix transformation:

$$
\begin{pmatrix} R' \\ G' \\ B' \\ A' \\ 1 \end{pmatrix}
=
\begin{pmatrix}
a_{00} & a_{01} & a_{02} & a_{03} & a_{04} \\
a_{10} & a_{11} & a_{12} & a_{13} & a_{14} \\
a_{20} & a_{21} & a_{22} & a_{23} & a_{24} \\
a_{30} & a_{31} & a_{32} & a_{33} & a_{34} \\
0 & 0 & 0 & 0 & 1
\end{pmatrix}
\cdot
\begin{pmatrix} R \\ G \\ B \\ A \\ 1 \end{pmatrix}
$$

on the RGBA color and alpha values of every pixel on the input graphics to produce a result with a new set of RGBA color and alpha values.

The calculations are performed on non-premultiplied color values. If the input graphics consists of premultiplied color values, those values are automatically converted into non-premultiplied color values for this operation.

These matrices often perform an identity mapping in the alpha channel. If that is the case, an implementation can avoid the costly undoing and redoing of the premultiplication for all pixels with A = 1.

Attribute definitions:

type="matrix" values="1 0 0 0 0 0 1 0 0 0 0 0 1 0 0 0 0 0 1 0"

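For type="saturate", where s is the saturation value, the operation is equivalent to the following matrix operation:

$$
\begin{pmatrix} R' \\ G' \\ B' \\ A' \\ 1 \end{pmatrix}
=
\begin{pmatrix}
0.213+0.787s & 0.715-0.715s & 0.072-0.072s & 0 & 0 \\
0.213-0.213s & 0.715+0.285s & 0.072-0.072s & 0 & 0 \\
0.213-0.213s & 0.715-0.715s & 0.072+0.928s & 0 & 0 \\
0 & 0 & 0 & 1 & 0 \\
0 & 0 & 0 & 0 & 1
\end{pmatrix}
\cdot
\begin{pmatrix} R \\ G \\ B \\ A \\ 1 \end{pmatrix}
$$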

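For type="hueRotate", the operation is equivalent to the following matrix operation:

$$
\begin{pmatrix} R' \\ G' \\ B' \\ A' \\ 1 \end{pmatrix}
=
\begin{pmatrix}
a_{00} & a_{01} & a_{02} & 0 & 0 \\
a_{10} & a_{11} & a_{12} & 0 & 0 \\
a_{20} & a_{21} & a_{22} & 0 & 0 \\
0 & 0 & 0 & 1 & 0 \\
0 & 0 & 0 & 0 & 1
\end{pmatrix}
\cdot
\begin{pmatrix} R \\ G \\ B \\ A \\ 1 \end{pmatrix}
$$

where the terms a00, a01, etc. are calculated as follows:

$$
\begin{pmatrix}
a_{00} & a_{01} & a_{02} \\
a_{10} & a_{11} & a_{12} \\
a_{20} & a_{21} & a_{22}
\end{pmatrix}
=
\begin{pmatrix}
+0.213 & +0.715 & +0.072 \\
+0.213 & +0.715 & +0.072 \\
+0.213 & +0.715 & +0.072
\end{pmatrix}
+ \cos(\text{hueRotate value}) \cdot
\begin{pmatrix}
+0.787 & -0.715 & -0.072 \\
-0.213 & +0.285 & -0.072 \\
-0.213 & -0.715 & +0.928
\end{pmatrix}
+ \sin(\text{hueRotate value}) \cdot
\begin{pmatrix}
-0.213 & -0.715 & +0.928 \\
+0.143 & +0.140 & -0.283 \\
-0.787 & +0.715 & +0.072
\end{pmatrix}
$$

Thus, the upper left term of the hue matrix turns out to be:

$$
0.213 + \cos(\text{hueRotate value}) \cdot 0.787 - \sin(\text{hueRotate value}) \cdot 0.213
$$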

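For type="luminanceToAlpha", the operation is equivalent to the following matrix operation:

$$
\begin{pmatrix} R' \\ G' \\ B' \\ A' \\ 1 \end{pmatrix}
=
\begin{pmatrix}
0 & 0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 & 0 \\
0 & 0 & 0 & 0 & 0 \\
0.2125 & 0.7154 & 0.0721 & 0 & 0 \\
0 & 0 & 0 & 0 & 1
\end{pmatrix}
\cdot
\begin{pmatrix} R \\ G \\ B \\ A \\ 1 \end{pmatrix}
$$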
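The matrices above can be built and checked numerically. The Python sketch below constructs the 3x3 color part of the saturation and hue rotation matrices and applies the luminance-to-alpha mapping to a non-premultiplied pixel. The function names are hypothetical, and the sketch assumes the hue rotation value is given in degrees.

```python
import math

# Luminance weights that appear in the saturation and hue rotation matrices.
LUMA = (0.213, 0.715, 0.072)


def saturate_matrix(s):
    """3x3 color part of the saturation matrix for a saturation value s."""
    return [
        [0.213 + 0.787 * s, 0.715 - 0.715 * s, 0.072 - 0.072 * s],
        [0.213 - 0.213 * s, 0.715 + 0.285 * s, 0.072 - 0.072 * s],
        [0.213 - 0.213 * s, 0.715 - 0.715 * s, 0.072 + 0.928 * s],
    ]


def hue_rotate_matrix(degrees):
    """3x3 color part of the hue rotation matrix (angle assumed in degrees)."""
    c = math.cos(math.radians(degrees))
    s = math.sin(math.radians(degrees))
    cos_part = [[0.787, -0.715, -0.072],
                [-0.213, 0.285, -0.072],
                [-0.213, -0.715, 0.928]]
    sin_part = [[-0.213, -0.715, 0.928],
                [0.143, 0.140, -0.283],
                [-0.787, 0.715, 0.072]]
    return [[LUMA[col] + c * cos_part[row][col] + s * sin_part[row][col]
             for col in range(3)] for row in range(3)]


def apply_rgb_matrix(matrix, rgb):
    """Multiply a 3x3 matrix by a non-premultiplied (R, G, B) triple."""
    return tuple(sum(matrix[row][col] * rgb[col] for col in range(3))
                 for row in range(3))


def luminance_to_alpha(rgba):
    """Map a non-premultiplied pixel to zero color and luminance-derived alpha."""
    r, g, b, _ = rgba
    return (0.0, 0.0, 0.0, 0.2125 * r + 0.7154 * g + 0.0721 * b)
```

A saturation value of 1 and a hue rotation of 0 both produce the identity matrix, which is a quick sanity check on the coefficients.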

Example feColorMatrix shows examples of the four types of feColorMatrix operations.

This filter primitive performs component-wise remapping of data as follows:

R' = feFuncR( R )

G' = feFuncG( G )

B' = feFuncB( B )

A' = feFuncA( A )

for every pixel. It allows operations like brightness adjustment, contrast adjustment, color balance or thresholding.

The calculations are performed on non-premultiplied color values. If the input graphics consists of premultiplied color values, those values are automatically converted into non-premultiplied color values for this operation. (Note that the undoing and redoing of the premultiplication can be avoided if feFuncA is the identity transform and all alpha values on the source graphic are set to 1.)

The child elements of a 'feComponentTransfer' element specify the transfer functions for the four channels:

The following rules apply to the processing of the 'feComponentTransfer' element:

The attributes below are the transfer function element attributes, which apply to sub-elements 'feFuncR', 'feFuncG', 'feFuncB' and 'feFuncA' that define the transfer functions.

Attribute definitions:

Indicates the type of component transfer function. The type of function determines the applicability of the other attributes.

In the following, C is the initial component (e.g., 'feFuncR'), C' is the remapped component; both in the closed interval [0,1].

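For identity:

$$
C' = C
$$

For table, the function is defined by linear interpolation between the $n+1$ values $v_0, \ldots, v_n$. For a value $C < 1$ find $k$ such that:

$$
\frac{k}{n} \le C < \frac{k+1}{n}
$$

The result $C'$ is given by:

$$
C' = v_k + \left(C - \frac{k}{n}\right) \cdot n \cdot (v_{k+1} - v_k)
$$

If $C = 1$ then:

$$
C' = v_n
$$

For discrete, the function is a step function over the $n$ values $v_0, \ldots, v_{n-1}$. For a value $C < 1$ find $k$ such that:

$$
\frac{k}{n} \le C < \frac{k+1}{n}
$$

The result $C'$ is given by:

$$
C' = v_k
$$

If $C = 1$ then:

$$
C' = v_{n-1}
$$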
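The interpolating formula and the step formula above translate into short Python functions. This is a sketch under assumptions: the input C is already in the closed interval [0,1], the list of v values is passed in as `values`, and the function names are hypothetical. The interpolating form needs at least two values, and the step form at least one.

```python
def transfer_interpolated(c, values):
    """Piecewise-linear transfer function over the n+1 values v0..vn."""
    n = len(values) - 1
    if c >= 1.0:
        return values[n]
    k = min(int(c * n), n - 1)  # k/n <= C < (k+1)/n
    return values[k] + (c - k / n) * n * (values[k + 1] - values[k])


def transfer_stepped(c, values):
    """Step transfer function over the n values v0..v(n-1)."""
    n = len(values)
    if c >= 1.0:
        return values[n - 1]
    k = min(int(c * n), n - 1)  # k/n <= C < (k+1)/n
    return values[k]
```

For instance, with the values 0, 1, 0 the interpolating form maps C = 0.25 to 0.5, because 0.25 lies halfway through the first of the two segments.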

Example feComponentTransfer shows examples of the four types of feComponentTransfer operations.

This filter performs the combination of the two input images pixel-wise in image space using one of the Porter-Duff [PORTERDUFF] compositing operations: over, in, atop, out, xor [SVG-COMPOSITING]. Additionally, a component-wise arithmetic operation (with the result clamped between [0..1]) can be applied.

The arithmetic operation is useful for combining the output from the 'feDiffuseLighting' and 'feSpecularLighting' filters with texture data. It is also useful for implementing dissolve. If the arithmetic operation is chosen, each result pixel is computed using the following formula:

result = k1*i1*i2 + k2*i1 + k3*i2 + k4

where:

i1 and i2 indicate the corresponding pixel channel values of the input image, which map to in and in2 respectively

k1, k2, k3 and k4 indicate the values of the attributes with the same name
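A per-channel transcription of the arithmetic formula in Python, including the clamping to [0..1] mentioned earlier, might look like the following. The function name `composite_arithmetic` is hypothetical, and channel values are assumed to be floats between 0 and 1.

```python
def composite_arithmetic(i1, i2, k1, k2, k3, k4):
    """Combine one channel value from 'in' (i1) and 'in2' (i2), then clamp."""
    result = k1 * i1 * i2 + k2 * i1 + k3 * i2 + k4
    return min(1.0, max(0.0, result))
```

With k1=0, k2=1, k3=1, k4=0 the two inputs are simply added, which is how a specular highlight can be added on top of the source graphic. With k1=1 and the other coefficients at 0 the inputs are multiplied, and with k2=t, k3=1-t the operation behaves as a dissolve between the two inputs.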

For this filter primitive, the extent of the resulting image might grow as described in the section that describes the filter primitive subregion.

Attribute definitions:

Example feComposite shows examples of the six types of feComposite operations. It also shows two different techniques for using the BackgroundImage as part of the compositing operation.

The first two rows render bluish triangles into the background. A filter is applied which composites reddish triangles into the bluish triangles using one of the compositing operations. The result from compositing is drawn onto an opaque white temporary surface, and then that result is written to the canvas. (The opaque white temporary surface obliterates the original bluish triangle.)

The last two rows apply the same compositing operations of reddish triangles into bluish triangles. However, the compositing result is directly blended into the canvas (the opaque white temporary surface technique is not used). In some cases, the results are different than when a temporary opaque white surface is used. The original bluish triangle from the background shines through wherever the compositing operation results in a completely transparent pixel. In other cases, the result from compositing is blended into the bluish triangle, resulting in a different final color value.

feConvolveMatrix applies a matrix convolution filter effect. A convolution combines pixels in the input image with neighboring pixels to produce a resulting image. A wide variety of imaging operations can be achieved through convolutions, including blurring, edge detection, sharpening, embossing and beveling.

A matrix convolution is based on an n-by-m matrix (the convolution kernel) which describes how a given pixel value in the input image is combined with its neighboring pixel values to produce a resulting pixel value. Each result pixel is determined by applying the kernel matrix to the corresponding source pixel and its neighboring pixels. The basic convolution formula which is applied to each color value for a given pixel is:

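$$
\mathrm{COLOR}_{X,Y} =
\frac{\displaystyle\sum_{I=0}^{\mathit{orderY}-1} \; \sum_{J=0}^{\mathit{orderX}-1}
\mathrm{SOURCE}_{X-\mathit{targetX}+J,\; Y-\mathit{targetY}+I} \cdot
\mathrm{kernelMatrix}_{\mathit{orderX}-J-1,\; \mathit{orderY}-I-1}}
{\mathit{divisor}}
+ \mathit{bias} \cdot \mathrm{ALPHA}_{X,Y}
$$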

where "orderX" and "orderY" represent the X and Y values for the 'order' attribute, "targetX" represents the value of the 'targetX' attribute, "targetY" represents the value of the 'targetY' attribute, "kernelMatrix" represents the value of the 'kernelMatrix' attribute, "divisor" represents the value of the 'divisor' attribute, and "bias" represents the value of the 'bias' attribute.

Note in the above formulas that the values in the kernel matrix are applied such that the kernel matrix is rotated 180 degrees relative to the source and destination images in order to match convolution theory as described in many computer graphics textbooks.

To illustrate, suppose you have an input image which is 5 pixels by 5 pixels, whose color values for one of the color channels are as follows:

0 20 40 235 235

100 120 140 235 235

200 220 240 235 235

225 225 255 255 255

225 225 255 255 255

and you define a 3-by-3 convolution kernel as follows:

1 2 3

4 5 6

7 8 9

Let's focus on the color value at the second row and second column of the image (source pixel value is 120). Assuming the simplest case (where the input image's pixel grid aligns perfectly with the kernel's pixel grid) and assuming default values for attributes 'divisor', 'targetX' and 'targetY', then the resulting color value will be:

(9* 0 + 8* 20 + 7* 40 + 6*100 + 5*120 + 4*140 + 3*200 + 2*220 + 1*240) / (9+8+7+6+5+4+3+2+1)
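The worked example can be reproduced with a short Python sketch of the convolution formula. The function name `convolve_pixel` is hypothetical. The sketch assumes, consistently with the arithmetic above, that the default divisor is the sum of the kernel values and that the default target is the center of the kernel; it handles interior pixels only and performs no edge extension.

```python
def convolve_pixel(image, kernel, x, y, divisor=None, bias=0.0,
                   target_x=None, target_y=None, alpha=1.0):
    """Evaluate the convolution formula for one interior pixel of one channel.

    image and kernel are row-major lists of rows; x is the column, y the row.
    """
    order_y, order_x = len(kernel), len(kernel[0])
    if target_x is None:
        target_x = order_x // 2  # assumed default: kernel center
    if target_y is None:
        target_y = order_y // 2
    if divisor is None:
        # Assumed default: sum of the kernel values, or 1 if that sum is zero.
        divisor = sum(sum(row) for row in kernel) or 1
    total = 0.0
    for i in range(order_y):
        for j in range(order_x):
            source = image[y - target_y + i][x - target_x + j]
            # The kernel is applied rotated by 180 degrees.
            total += source * kernel[order_y - i - 1][order_x - j - 1]
    return total / divisor + bias * alpha


image = [
    [0, 20, 40, 235, 235],
    [100, 120, 140, 235, 235],
    [200, 220, 240, 235, 235],
    [225, 225, 255, 255, 255],
    [225, 225, 255, 255, 255],
]
kernel = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
value = convolve_pixel(image, kernel, x=1, y=1)
```

Here `value` equals 3480 / 45, or roughly 77.3, which is the quotient written out above.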

Because they operate on pixels, matrix convolutions are inherently resolution-dependent. To make 'feConvolveMatrix' produce resolution-independent results, an explicit value should be provided for the 'filterRes' attribute on the 'filter' element, for attribute 'kernelUnitLength', or for both.

'kernelUnitLength', in combination with the other attributes, defines an implicit pixel grid in the filter effects coordinate system (i.e., the coordinate system established by the 'primitiveUnits' attribute). If the pixel grid established by 'kernelUnitLength' is not scaled to match the pixel grid established by attribute 'filterRes' (implicitly or explicitly), then the input image will be temporarily rescaled to match its pixels with 'kernelUnitLength'. The convolution happens on the resampled image. After applying the convolution, the image is resampled back to the original resolution.

When the image must be resampled to match the coordinate system defined by 'kernelUnitLength' prior to convolution, or resampled to match the device coordinate system after convolution, it is recommended that high quality viewers make use of appropriate interpolation techniques, for example bilinear or bicubic. Depending on the speed of the available interpolants, this choice may be affected by the 'image-rendering' property setting. Note that implementations might choose approaches that minimize or eliminate resampling when not necessary to produce proper results, such as when the document is zoomed out such that 'kernelUnitLength' is considerably smaller than a device pixel.

Attribute definitions:

Determines how to extend the input image as necessary with color values so that the matrix operations can be applied when the kernel is positioned at or near the edge of the input image.

"duplicate" indicates that the input image is extended along each of its borders as necessary by duplicating the color values at the given edge of the input image.

Original N-by-M image, where m=M-1 and n=N-1:

11 12 ... 1m 1M

21 22 ... 2m 2M

.. .. ... .. ..

n1 n2 ... nm nM

N1 N2 ... Nm NM

Extended by two pixels using "duplicate":

11 11 11 12 ... 1m 1M 1M 1M

11 11 11 12 ... 1m 1M 1M 1M

11 11 11 12 ... 1m 1M 1M 1M

21 21 21 22 ... 2m 2M 2M 2M

.. .. .. .. ... .. .. .. ..

n1 n1 n1 n2 ... nm nM nM nM

N1 N1 N1 N2 ... Nm NM NM NM

N1 N1 N1 N2 ... Nm NM NM NM

N1 N1 N1 N2 ... Nm NM NM NM

"wrap" indicates that the input image is extended by taking the color values from the opposite edge of the image.

Extended by two pixels using "wrap":

nm nM n1 n2 ... nm nM n1 n2

Nm NM N1 N2 ... Nm NM N1 N2

1m 1M 11 12 ... 1m 1M 11 12

2m 2M 21 22 ... 2m 2M 21 22

.. .. .. .. ... .. .. .. ..

nm nM n1 n2 ... nm nM n1 n2

Nm NM N1 N2 ... Nm NM N1 N2

1m 1M 11 12 ... 1m 1M 11 12

2m 2M 21 22 ... 2m 2M 21 22

"none" indicates that the input image is extended with pixel values of zero for R, G, B and A.

If attribute 'edgeMode' is not specified, then the effect is as if a value of duplicate were specified.

Animatable: yes.
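The three edge modes can be illustrated with a small Python function that extends a single-channel image by a given number of pixels on every side. The name `extend_image` and its parameters are hypothetical; for RGBA data the `zero` argument would be a transparent black tuple instead of 0.

```python
def extend_image(image, pad, edge_mode="duplicate", zero=0):
    """Extend a row-major image by pad pixels on each side."""
    rows, cols = len(image), len(image[0])
    extended = []
    for y in range(-pad, rows + pad):
        row = []
        for x in range(-pad, cols + pad):
            if edge_mode == "duplicate":
                # Repeat the nearest edge value.
                sy = min(max(y, 0), rows - 1)
                sx = min(max(x, 0), cols - 1)
                row.append(image[sy][sx])
            elif edge_mode == "wrap":
                # Take values from the opposite edge.
                row.append(image[y % rows][x % cols])
            elif edge_mode == "none":
                inside = 0 <= y < rows and 0 <= x < cols
                row.append(image[y][x] if inside else zero)
            else:
                raise ValueError("unknown edge mode: %r" % edge_mode)
        extended.append(row)
    return extended
```

Applied to a 3-by-3 image whose values are 11 through 33 in the notation of the tables above, a two-pixel "duplicate" extension starts with the row 11 11 11 12 13 13 13, whereas "wrap" starts with 22 23 21 22 23 21 22.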

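When the convolution applies to all channels, including the alpha channel ('preserveAlpha' set to false), the ALPHA<sub>X,Y</sub> of the convolution formula for a given pixel is:

$$
\mathrm{ALPHA}_{X,Y} =
\frac{\displaystyle\sum_{I=0}^{\mathit{orderY}-1} \; \sum_{J=0}^{\mathit{orderX}-1}
\mathrm{SOURCE}_{X-\mathit{targetX}+J,\; Y-\mathit{targetY}+I} \cdot
\mathrm{kernelMatrix}_{\mathit{orderX}-J-1,\; \mathit{orderY}-I-1}}
{\mathit{divisor}}
+ \mathit{bias}
$$

When the convolution applies only to the color channels ('preserveAlpha' set to true), the ALPHA<sub>X,Y</sub> of the convolution formula for a given pixel is:

$$
\mathrm{ALPHA}_{X,Y} = \mathrm{SOURCE}_{X,Y}
$$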

This filter primitive lights an image using the alpha channel as a bump map. The resulting image is an RGBA opaque image based on the light color with alpha = 1.0 everywhere. The lighting calculation follows the standard diffuse component of the Phong lighting model. The resulting image depends on the light color, light position and surface geometry of the input bump map.

The light map produced by this filter primitive can be combined with a texture image using the multiply term of the arithmetic 'feComposite' compositing method. Multiple light sources can be simulated by adding several of these light maps together before applying it to the texture image.

The formulas below make use of 3x3 filters. Because they operate on pixels, such filters are inherently resolution-dependent. To make 'feDiffuseLighting' produce resolution-independent results, an explicit value should be provided for either the 'filter/filterRes' attribute on the 'filter element' element and/or attribute 'kernelUnitLength'.

'kernelUnitLength', in combination with the other attributes, defines an implicit pixel grid in the filter effects coordinate system (i.e., the coordinate system established by the 'filter/primitiveUnits' attribute). If the pixel grid established by 'kernelUnitLength' is not scaled to match the pixel grid established by attribute 'filter/filterRes' (implicitly or explicitly), then the input image will be temporarily rescaled to match its pixels with 'kernelUnitLength'. The 3x3 filters are applied to the resampled image. After applying the filter, the image is resampled back to its original resolution.

When the image must be resampled, it is recommended that high quality viewers make use of appropriate interpolation techniques, for example bilinear or bicubic. Depending on the speed of the available interpolants, this choice may be affected by the 'image-rendering' property setting. Note that implementations might choose approaches that minimize or eliminate resampling when not necessary to produce proper results, such as when the document is zoomed out such that 'kernelUnitLength' is considerably smaller than a device pixel.

For the formulas that follow, the $\mathrm{Norm}(A_x, A_y, A_z)$ function is defined as:

$$
\mathrm{Norm}(A_x, A_y, A_z) = \sqrt{A_x^2 + A_y^2 + A_z^2}
$$

The resulting RGBA image is computed as follows:

$$
\begin{aligned}
D_r &= k_d \, (N \cdot L) \, L_r \\
D_g &= k_d \, (N \cdot L) \, L_g \\
D_b &= k_d \, (N \cdot L) \, L_b \\
D_a &= 1.0
\end{aligned}
$$

where

N is a function of x and y and depends on the surface gradient as follows:

The surface described by the input alpha image I(x,y) is:

Z (x,y) = surfaceScale * I(x,y)

Surface normal is calculated using the Sobel gradient 3x3 filter. Different filter kernels are used depending on whether the given pixel is on the interior or an edge. For each case, the formula is:

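$$
\begin{aligned}
N_x(x,y) = -\,\mathrm{surfaceScale} \cdot \mathrm{FACTOR}_x \cdot \big(
 & K_x(0,0)\,I(x-dx,\,y-dy) + K_x(1,0)\,I(x,\,y-dy) + K_x(2,0)\,I(x+dx,\,y-dy) \\
 {}+{} & K_x(0,1)\,I(x-dx,\,y) + K_x(1,1)\,I(x,\,y) + K_x(2,1)\,I(x+dx,\,y) \\
 {}+{} & K_x(0,2)\,I(x-dx,\,y+dy) + K_x(1,2)\,I(x,\,y+dy) + K_x(2,2)\,I(x+dx,\,y+dy) \big)
\end{aligned}
$$

$$
\begin{aligned}
N_y(x,y) = -\,\mathrm{surfaceScale} \cdot \mathrm{FACTOR}_y \cdot \big(
 & K_y(0,0)\,I(x-dx,\,y-dy) + K_y(1,0)\,I(x,\,y-dy) + K_y(2,0)\,I(x+dx,\,y-dy) \\
 {}+{} & K_y(0,1)\,I(x-dx,\,y) + K_y(1,1)\,I(x,\,y) + K_y(2,1)\,I(x+dx,\,y) \\
 {}+{} & K_y(0,2)\,I(x-dx,\,y+dy) + K_y(1,2)\,I(x,\,y+dy) + K_y(2,2)\,I(x+dx,\,y+dy) \big)
\end{aligned}
$$

$$
N_z(x,y) = 1.0
$$

$$
N = \frac{(N_x, N_y, N_z)}{\mathrm{Norm}(N_x, N_y, N_z)}
$$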

In these formulas, the dx and dy values (e.g., I(x-dx,y-dy)) represent deltas relative to a given (x,y) position for the purpose of estimating the slope of the surface at that point. These deltas are determined by the value (explicit or implicit) of attribute 'kernelUnitLength'.

| Top/left corner: FACTORx=2/(3*dx) | Top row: FACTORx=1/(3*dx) | Top/right corner: FACTORx=2/(3*dx) |
| Left column: FACTORx=1/(2*dx) | Interior pixels: FACTORx=1/(4*dx) | Right column: FACTORx=1/(2*dx) |
| Bottom/left corner: FACTORx=2/(3*dx) | Bottom row: FACTORx=1/(3*dx) | Bottom/right corner: FACTORx=2/(3*dx) |

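L, the unit vector from the image sample to the light, is calculated as follows:

For Infinite light sources it is constant:

$$
\begin{aligned}
L_x &= \cos(\mathrm{azimuth}) \cos(\mathrm{elevation}) \\
L_y &= \sin(\mathrm{azimuth}) \cos(\mathrm{elevation}) \\
L_z &= \sin(\mathrm{elevation})
\end{aligned}
$$

For Point and spot lights it is a function of position:

$$
\begin{aligned}
L_x &= \mathrm{Light}_x - x \\
L_y &= \mathrm{Light}_y - y \\
L_z &= \mathrm{Light}_z - Z(x,y) \\
L &= \frac{(L_x, L_y, L_z)}{\mathrm{Norm}(L_x, L_y, L_z)}
\end{aligned}
$$

where $\mathrm{Light}_x$, $\mathrm{Light}_y$, and $\mathrm{Light}_z$ are the input light position.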

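$L_r, L_g, L_b$, the light color vector, is a function of position in the spot light case only:

$$
\begin{aligned}
L_r &= \mathrm{Light}_r \cdot \mathrm{pow}(-L \cdot S,\ \mathrm{specularExponent}) \\
L_g &= \mathrm{Light}_g \cdot \mathrm{pow}(-L \cdot S,\ \mathrm{specularExponent}) \\
L_b &= \mathrm{Light}_b \cdot \mathrm{pow}(-L \cdot S,\ \mathrm{specularExponent})
\end{aligned}
$$

where S is the unit vector pointing from the light to the point (pointsAtX, pointsAtY, pointsAtZ) in the x-y plane:

$$
\begin{aligned}
S_x &= \mathrm{pointsAtX} - \mathrm{Light}_x \\
S_y &= \mathrm{pointsAtY} - \mathrm{Light}_y \\
S_z &= \mathrm{pointsAtZ} - \mathrm{Light}_z \\
S &= \frac{(S_x, S_y, S_z)}{\mathrm{Norm}(S_x, S_y, S_z)}
\end{aligned}
$$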

If L.S is positive, no light is present. (Lr = Lg = Lb = 0). If 'feSpotLight/limitingConeAngle' is specified, -L.S < cos(limitingConeAngle) also indicates that no light is present.

Attribute definitions:

dx and dy, respectively, in the surface normal calculation formulas. By specifying value(s) for 'kernelUnitLength', the kernel becomes defined in a scalable, abstract coordinate system. If 'kernelUnitLength' is not specified, the dx and dy values should represent very small deltas relative to a given (x,y) position, which might be implemented in some cases as one pixel in the intermediate image offscreen bitmap, which is a pixel-based coordinate system, and thus potentially not scalable. For some level of consistency across display media and user agents, it is necessary that a value be provided for at least one of 'filter/filterRes' and 'kernelUnitLength'. Discussion of intermediate images is in the Introduction and in the description of attribute 'filter/filterRes'.

The light source is defined by one of the child elements 'feDistantLight', 'fePointLight' or 'feSpotLight'. The light color is specified by property 'lighting-color'.

This filter primitive uses the pixel values from the image from 'in2' to spatially displace the image from 'in'. This is the transformation to be performed:

P'(x,y) <- P( x + scale * (XC(x,y) - .5), y + scale * (YC(x,y) - .5))

where P(x,y) is the input image, 'in', and P'(x,y) is the destination. XC(x,y) and YC(x,y) are the component values of the channel designated by the xChannelSelector and yChannelSelector. For example, to use the R component of 'in2' to control displacement in x and the G component of 'in2' to control displacement in y, set xChannelSelector to "R" and yChannelSelector to "G".

The displacement map defines the inverse of the mapping performed.
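The transformation can be sketched as a lookup of the source coordinate for each destination pixel. The function name `displacement_source` is hypothetical, and the sketch assumes the channel values XC and YC have already been converted to non-premultiplied values in the range 0 to 1.

```c
#include <assert.h>

/*
 * For destination pixel (x, y), compute the location in 'in' whose color
 * is copied to the destination. xc and yc are the selected channel values
 * of 'in2' at (x, y), in the range [0, 1].
 */
void displacement_source(double x, double y, double scale,
                         double xc, double yc,
                         double *srcX, double *srcY)
{
    assert(xc >= 0.0 && xc <= 1.0 && yc >= 0.0 && yc <= 1.0);
    *srcX = x + scale * (xc - 0.5);
    *srcY = y + scale * (yc - 0.5);
}
```

A channel value of 0.5 leaves the pixel in place, while values of 0 and 1 displace the lookup by half of scale in opposite directions. The function computes where to read from rather than where to write to, which is why the map defines the inverse of the mapping performed.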

The input image 'in' is to remain premultiplied for this filter primitive. The calculations using the pixel values from 'in2' are performed using non-premultiplied color values. If the image from 'in2' consists of premultiplied color values, those values are automatically converted into non-premultiplied color values before performing this operation.

This filter can have arbitrary non-localized effect on the input which might require substantial buffering in the processing pipeline. However, with this formulation, any intermediate buffering needs can be determined by scale, which represents the maximum range of displacement in either x or y.

When applying this filter, the source pixel location will often lie between several source pixels. In this case it is recommended that high quality viewers apply an interpolant on the surrounding pixels, for example bilinear or bicubic, rather than simply selecting the nearest source pixel. Depending on the speed of the available interpolants, this choice may be affected by the 'image-rendering' property setting.

The 'color-interpolation-filters' property only applies to the 'in2' source image and does not apply to the 'in' source image. The 'in' source image must remain in its current color space.

Attribute definitions:

This filter primitive creates a rectangle filled with the color and opacity values from properties 'flood-color' and 'flood-opacity'. The rectangle is as large as the filter primitive subregion established by the 'x', 'y', 'width' and 'height' attributes on the 'feFlood' element.

The 'flood-color' property indicates what color to use to flood the current filter primitive subregion. The keyword currentColor and ICC colors can be specified in the same manner as within a <paint> specification for the 'fill' and 'stroke' properties.

| Value: | currentColor \| <color> [<icccolor>] \| inherit |
| Initial: | black |
| Applies to: | 'feFlood' elements |
| Inherited: | no |
| Percentages: | N/A |
| Media: | visual |
| Animatable: | yes |

The 'flood-opacity' property defines the opacity value to use across the entire filter primitive subregion.

| Value: | <opacity-value> \| inherit |
| Initial: | 1 |
| Applies to: | 'feFlood' elements |
| Inherited: | no |
| Percentages: | N/A |
| Media: | visual |
| Animatable: | yes |

This filter primitive performs a Gaussian blur on the input image.

The Gaussian blur kernel is an approximation of the normalized convolution:

$$
G(x,y) = H(x)\,I(y)
$$

where

$$
H(x) = \frac{\exp\!\left(-x^2 / (2s^2)\right)}{\sqrt{2\pi s^2}}
$$

and

$$
I(y) = \frac{\exp\!\left(-y^2 / (2t^2)\right)}{\sqrt{2\pi t^2}}
$$

with 's' being the standard deviation in the x direction and 't' being the standard deviation in the y direction, as specified by 'stdDeviation'.

The value of 'stdDeviation' can be either one or two numbers. If two numbers are provided, the first number represents a standard deviation value along the x-axis of the current coordinate system and the second value represents a standard deviation in Y. If one number is provided, then that value is used for both X and Y.

Even if only one value is provided for 'stdDeviation', this can be implemented as a separable convolution.

For larger values of 's' (s >= 2.0), an approximation can be used: Three successive box-blurs build a piece-wise quadratic convolution kernel, which approximates the Gaussian kernel to within roughly 3%.

let d = floor(s * 3*sqrt(2*pi)/4 + 0.5)

... if d is odd, use three box-blurs of size 'd', centered on the output pixel.

... if d is even, two box-blurs of size 'd' (the first one centered on the pixel boundary between the output pixel and the one to the left, the second one centered on the pixel boundary between the output pixel and the one to the right) and one box blur of size 'd+1' centered on the output pixel.

Note: the approximation formula also applies correspondingly to 't'.

Frequently this operation will take place on alpha-only images, such as that produced by the built-in input, SourceAlpha. The implementation may notice this and optimize the single channel case. If the input has infinite extent and is constant (e.g., FillPaint where the fill is a solid color), this operation has no effect. If the input has infinite extent and the filter result is the input to an 'feTile', the filter is evaluated with periodic boundary conditions.

Attribute definitions:

The example at the start of this chapter makes use of the 'feGaussianBlur' filter primitive to create a drop shadow effect.

This filter primitive refers to a graphic external to this filter element, which is loaded or rendered into an RGBA raster and becomes the result of the filter primitive.

This filter primitive can refer to an external image or can be a reference to another piece of SVG. It produces an image similar to the built-in image source SourceGraphic except that the graphic comes from an external source.

If the 'xlink:href' references a stand-alone image resource such as a JPEG, PNG or SVG file, then the image resource is rendered according to the behavior of the 'image' element; otherwise, the referenced resource is rendered according to the behavior of the 'use' element. In either case, the current user coordinate system depends on the value of attribute 'filter/primitiveUnits' on the 'filter element' element. The processing of the 'preserveAspectRatio' attribute on the 'feImage' element is identical to that of the 'image' element.

When the referenced image must be resampled to match the device coordinate system, it is recommended that high quality viewers make use of appropriate interpolation techniques, for example bilinear or bicubic. Depending on the speed of the available interpolants, this choice may be affected by the 'image-rendering' property setting.

Attribute definitions:

An IRI reference to the image source.

Animatable: yes.

If attribute 'preserveAspectRatio' is not specified, then the effect is as if a value of xMidYMid meet were specified.

Animatable: yes.

Example feImage illustrates how images are placed relative to an object. From left to right:

This filter primitive composites input image layers on top of each other using the over operator with Input1 (corresponding to the first 'feMergeNode' child element) on the bottom and the last specified input, InputN (corresponding to the last 'feMergeNode' child element), on top.

Many effects produce a number of intermediate layers in order to create the final output image. This filter allows us to collapse those into a single image. Although this could be done by using n-1 Composite-filters, it is more convenient to have this common operation available in this form, and offers the implementation some additional flexibility.

Each 'feMerge' element can have any number of 'feMergeNode' subelements, each of which has an 'feMergeNode/in' attribute.

The canonical implementation of feMerge is to render the entire effect into one RGBA layer, and then render the resulting layer on the output device. In certain cases (in particular if the output device itself is a continuous tone device), and since merging is associative, it might be a sufficient approximation to evaluate the effect one layer at a time and render each layer individually onto the output device bottom to top.
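Merging with the over operator can be sketched per pixel as follows. The sketch assumes premultiplied RGBA values in the range 0 to 1; the `Rgba` type and the function names `over` and `merge_layers` are hypothetical.

```c
#include <assert.h>

/* One premultiplied RGBA pixel (an assumption of this sketch). */
typedef struct { double r, g, b, a; } Rgba;

/* Porter-Duff "over": top composited on bottom, premultiplied colors. */
Rgba over(Rgba top, Rgba bottom)
{
    double k = 1.0 - top.a;
    Rgba out = {
        top.r + bottom.r * k,
        top.g + bottom.g * k,
        top.b + bottom.b * k,
        top.a + bottom.a * k
    };
    return out;
}

/* Merge n layers; layers[0] is the bottom, layers[n-1] is on top. */
Rgba merge_layers(const Rgba *layers, int n)
{
    assert(n >= 0);
    Rgba result = {0.0, 0.0, 0.0, 0.0};   /* start from transparent black */
    for (int i = 0; i < n; i++)
        result = over(layers[i], result);
    return result;
}
```

The loop processes the inputs in 'feMergeNode' order, so the first node ends up at the bottom and the last node on top.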

If the topmost image input is SourceGraphic and this 'feMerge' is the last filter primitive in the filter, the implementation is encouraged to render the layers up to that point, and then render the SourceGraphic directly from its vector description on top.

The example at the start of this chapter makes use of the 'feMerge' filter primitive to composite two intermediate filter results together.

This filter primitive performs "fattening" or "thinning" of artwork. It is particularly useful for fattening or thinning an alpha channel.

The dilation (or erosion) kernel is a rectangle with a width of 2*x-radius and a height of 2*y-radius. In dilation, the output pixel is the individual component-wise maximum of the corresponding R,G,B,A values in the input image's kernel rectangle. In erosion, the output pixel is the individual component-wise minimum of the corresponding R,G,B,A values in the input image's kernel rectangle.
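The component-wise maximum and minimum can be sketched for one channel as below. Several details are assumptions of this example rather than statements from the text: radii are whole pixels, the kernel rectangle is taken to span x-rx to x+rx and y-ry to y+ry inclusive, channel values lie in the range 0 to 1, and pixels outside the image count as transparent black. The function name `morphology_pixel` is hypothetical.

```c
#include <assert.h>

/*
 * Dilate (dilate != 0) or erode (dilate == 0) one channel at pixel (x, y).
 * src is a width x height image stored row by row.
 */
double morphology_pixel(const double *src, int width, int height,
                        int x, int y, int rx, int ry, int dilate)
{
    assert(rx >= 0 && ry >= 0);
    double best = dilate ? 0.0 : 1.0;
    for (int j = y - ry; j <= y + ry; j++) {
        for (int i = x - rx; i <= x + rx; i++) {
            int outside = i < 0 || j < 0 || i >= width || j >= height;
            double v = outside ? 0.0 : src[j * width + i];
            if (dilate ? (v > best) : (v < best))
                best = v;
        }
    }
    return best;
}
```

Applying the function to each of the R, G, B and A channels in turn gives the component-wise result described above.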

Frequently this operation will take place on alpha-only images, such as that produced by the built-in input, SourceAlpha. In that case, the implementation might want to optimize the single channel case.

If the input has infinite extent and is constant (e.g., FillPaint where the fill is a solid color), this operation has no effect. If the input has infinite extent and the filter result is the input to an 'feTile', the filter is evaluated with periodic boundary conditions.

Because 'feMorphology' operates on premultiplied color values, it will always result in color values less than or equal to the alpha channel.

Attribute definitions:

Example feMorphology shows examples of the four types of feMorphology operations.

This filter primitive offsets the input image relative to its current position in the image space by the specified vector.

This is important for effects like drop shadows.

When applying this filter, the destination location may be offset by a fraction of a pixel in device space. In this case a high quality viewer should make use of appropriate interpolation techniques, for example bilinear or bicubic. This is especially recommended for dynamic viewers where this interpolation provides visually smoother movement of images. For static viewers this is less of a concern. Close attention should be paid to the 'image-rendering' property setting to determine the author's intent.

Attribute definitions:

The example at the start of this chapter makes use of the 'feOffset' filter primitive to offset the drop shadow from the original source graphic.

This filter primitive lights a source graphic using the alpha channel as a bump map. The resulting image is an RGBA image based on the light color. The lighting calculation follows the standard specular component of the Phong lighting model. The resulting image depends on the light color, light position and surface geometry of the input bump map. The result of the lighting calculation is added. The filter primitive assumes that the viewer is at infinity in the z direction (i.e., the unit vector in the eye direction is (0,0,1) everywhere).

This filter primitive produces an image which contains the specular reflection part of the lighting calculation. Such a map is intended to be combined with a texture using the add term of the arithmetic 'feComposite' method. Multiple light sources can be simulated by adding several of these light maps before applying it to the texture image.

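The resulting RGBA image is computed as follows:

$$
\begin{aligned}
S_r &= k_s \cdot \mathrm{pow}(N \cdot H,\ \mathrm{specularExponent}) \cdot L_r \\
S_g &= k_s \cdot \mathrm{pow}(N \cdot H,\ \mathrm{specularExponent}) \cdot L_g \\
S_b &= k_s \cdot \mathrm{pow}(N \cdot H,\ \mathrm{specularExponent}) \cdot L_b \\
S_a &= \max(S_r, S_g, S_b)
\end{aligned}
$$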

where

See 'feDiffuseLighting' for definition of N and (Lr, Lg, Lb).

The definition of H reflects our assumption of the constant eye vector E = (0,0,1):

H = (L + E) / Norm(L+E)

where L is the light unit vector.

Unlike the 'feDiffuseLighting', the 'feSpecularLighting' filter produces a non-opaque image. This is due to the fact that the specular result (Sr,Sg,Sb,Sa) is meant to be added to the textured image. The alpha channel of the result is the max of the color components, so that where the specular light is zero, no additional coverage is added to the image and a fully white highlight will add opacity.

The 'feDiffuseLighting' and 'feSpecularLighting' filters will often be applied together. An implementation may detect this and calculate both maps in one pass, instead of two.

Attribute definitions:

dx and dy, respectively, in the surface normal calculation formulas. By specifying value(s) for 'kernelUnitLength', the kernel becomes defined in a scalable, abstract coordinate system. If 'kernelUnitLength' is not specified, the dx and dy values should represent very small deltas relative to a given (x,y) position, which might be implemented in some cases as one pixel in the intermediate image offscreen bitmap, which is a pixel-based coordinate system, and thus potentially not scalable. For some level of consistency across display media and user agents, it is necessary that a value be provided for at least one of 'filter/filterRes' and 'kernelUnitLength'. Discussion of intermediate images is in the Introduction and in the description of attribute 'filter/filterRes'.

The light source is defined by one of the child elements 'feDistantLight', 'fePointLight' or 'feSpotLight'. The light color is specified by property 'lighting-color'.

The example at the start of this chapter makes use of the 'feSpecularLighting' filter primitive to achieve a highly reflective, 3D glowing effect.

This filter primitive fills a target rectangle with a repeated, tiled pattern of an input image. The target rectangle is as large as the filter primitive subregion established by the 'x', 'y', 'width' and 'height' attributes on the 'feTile' element.

Typically, the input image has been defined with its own filter primitive subregion in order to define a reference tile. 'feTile' replicates the reference tile in both X and Y to completely fill the target rectangle. The top/left corner of each given tile is at location (x+i*width,y+j*height), where (x,y) represents the top/left of the input image's filter primitive subregion, width and height represent the width and height of the input image's filter primitive subregion, and i and j can be any integer value. In most cases, the input image will have a smaller filter primitive subregion than the 'feTile' in order to achieve a repeated pattern effect.

Implementers must take appropriate measures in constructing the tiled image to avoid artifacts between tiles, particularly in situations where the user to device transform includes shear and/or rotation. Unless care is taken, interpolation can lead to edge pixels in the tile having opacity values lower or higher than expected due to the interaction of painting adjacent tiles which each have partial overlap with particular pixels.

This filter primitive creates an image using the Perlin turbulence function. It allows the synthesis of artificial textures like clouds or marble. For a detailed description of the Perlin turbulence function, see "Texturing and Modeling", Ebert et al, AP Professional, 1994. The resulting image will fill the entire filter primitive subregion for this filter primitive.

It is possible to create bandwidth-limited noise by synthesizing only one octave.

The C code below shows the exact algorithm used for this filter effect.

For fractalSum, you get a turbFunctionResult that is aimed at a range of -1 to 1 (the actual result might exceed this range in some cases). To convert to a color value, use the formula colorValue = ((turbFunctionResult * 255) + 255) / 2, then clamp to the range 0 to 255.

For turbulence, you get a turbFunctionResult that is aimed at a range of 0 to 1 (the actual result might exceed this range in some cases). To convert to a color value, use the formula colorValue = (turbFunctionResult * 255), then clamp to the range 0 to 255.
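The two conversions can be sketched directly from the formulas above. The function names are hypothetical, and the results are left as doubles because the text does not say how they are rounded to integer color values.

```c
#include <assert.h>
#include <math.h>

/* Clamp a color value to the range 0 to 255. */
static double clamp_color(double v)
{
    assert(!isnan(v));
    if (v < 0.0) return 0.0;
    if (v > 255.0) return 255.0;
    return v;
}

/* fractalSum case: results aimed at the range -1 to 1. */
double fractal_sum_to_color(double turbFunctionResult)
{
    return clamp_color(((turbFunctionResult * 255.0) + 255.0) / 2.0);
}

/* turbulence case: results aimed at the range 0 to 1. */
double turbulence_to_color(double turbFunctionResult)
{
    return clamp_color(turbFunctionResult * 255.0);
}
```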

The following order is used for applying the pseudo random numbers. An initial seed value is computed based on attribute 'seed'. Then the implementation computes the lattice points for R, then continues getting additional pseudo random numbers relative to the last generated pseudo random number and computes the lattice points for G, and so on for B and A.

The generated color and alpha values are in the color space determined by the value of property 'color-interpolation-filters':

    #include <math.h>     /* sqrt, floor, ceil, fabs */
    #include <stdbool.h>  /* bool */
    #include <stddef.h>   /* NULL */

    /* Produces results in the range [1, 2**31 - 2].
       Algorithm is: r = (a * r) mod m
       where a = 16807 and m = 2**31 - 1 = 2147483647
       See [Park & Miller], CACM vol. 31 no. 10 p. 1195, Oct. 1988
       To test: the algorithm should produce the result 1043618065
       as the 10,000th generated number if the original seed is 1.
    */
    #define RAND_m 2147483647 /* 2**31 - 1 */
    #define RAND_a 16807      /* 7**5; primitive root of m */
    #define RAND_q 127773     /* m / a */
    #define RAND_r 2836       /* m % a */

    long setup_seed(long lSeed)
    {
        if (lSeed <= 0) lSeed = -(lSeed % (RAND_m - 1)) + 1;
        if (lSeed > RAND_m - 1) lSeed = RAND_m - 1;
        return lSeed;
    }

    /* Note: this name clashes with POSIX random() if <stdlib.h> is included. */
    long random(long lSeed)
    {
        long result;
        result = RAND_a * (lSeed % RAND_q) - RAND_r * (lSeed / RAND_q);
        if (result <= 0) result += RAND_m;
        return result;
    }

    #define BSize 0x100
    #define BM 0xff
    #define PerlinN 0x1000
    #define NP 12 /* PerlinN == 2^NP */
    #define NM 0xfff

    static int uLatticeSelector[BSize + BSize + 2];
    static double fGradient[4][BSize + BSize + 2][2];

    typedef struct StitchInfo
    {
        int nWidth;  // How much to subtract to wrap for stitching.
        int nHeight;
        int nWrapX;  // Minimum value to wrap.
        int nWrapY;
    } StitchInfo;

    static void init(long lSeed)
    {
        double s;
        int i, j, k;
        lSeed = setup_seed(lSeed);
        for (k = 0; k < 4; k++)
        {
            for (i = 0; i < BSize; i++)
            {
                uLatticeSelector[i] = i;
                for (j = 0; j < 2; j++)
                    fGradient[k][i][j] = (double)(((lSeed = random(lSeed)) % (BSize + BSize)) - BSize) / BSize;
                // Normalize the gradient vector to unit length.
                s = sqrt(fGradient[k][i][0] * fGradient[k][i][0] + fGradient[k][i][1] * fGradient[k][i][1]);
                fGradient[k][i][0] /= s;
                fGradient[k][i][1] /= s;
            }
        }
        // Shuffle the lattice selector; i equals BSize when this loop starts.
        while (--i)
        {
            k = uLatticeSelector[i];
            uLatticeSelector[i] = uLatticeSelector[j = (int)((lSeed = random(lSeed)) % BSize)];
            uLatticeSelector[j] = k;
        }
        // Duplicate the tables so that indices up to 2 * BSize + 1 are valid.
        for (i = 0; i < BSize + 2; i++)
        {
            uLatticeSelector[BSize + i] = uLatticeSelector[i];
            for (k = 0; k < 4; k++)
                for (j = 0; j < 2; j++)
                    fGradient[k][BSize + i][j] = fGradient[k][i][j];
        }
    }

    #define s_curve(t) ( (t) * (t) * (3. - 2. * (t)) )
    #define lerp(t, a, b) ( (a) + (t) * ((b) - (a)) )

    double noise2(int nColorChannel, double vec[2], StitchInfo *pStitchInfo)
    {
        int bx0, bx1, by0, by1, b00, b10, b01, b11;
        double rx0, rx1, ry0, ry1, *q, sx, sy, a, b, t, u, v;
        int i, j;
        t = vec[0] + PerlinN;
        bx0 = (int)t;
        bx1 = bx0 + 1;
        rx0 = t - (int)t;
        rx1 = rx0 - 1.0;
        t = vec[1] + PerlinN;
        by0 = (int)t;
        by1 = by0 + 1;
        ry0 = t - (int)t;
        ry1 = ry0 - 1.0;
        // If stitching, adjust lattice points accordingly.
        if (pStitchInfo != NULL)
        {
            if (bx0 >= pStitchInfo->nWrapX)
                bx0 -= pStitchInfo->nWidth;
            if (bx1 >= pStitchInfo->nWrapX)
                bx1 -= pStitchInfo->nWidth;
            if (by0 >= pStitchInfo->nWrapY)
                by0 -= pStitchInfo->nHeight;
            if (by1 >= pStitchInfo->nWrapY)
                by1 -= pStitchInfo->nHeight;
        }
        bx0 &= BM;
        bx1 &= BM;
        by0 &= BM;
        by1 &= BM;
        i = uLatticeSelector[bx0];
        j = uLatticeSelector[bx1];
        b00 = uLatticeSelector[i + by0];
        b10 = uLatticeSelector[j + by0];
        b01 = uLatticeSelector[i + by1];
        b11 = uLatticeSelector[j + by1];
        sx = s_curve(rx0);
        sy = s_curve(ry0);
        q = fGradient[nColorChannel][b00]; u = rx0 * q[0] + ry0 * q[1];
        q = fGradient[nColorChannel][b10]; v = rx1 * q[0] + ry0 * q[1];
        a = lerp(sx, u, v);
        q = fGradient[nColorChannel][b01]; u = rx0 * q[0] + ry1 * q[1];
        q = fGradient[nColorChannel][b11]; v = rx1 * q[0] + ry1 * q[1];
        b = lerp(sx, u, v);
        return lerp(sy, a, b);
    }

    double turbulence(int nColorChannel, double *point, double fBaseFreqX, double fBaseFreqY,
                      int nNumOctaves, bool bFractalSum, bool bDoStitching,
                      double fTileX, double fTileY, double fTileWidth, double fTileHeight)
    {
        StitchInfo stitch;
        StitchInfo *pStitchInfo = NULL; // Not stitching when NULL.
        // Adjust the base frequencies if necessary for stitching.
        if (bDoStitching)
        {
            // When stitching tiled turbulence, the frequencies must be adjusted
            // so that the tile borders will be continuous.
            if (fBaseFreqX != 0.0)
            {
                double fLoFreq = floor(fTileWidth * fBaseFreqX) / fTileWidth;
                double fHiFreq = ceil(fTileWidth * fBaseFreqX) / fTileWidth;
                if (fBaseFreqX / fLoFreq < fHiFreq / fBaseFreqX)
                    fBaseFreqX = fLoFreq;
                else
                    fBaseFreqX = fHiFreq;
            }
            if (fBaseFreqY != 0.0)
            {
                double fLoFreq = floor(fTileHeight * fBaseFreqY) / fTileHeight;
                double fHiFreq = ceil(fTileHeight * fBaseFreqY) / fTileHeight;
                if (fBaseFreqY / fLoFreq < fHiFreq / fBaseFreqY)
                    fBaseFreqY = fLoFreq;
                else
                    fBaseFreqY = fHiFreq;
            }
            // Set up initial stitch values.
            pStitchInfo = &stitch;
            stitch.nWidth = (int)(fTileWidth * fBaseFreqX + 0.5);
            stitch.nWrapX = (int)(fTileX * fBaseFreqX + PerlinN + stitch.nWidth);
            stitch.nHeight = (int)(fTileHeight * fBaseFreqY + 0.5);
            stitch.nWrapY = (int)(fTileY * fBaseFreqY + PerlinN + stitch.nHeight);
        }
        double fSum = 0.0;
        double vec[2];
        vec[0] = point[0] * fBaseFreqX;
        vec[1] = point[1] * fBaseFreqY;
        double ratio = 1;
        for (int nOctave = 0; nOctave < nNumOctaves; nOctave++)
        {
            if (bFractalSum)
                fSum += noise2(nColorChannel, vec, pStitchInfo) / ratio;
            else
                fSum += fabs(noise2(nColorChannel, vec, pStitchInfo)) / ratio;
            vec[0] *= 2;
            vec[1] *= 2;
            ratio *= 2;
            if (pStitchInfo != NULL)
            {
                // Update stitch values. Subtracting PerlinN before the multiplication and
                // adding it afterward simplifies to subtracting it once.
                stitch.nWidth *= 2;
                stitch.nWrapX = 2 * stitch.nWrapX - PerlinN;
                stitch.nHeight *= 2;
                stitch.nWrapY = 2 * stitch.nWrapY - PerlinN;
            }
        }
        return fSum;
    }

Attribute definitions:

lowFreq=floor(width*frequency)/width and hiFreq=ceil(width*frequency)/width. If frequency/lowFreq < hiFreq/frequency then use lowFreq, else use hiFreq. While generating turbulence values, generate lattice vectors as normal for Perlin Noise, except for those lattice points that lie on the right or bottom edges of the active area (the size of the resulting tile). In those cases, copy the lattice vector from the opposite edge of the active area.

If attribute 'stitchTiles' is not specified, then the effect is as if a value of noStitch were specified.

Example feTurbulence shows the effects of various parameter settings for feTurbulence.