3.1 Introduction

The MediaStream interface is used to represent streams of media data, typically (but not necessarily) of audio and/or video content, e.g. from a local camera or a remote site. The data from a MediaStream object does not necessarily have a canonical binary form; for example, it could just be "the video currently coming from the user's video camera". This allows user agents to manipulate media streams in whatever fashion is most suitable on the user's platform.

Each MediaStream object can represent zero or more tracks, in particular audio and video tracks. Tracks can contain multiple channels of parallel data; for example, a single audio track could have nine channels of audio data to represent a 7.2 surround sound audio track.

Each track represented by a MediaStream object has a corresponding MediaStreamTrack object.

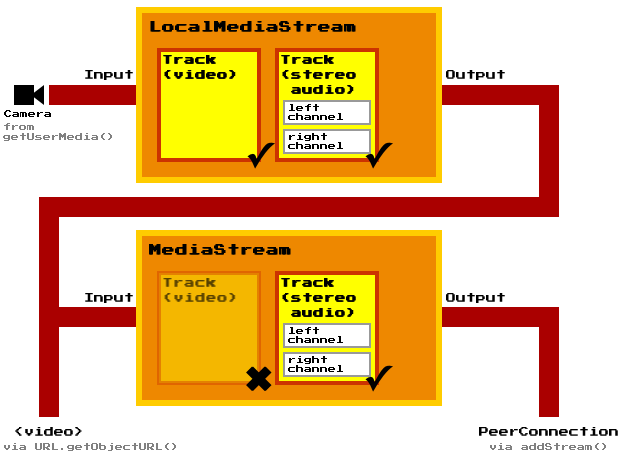

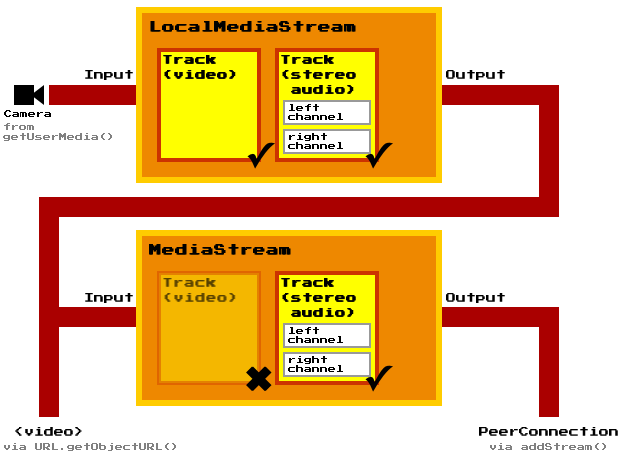

A MediaStream object has an input and an output. The input depends on how the object was created: a LocalMediaStream object generated by a getUserMedia() call, for instance, might take its input from the user's local camera, while a MediaStream created by a PeerConnection object will take as input the data received from a remote peer. The output of the object controls how the object is used, e.g. what is saved if the object is written to a file, what is displayed if the object is used in a video element, or indeed what is transmitted to a remote peer if the object is used with a PeerConnection object.

Each track in a MediaStream object can be disabled, meaning that it is muted in the object's output. All tracks are initially enabled.

A MediaStream can be finished, indicating that its inputs have forever stopped providing data. When a MediaStream object is finished, all its tracks are muted regardless of whether they are enabled or disabled.

The output of a MediaStream object must correspond to the tracks in its input. Muted audio tracks must be replaced with silence. Muted video tracks must be replaced with blackness.
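The enabled, finished, and muted rules above can be summarised in a small model. The following is a minimal sketch in plain JavaScript, not the interface defined by this specification: `SketchStream`, `addTrack`, `setEnabled`, `finish`, `isMuted`, and `output` are hypothetical names chosen for illustration, and the strings `'live'`, `'silence'`, and `'blackness'` merely stand in for actual media data.

```javascript
// Hypothetical model of the muting rules; not the real MediaStream API.
class SketchStream {
  constructor() {
    this.tracks = [];      // each entry: { kind, label, enabled }
    this.finished = false; // true once the inputs have stopped for good
  }

  // All tracks are initially enabled.
  addTrack(kind, label) {
    const track = { kind, label, enabled: true };
    this.tracks.push(track);
    return track;
  }

  setEnabled(track, enabled) {
    track.enabled = enabled;
  }

  finish() {
    this.finished = true;
  }

  // A track is muted if it is disabled or if the stream has finished.
  isMuted(track) {
    return this.finished || !track.enabled;
  }

  // The output has one entry per input track. Muted tracks are replaced
  // with silence (audio) or blackness (video) rather than dropped.
  output() {
    return this.tracks.map((track) => {
      if (!this.isMuted(track)) {
        return { label: track.label, content: 'live' };
      }
      const content = track.kind === 'audio' ? 'silence' : 'blackness';
      return { label: track.label, content };
    });
  }
}
```

In this model, disabling a track and finishing the stream both lead to the same placeholder output, but only the former is reversible.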

A MediaStream object's output can be "forked" by creating a new MediaStream object from it using the MediaStream() constructor. The new MediaStream object's input is the output of the object from which it was created, with any disabled tracks removed, and its output is therefore at most a subset of that "parent" object. (Merely muted tracks are not removed, so the tracks do not change when the parent is finished.) When such a fork's parent finishes, the fork is also said to have finished.

This can be used, for instance, in a video-conferencing scenario to display the local video from the user's camera and microphone in a local monitor, while only transmitting the audio to the remote peer (e.g. in response to the user using a "video mute" feature).
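The forking rule can be illustrated with plain objects. This is a rough sketch under stated assumptions, not the MediaStream() constructor itself: `makeStream`, `fork`, and `isFinished` are hypothetical helpers, and a track is reduced to a `{ kind, enabled }` record.

```javascript
// Hypothetical sketch of forking; plain objects stand in for MediaStream.
function makeStream(tracks, parent = null) {
  return { tracks, parent, finished: false };
}

// The fork's input is the parent's output with disabled tracks removed.
// Assumption: tracks in the fork start out enabled, like any other track.
function fork(parent) {
  const kept = parent.tracks
    .filter((track) => track.enabled)
    .map((track) => ({ kind: track.kind, enabled: true }));
  return makeStream(kept, parent);
}

// A fork counts as finished once it, or any ancestor, has finished.
function isFinished(stream) {
  for (let s = stream; s !== null; s = s.parent) {
    if (s.finished) return true;
  }
  return false;
}

// Forking while the video track is disabled yields an audio-only stream.
const local = makeStream([
  { kind: 'audio', enabled: true },
  { kind: 'video', enabled: false },
]);
const audioOnly = fork(local);
```

Note that `fork` filters on `enabled` only. A finished parent still hands all of its enabled tracks to the fork, which matches the remark that merely muted tracks are not removed.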

When a track in a MediaStream parent is disabled, the MediaStreamTrack objects that correspond to that track in any MediaStream objects created from parent are disassociated from any track, and must not be reused for tracks again. If a disabled track in parent is re-enabled, then from the perspective of any MediaStream objects created from parent it is a new track, and new MediaStreamTrack objects must therefore be created for the tracks that correspond to the re-enabled track.
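The life cycle of the track objects held by forks can be sketched as follows. This is one possible reading of the rule, written as a toy model: `ParentTrack`, `attachChild`, `newTrackObject`, and the `associated` flag are hypothetical and do not appear in the MediaStreamTrack interface.

```javascript
// Hypothetical model: one parent track plus the track objects that forked
// streams hold for it. Not the real MediaStreamTrack interface.
class ParentTrack {
  constructor(label) {
    this.label = label;
    this.enabled = true;
    this.children = []; // one record per fork: { current: object | null }
  }

  newTrackObject() {
    return { label: this.label, associated: true };
  }

  // Register a fork. A fork never sees a track that is currently disabled.
  attachChild() {
    const child = { current: this.enabled ? this.newTrackObject() : null };
    this.children.push(child);
    return child;
  }

  disable() {
    if (!this.enabled) return;
    this.enabled = false;
    for (const child of this.children) {
      if (child.current !== null) {
        child.current.associated = false; // disassociated, never reused
        child.current = null;
      }
    }
  }

  enable() {
    if (this.enabled) return;
    this.enabled = true;
    for (const child of this.children) {
      child.current = this.newTrackObject(); // a new object, not the old one
    }
  }
}
```

A script that kept a reference to the old track object across a disable and re-enable cycle would, in this model, be left holding an object that no longer corresponds to any track.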

The LocalMediaStream interface is used when the user agent is generating the stream's data (e.g. from a camera or streaming it from a local video file). It allows authors to control individual tracks during the generation of the content, e.g. to allow the user to temporarily disable a local camera during a video-conference chat.

When a LocalMediaStream object is being generated from a local file (as opposed to a live audio/video source), the user agent should stream the data from the file in real time, not all at once. This reduces the ease with which pages can distinguish live video from pre-recorded video, which can help protect the user's privacy.
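One way to picture "in real time, not all at once" is to release each piece of file data only when its position in the file has been reached on the wall clock. The sketch below is an illustration only, not a description of how any user agent works: `planRelease`, `dueChunks`, and the chunk shape `{ timestampMs, data }` are assumptions made for the example.

```javascript
// Hypothetical pacing helpers; the chunk shape { timestampMs, data } is an
// assumption made for this sketch.

// Map each chunk's position in the file to a wall-clock release time.
function planRelease(chunks, startMs) {
  return chunks.map((chunk) => ({
    data: chunk.data,
    releaseAtMs: startMs + chunk.timestampMs,
  }));
}

// Return the data of every chunk whose release time has been reached.
function dueChunks(plan, nowMs) {
  return plan
    .filter((entry) => entry.releaseAtMs <= nowMs)
    .map((entry) => entry.data);
}
```

With such a plan, a page observing the stream receives data at the same rate it would from a live source, regardless of how quickly the file could be read.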

3.2 Interface definitions

3.2.5 BlobCallback

[Callback=FunctionOnly, NoInterfaceObject]

interface BlobCallback {

void handleEvent (Blob blob);

};

3.2.5.1 Methods

handleEvent- Def TBD

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| blob | Blob | ✘ | ✘ | |

No exceptions.

3.2.6 URL

Note that the following is actually only a partial interface, but ReSpec does not yet support that.

interface URL {

static DOMString createObjectURL (MediaStream stream);

};

3.2.6.1 Methods

createObjectURL-

Mints a Blob URL to refer to the given MediaStream.

When the createObjectURL() method is called with a MediaStream argument, the user agent must return a unique Blob URL for the given MediaStream. [FILE-API]

For audio and video streams, the data exposed on that stream must be in a format supported by the user agent for use in audio and video elements.

A Blob URL is the same as what the File API specification calls a Blob URI, except that anything in the definition of that feature that refers to File and Blob objects is hereby extended to also apply to MediaStream and LocalMediaStream objects.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| stream | MediaStream | ✘ | ✘ | |

No exceptions.
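The idea of minting a URL that refers back to a stream can be sketched with a simple lookup table. This is only an illustration: `mintUrl`, `resolveUrl`, and the `blob:sketch-N` format are invented for the example (the real Blob URL format is defined by the File API specification), and the sketch assumes that every call returns a fresh URL.

```javascript
// Hypothetical registry illustrating "mint a unique URL for a stream".
// The URL format is made up for this sketch.
const registry = new Map();
let nextId = 0;

// Assumption: every call mints a fresh URL, even for the same stream.
function mintUrl(stream) {
  nextId += 1;
  const url = 'blob:sketch-' + nextId;
  registry.set(url, stream);
  return url;
}

// Look up the stream a URL refers to, or null if the URL is unknown.
function resolveUrl(url) {
  return registry.has(url) ? registry.get(url) : null;
}
```

A media element given such a URL would then resolve it back to the stream object rather than fetching bytes over the network.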

3.3 Examples

This sample code exposes a button. When clicked, the button is disabled and the user is prompted to offer a stream. The user can cause the button to be re-enabled by providing a stream (e.g. giving the page access to the local camera) and then disabling the stream (e.g. revoking that access).

<input type="button" value="Start" onclick="start()" id="startBtn">

<script>

var startBtn = document.getElementById('startBtn');

function start() {

navigator.getUserMedia('audio,video', gotStream);

startBtn.disabled = true;

}

function gotStream(stream) {

stream.onended = function () {

startBtn.disabled = false;

}

}

</script>

This example allows people to record a short audio message and upload it to the server. It also shows rudimentary error handling.

<input type="button" value="⚫" onclick="msgRecord()" id="recBtn">

<input type="button" value="◼" onclick="msgStop()" id="stopBtn" disabled>

<p id="status">To start recording, press the ⚫ button.</p>

<script>

var recBtn = document.getElementById('recBtn');

var stopBtn = document.getElementById('stopBtn');

function report(s) {

document.getElementById('status').textContent = s;

}

function msgRecord() {

report('Attempting to access microphone...');

navigator.getUserMedia('audio', gotStream, noStream);

recBtn.disabled = true;

}

var msgStream, msgStreamRecorder;

function gotStream(stream) {

report('Recording... To stop, press the ◼ button.');

msgStream = stream;

msgStreamRecorder = stream.record();

stopBtn.disabled = false;

stream.onended = function () {

msgStop();

}

}

function msgStop() {

report('Creating file...');

stopBtn.disabled = true;

msgStream.onended = null;

msgStream.stop();

msgStreamRecorder.getRecordedData(msgSave);

}

function msgSave(blob) {

report('Uploading file...');

var x = new XMLHttpRequest();

x.open('POST', 'uploadMessage');

x.onload = function () {

report('Done! To record a new message, press the ⚫ button.');

recBtn.disabled = false;

};

x.onerror = function () {

report('Failed to upload message. To try recording a message again, press the ⚫ button.');

recBtn.disabled = false;

};

// Send only after both handlers are attached.

x.send(blob);

}

function noStream() {

report('Could not obtain access to your microphone. To try again, press the ⚫ button.');

recBtn.disabled = false;

}

</script>

This example allows people to take photos of themselves from the local video camera.

<article>

<style scoped>

video { transform: scaleX(-1); }

p { text-align: center; }

</style>

<h1>Snapshot Kiosk</h1>

<section id="splash">

<p id="errorMessage">Loading...</p>

</section>

<section id="app" hidden>

<p><video id="monitor" autoplay></video> <canvas id="photo"></canvas>

<p><input type=button value="📷" onclick="snapshot()">

</section>

<script>

navigator.getUserMedia('video user', gotStream, noStream);

var video = document.getElementById('monitor');

var canvas = document.getElementById('photo');

function gotStream(stream) {

video.src = URL.createObjectURL(stream);

video.onerror = function () {

stream.stop();

};

stream.onended = noStream;

video.onloadedmetadata = function () {

canvas.width = video.videoWidth;

canvas.height = video.videoHeight;

document.getElementById('splash').hidden = true;

document.getElementById('app').hidden = false;

};

}

function noStream() {

document.getElementById('errorMessage').textContent = 'No camera available.';

}

function snapshot() {

canvas.getContext('2d').drawImage(video, 0, 0);

}

</script>

</article>