Abstract

This document defines a set of JavaScript APIs that allow local media,

including audio and video, to be requested from a platform.

Status of This Document

This section describes the status of this document at the time of its publication.

Other documents may supersede this document. A list of current W3C publications and the

latest revision of this technical report can be found in the W3C technical reports index at

http://www.w3.org/TR/.

This document is not complete. It is subject to major changes and, while

early experimentations are encouraged, it is therefore not intended for

implementation. The API is based on preliminary work done in the

WHATWG.

This document was published by the Web Real-Time Communication Working Group and Device APIs Working Group as an Editor's Draft.

If you wish to make comments regarding this document, please send them to public-media-capture@w3.org (subscribe, archives). All comments are welcome.

Publication as an Editor's Draft does not imply endorsement by the W3C

Membership. This is a draft document and may be updated, replaced or obsoleted by other

documents at any time. It is inappropriate to cite this document as other than work in

progress.

This document was produced by a group operating under the 5 February 2004 W3C Patent Policy. W3C maintains a public list of any patent disclosures (Web Real-Time Communication Working Group, Device APIs Working Group) made in connection with the deliverables of the group; that page also includes instructions for disclosing a patent. An individual who has actual knowledge of a patent which the individual believes contains Essential Claim(s) must disclose the information in accordance with section 6 of the W3C Patent Policy.

3. Terminology

- HTML Terms:

-

The EventHandler

interface represents a callback used for event handlers as defined in

[HTML5].

The concepts queue a task and fire a simple event are defined in [HTML5].

The terms event handlers and event handler event types are defined in [HTML5].

- source

-

A source is the "thing" providing the source of a media stream

track. The source is the broadcaster of the media itself. A source can

be a physical webcam, microphone, local video or audio file from the

user's hard drive, network resource, or static image.

Some sources have an identifier which must be unique to the application (un-guessable by

another application) and persistent between application sessions (e.g.,

the identifier for a given source device/application must stay the

same, but not be guessable by another application). Sources that must

have an identifier are camera and microphone sources; local file

sources are not required to have an identifier. Source identifiers let

the application save, identify the availability of, and directly

request specific sources.

Other than the identifier, other bits of source identity are

never directly available to the application until the

user agent connects a source to a track. Once a source has been

"released" to the application (either via a permissions UI,

pre-configured allow-list, or some other release mechanism) the

application will be able to discover additional source-specific

capabilities.

Sources do not have constraints -- tracks

have constraints. When a source is connected to a track, it

must, possibly in combination with UA processing (e.g.,

downsampling), conform to the constraints present on that

track (or set of tracks).

Sources will be released (un-attached) from a track when the track

is ended for any reason.

On the MediaStreamTrack, the source is indicated by the sourceType attribute. The behavior of APIs associated with the source's capabilities and settings changes depending on the source type.

Sources have capabilities and

settings. The capabilities and settings are "owned"

by the source and are common to any (multiple) tracks that happen to be

using the same source (e.g., if two different track objects bound to

the same source ask for the same capability or setting information,

they will get back the same answer).

-

Setting (Source Setting)

-

A setting refers to the immediate, current value of the source's

(optionally constrained) capabilities. Settings are always

read-only.

A source's settings can change dynamically over time due to

environmental conditions, sink configurations, or constraint changes. A

source's settings must always conform to the current set of mandatory constraints that all of the tracks it is bound to have defined, and should conform as closely as possible to the set of optional constraints specified.

Although settings are a property of the source, they are

only exposed to the application through the tracks attached to

the source. The Constrainable interface provides this

exposure.

A conforming user-agent must

support all the setting names defined in this spec.

As represented in this specification, a source is the

realization of a device as presented by the User Agent. Thus,

it is possible that the actual settings of the device may

differ from those presented by the User Agent. As an example,

there are some operating systems and native device APIs that

will treat a camera with a single native capture resolution as

if it can produce any resolution less than that value,

downsampling as necessary. Even though the camera technically

has only one specific width and one specific height it can

support, it is likely that the User Agent will represent this

camera as a source with a range of supported widths and

heights. To enable the application to determine when this has

occurred, tracks provide both

a getSettings() method (which always

returns a setting that satisfies the constraints applied to

the track) and a getNativeSettings()

method (which always returns, to the best of the User Agent's

determination, the actual setting of the native device). Note

that both the track settings and the native settings are

snapshots and can change without application involvement. In

particular, changes in the native settings could cause changes

in the track settings that would result in the latter values

being outside of the constraints and thus causing

overconstrained events for all affected tracks.
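The difference between the two snapshots can be illustrated with a small model. This is a sketch only: `makeSource`, `trackSettings` and `nativeSettings` are hypothetical helpers, not part of this specification, and the downsampling rule (scale to a maximum width while preserving the aspect ratio) is an assumption made for illustration.

```javascript
// Illustrative model only; none of these names are defined by this specification.
function makeSource(nativeWidth, nativeHeight) {
  return { nativeWidth, nativeHeight };
}

// Settings a track might report when the user agent downsamples the native
// frame to respect a maximum width, preserving the aspect ratio (assumption).
function trackSettings(source, maxWidth) {
  if (maxWidth === undefined || maxWidth >= source.nativeWidth) {
    return { width: source.nativeWidth, height: source.nativeHeight };
  }
  const scale = maxWidth / source.nativeWidth;
  return { width: maxWidth, height: Math.round(source.nativeHeight * scale) };
}

// Native settings ignore track constraints and describe the device itself.
function nativeSettings(source) {
  return { width: source.nativeWidth, height: source.nativeHeight };
}

const camera = makeSource(1280, 720);
const constrained = trackSettings(camera, 640);
const native = nativeSettings(camera);
console.log(constrained, native);
```

In this model the track reports a constrained size while the native settings still describe the single resolution the device actually captures.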

-

Capabilities

-

Source capabilities are the intrinsic "features" of a

source object. For each source setting, there is a

corresponding capability that describes whether it is supported by the source and, if so, what the range of supported values is. As with settings, capabilities are exposed to the

application via the Constrainable interface.

The values of the supported capabilities must be normalized to the

ranges and enumerated types defined in this specification.

A getCapabilities() call on a track returns the same

underlying per-source capabilities for all tracks connected to

the source.

Source capabilities are effectively constant. Applications should be

able to depend on a specific source having the same capabilities for

any session.

-

Constraints

-

Constraints are an optional track feature for restricting

the range of allowed variability on a source. Without provided

track constraints, implementations are free to select a

source's settings from the full ranges of its supported

capabilities, and to adjust those settings at any time for any

reason.

Constraints are exposed on tracks via

the Constrainable interface, which includes an API for

dynamically changing constraints. Note

that getUserMedia() also permits an initial set of

constraints to be applied when the track is first

obtained.

It is possible for two tracks that share a unique source to

apply contradictory constraints. The Constrainable

interface supports the calling of an error handler when the

conflicting constraint is requested. After successful

application of constraints on a track (and its associated

source), if at any later time the track

becomes overconstrained, the user agent MUST change the

track to the muted state.

A correspondingly-named constraint exists for each

corresponding source setting name and capability name. In

general, user agents will have more flexibility to optimize

the media streaming experience the fewer constraints are

applied, so application authors are strongly encouraged to use

mandatory constraints sparingly.

RTCPeerConnection

RTCPeerConnection is defined in

[WEBRTC10].

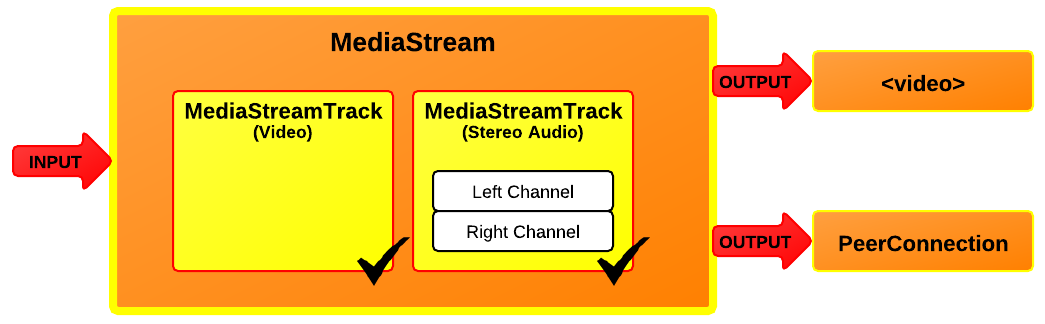

4. MediaStream API

4.1 Introduction

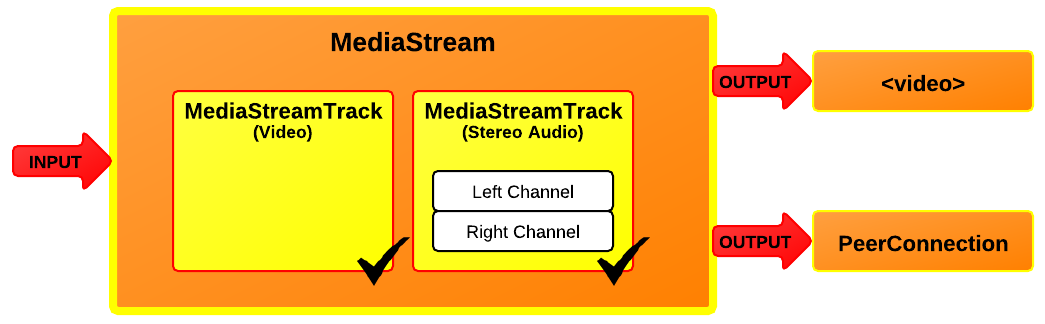

The MediaStreamMediaStream

Each MediaStream

Each track in a MediaStream object has a corresponding

MediaStreamTrack

A MediaStreamTrack

A channel is the smallest unit considered in this API

specification.

A MediaStreamMediaStreamgetUserMedia() call (which is

described later in this document), for instance, might take its input

from the user's local camera. The output of the object controls how the

object is used, e.g., what is saved if the object is written to a file or

what is displayed if the object is used in a video

element.

Each track in a MediaStream

A MediaStream

The output of a MediaStream

A new MediaStreamMediaStream() constructor. The constructor

argument can either be an existing MediaStreamMediaStreamMediaStreamTrack

Both MediaStreamMediaStreamTrackMediaStream

When a MediaStreamMediaStream object is also used in contexts outside

getUserMedia, such as [WEBRTC10]. In both cases, ensuring

a realtime stream reduces the ease with which pages can distinguish live

video from pre-recorded video, which can help protect the user's

privacy.

A MediaStreamTrackMediaStreamTrackgetUserMedia()

.

A script can indicate that a track no longer needs its source with the

MediaStreamTrack.stop() method.

When all tracks using a source have been stopped, the given permission

for that source is revoked and the source is stopped. If the data is being generated from a

live source (e.g., a microphone or camera), then the user agent SHOULD

remove any active "on-air" indicator for that source. If the data is

being generated from a prerecorded source (e.g. a video file), any

remaining content in the file is ignored. An implementation may use a per

source reference count to keep track of source usage, but the specifics

are out of scope for this specification.
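One way to picture the per-source reference count mentioned above is the following sketch. The `SourceModel` class and its members are hypothetical; the specification leaves the bookkeeping to implementations.

```javascript
// Hypothetical model of a per-source reference count; not a specified API.
class SourceModel {
  constructor() {
    this.trackCount = 0;   // tracks currently using this source
    this.permitted = true; // permission previously granted for this source
    this.stopped = false;
  }

  attachTrack() {
    if (this.stopped) throw new Error("source has been stopped");
    this.trackCount += 1;
  }

  // Called when a track that uses this source is stopped.
  detachTrack() {
    if (this.trackCount === 0) return;
    this.trackCount -= 1;
    if (this.trackCount === 0) {
      // Last track gone: revoke the permission and stop the source.
      this.permitted = false;
      this.stopped = true;
    }
  }
}

const microphone = new SourceModel();
microphone.attachTrack();
microphone.attachTrack();
microphone.detachTrack();
const stoppedAfterFirst = microphone.stopped;
microphone.detachTrack();
const stoppedAfterSecond = microphone.stopped;
console.log(stoppedAfterFirst, stoppedAfterSecond);
```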

A MediaStreamTrack is in one of three states: new, live and ended.

A track begins as new prior to being connected to an

active source.

Once connected, the started event fires and

the track becomes live. In the live state,

the track is active and media is available for rendering at a

MediaStream

A muted or disabled MediaStreamTrackMediaStreamTrackMediaStream

The muted/unmuted state of a track reflects if the source provides

any media at this moment. The enabled/disabled state is under

application control and determines if the track outputs media (to its

consumers). Hence, media from the source only flows when a

MediaStreamTrack

A MediaStreamTrackMediaStreamRTCPeerConnection [WEBRTC10], is muted if the

application on the other side disables the corresponding track in the

MediaStream being sent.

Applications are able to enable or

disable a MediaStreamTrack

For a newly created MediaStreamTrack

A MediaStreamTrack

When a MediaStreamTrackstop() method being invoked on

the MediaStreamTrack

-

If the track's readyState attribute

has the value ended already, then abort these steps.

(The stop()

method was probably called just before the track stopped for other

reasons.)

-

Set track's readyState attribute

to ended.

-

Fire a simple event named ended at the object.

If the end of the stream was reached due to a user request, the

event source for this event is the user interaction event source.
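The life cycle and the ending steps above can be sketched as a small state machine. `TrackStateModel` is a hypothetical stand-in for a track; recording event names in an array replaces real event dispatch.

```javascript
// Hypothetical model of the readyState life cycle: new -> live -> ended.
class TrackStateModel {
  constructor() {
    this.readyState = "new";
    this.firedEvents = []; // stand-in for dispatching simple events
  }

  // Connecting to an active source moves a new track to "live".
  connect() {
    if (this.readyState !== "new") return;
    this.readyState = "live";
    this.firedEvents.push("started");
  }

  // Mirrors the ending steps: abort if already ended, otherwise set the
  // state and fire "ended" exactly once.
  end() {
    if (this.readyState === "ended") return;
    this.readyState = "ended";
    this.firedEvents.push("ended");
  }
}

const track = new TrackStateModel();
track.connect();
track.end();
track.end(); // second call is a no-op
console.log(track.readyState, track.firedEvents);
```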

4.3.2 Tracks and Constraints

Constraints are set on tracks and may affect sources.

Whether ConstraintsConstrainable Interface allow the retrieval

and manipulation of the constraints currently established on a

track.

Each track maintains an internal version of the

Constraintsconstraints attribute, and may be modified by the

applyConstraints() method.

When applyConstraints() is called, a user agent

MUST queue a task to evaluate

those changes when the task queue is next serviced. Similarly, if the

sourceType

changes, then the user agent MUST perform the same actions to

re-evaluate the constraints of each track affected by that source

change.

If the MediaError

4.3.3 Interface Definition

MediaStreamTrack implements Constrainable;

interface MediaStreamTrack : EventTarget {

readonly attribute DOMString kind;

readonly attribute DOMString id;

readonly attribute DOMString label;

attribute boolean enabled;

readonly attribute boolean muted;

attribute EventHandler onmute;

attribute EventHandler onunmute;

readonly attribute boolean _readonly;

readonly attribute boolean remote;

readonly attribute MediaStreamTrackState readyState;

attribute EventHandler onstarted;

attribute EventHandler onended;

Settings getNativeSettings ();

MediaStreamTrack clone ();

void stop ();

};

4.3.3.1 Attributes

enabled of type boolean, -

The MediaStreamTrack.enabled

attribute, on getting, MUST return the last value to which it was

set. On setting, it MUST be set to the new value, and then, if the

MediaStreamTrack

Note

Thus, after a MediaStreamTrackenabled attribute still

changes value when set; it just doesn't do anything with that new

value.

id of type DOMString, readonly -

Unless a MediaStreamTrackid attribute to

that string.

An example of an algorithm that specifies how the track id must

be initialized is the algorithm to represent an incoming network

component with a MediaStreamTrack

MediaStreamTrack.id

attribute MUST return the value to which it was initialized when

the object was created.

kind of type DOMString, readonly -

The MediaStreamTrack.kind

attribute MUST return the string "audio" if the object

represents an audio track or "video" if object

represents a video track.

label of type DOMString, readonly -

User agents MAY label audio and video sources (e.g., "Internal

microphone" or "External USB Webcam"). The MediaStreamTrack.label

attribute MUST return the label of the object's corresponding

track, if any. If the corresponding track has or had no label, the

attribute MUST instead return the empty string.

Note

Thus the kind and label attributes do not

change value, even if the MediaStreamTrack

muted of type boolean, readonly -

The MediaStreamTrack.muted

attribute MUST return true if the track is muted, and false otherwise.

onended of type EventHandler, -

This event handler, of type ended, MUST be supported by all objects implementing the MediaStreamTrack interface.

onmute of type EventHandler, -

This event handler, of type mute, MUST be supported by all objects implementing the MediaStreamTrack interface.

onstarted of type EventHandler, -

This event handler, of type started, MUST be supported by all objects implementing the MediaStreamTrack interface.

onunmute of type EventHandler, -

This event handler, of type unmute, MUST be supported by all objects implementing the MediaStreamTrack interface.

readonly of type boolean, readonly -

If the track (audio or video) is backed by a read-only source such as a file, or the track source is a local microphone or camera but is shared so that constraints applied to the track cannot modify the source's state, the readonly attribute MUST return the value true. Otherwise, it must return the value false.

readyState of type MediaStreamTrackState, readonly -

The readyState

attribute represents the state of the track. It MUST return the

value to which the user agent last set it.

remote of type boolean, readonly -

If the track is sourced by an RTCPeerConnection, the remote attribute MUST return the value true. Otherwise, it must return the value false.

4.3.3.2 Methods

clone-

Clones the given MediaStreamTrack

When the MediaStreamTrack.clone()

method is invoked, the user agent MUST run the following steps:

-

Let trackClone be a newly constructed

MediaStreamTrack

-

Initialize trackClone's id attribute to a newly

generated value.

-

Let trackClone inherit this track's underlying

source, kind, label and

enabled

attributes, as well as its currently active constraints.

-

Return trackClone.

No parameters.
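The cloning steps above can be sketched with plain objects. `generateTrackId` and `cloneTrackModel` are hypothetical names, and copying the constraints object (rather than sharing it) is an assumption of this sketch.

```javascript
// Hypothetical id generator; the steps only require a newly generated value.
let trackCounter = 0;
function generateTrackId() {
  trackCounter += 1;
  return "track-" + trackCounter;
}

function cloneTrackModel(track) {
  return {
    id: generateTrackId(),  // new id for the clone
    source: track.source,   // same underlying source
    kind: track.kind,
    label: track.label,
    enabled: track.enabled,
    // Copy of the currently active constraints (assumed to be plain data).
    constraints: JSON.parse(JSON.stringify(track.constraints)),
  };
}

const original = {
  id: generateTrackId(),
  source: { name: "camera-0" },
  kind: "video",
  label: "External USB Webcam",
  enabled: false,
  constraints: { mandatory: { width: 640 } },
};
const trackClone = cloneTrackModel(original);
console.log(trackClone.id, trackClone.kind, trackClone.enabled);
```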

getNativeSettings-

The getNativeSettings() method returns the native settings of all the properties of the object. Note that the actual setting of a property must be a single value. Unlike the return value from the getSettings() method, this return object a) MUST reflect, to the best of the User Agent's ability, the actual native settings of the source device, b) MAY have values that do not match the current composite set of constraints applied by all tracks associated with this source, only to the extent necessary to reflect the native settings of the source device, and c) MUST be the same for all tracks associated with this same source.

No parameters.

stop-

When a MediaStreamTrack object's stop() method is invoked, the user agent MUST run the following steps:

-

Let track be the current

MediaStreamTrack

-

If track has no source attached

(sourceType is "none") or if the source is

provided by an RTCPeerConnection, then abort these

steps.

-

Set track's readyState

attribute to ended.

-

Detach track's source.

If no other MediaStreamTrack

The task source for the tasks

queued for the stop() method is the DOM

manipulation task source.

No parameters.

Return type: void

enum MediaStreamTrackState {

"new",

"live",

"ended"

};

| Value | Enumeration description |
|---|---|
| new | The track type is new and has not been initialized (connected to a source of any kind). This state implies that the track's label will be the empty string. |
| live | The track is active (the track's underlying media source is making a best-effort attempt to provide data in real time). The output of a track in the live state can be switched on and off with the enabled attribute. |
| ended | The track has ended (the track's underlying media source is no longer providing data, and will never provide more data for this track). Once a track enters this state, it never exits it. For example, a video track in a MediaStream |

4.3.4 Track Source Types

enum SourceTypeEnum {

"none",

"camera",

"microphone"

};

| Value | Enumeration description |
|---|---|
| none | This track has no source. This is the case when the track is in the "new" or "ended" readyState. |
| camera | A valid source type only for video MediaStreamTrack objects. |
| microphone | A valid source type only for audio MediaStreamTrack objects. |
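The pairing of source types with track kinds described in the table can be checked with a short helper. `isValidSourceType` is a hypothetical function used only for illustration.

```javascript
// SourceTypeEnum values mapped to the only track kind each is valid for.
const KIND_FOR_SOURCE_TYPE = new Map([
  ["camera", "video"],
  ["microphone", "audio"],
]);

// Hypothetical helper: is this sourceType allowed for a track of this kind?
function isValidSourceType(kind, sourceType) {
  if (sourceType === "none") return true; // a track of either kind may have no source
  return KIND_FOR_SOURCE_TYPE.get(sourceType) === kind;
}

console.log(isValidSourceType("video", "camera"));
console.log(isValidSourceType("audio", "camera"));
```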

5. The model: sources, sinks, constraints, and states

Browsers provide a media pipeline from sources to sinks. In a browser,

sinks are the <img>, <video> and <audio> tags.

Traditional sources include streamed content, files,

and web resources. The media produced by these sources typically does not

change over time - these sources can be considered to be static.

The sinks that display these sources to the user (the actual tags

themselves) have a variety of controls for manipulating the source content.

For example, an <img> tag scales down a huge source image of

1600x1200 pixels to fit in a rectangle defined with

width="400" and height="300".

The getUserMedia API adds dynamic sources such as microphones and

cameras - the characteristics of these sources can change in response to

application needs. These sources can be considered to be dynamic in nature.

A <video> element that displays media from a dynamic source can

either perform scaling or it can feed back information along the media

pipeline and have the source produce content more suitable for display.

Note

This sort of feedback loop only enables an optimization, but the gain is non-trivial: it can, for example, save battery power and reduce network congestion.

Note that MediaStream sinks (such as

<video>, <audio>, and even

RTCPeerConnection) will continue to have mechanisms to further

transform the source stream beyond that which the Settings,

Capabilities, and Constraints described in this specification

offer. (The sink transformation options, including those of

RTCPeerConnection, are outside the scope of this

specification.)

The act of changing or applying a track constraint may affect the

settings of all tracks sharing that source and

consequently all down-level sinks that are using that source. Many sinks

may be able to take these changes in stride, such as the

<video> element or RTCPeerConnection.

Others like the Recorder API may fail as a result of a source setting

change.

The RTCPeerConnection is an interesting object because it

acts simultaneously as both a sink and a source for

over-the-network streams. As a sink, it has source transformational

capabilities (e.g., lowering bit-rates, scaling-up or down resolutions,

adjusting frame-rates), and as a source it could have its own states

changed by a track source (though in this specification sources with the

remote attribute set to true do not consider the

current constraints applied to a track).

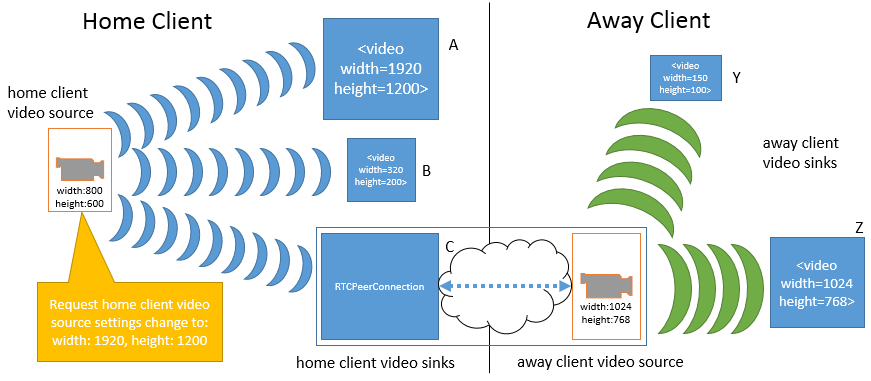

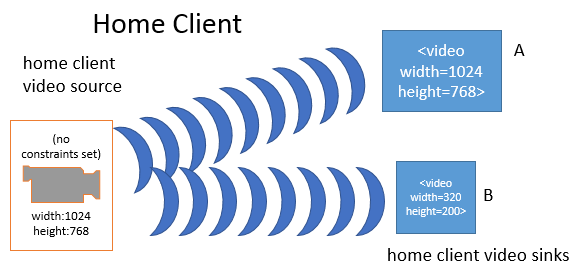

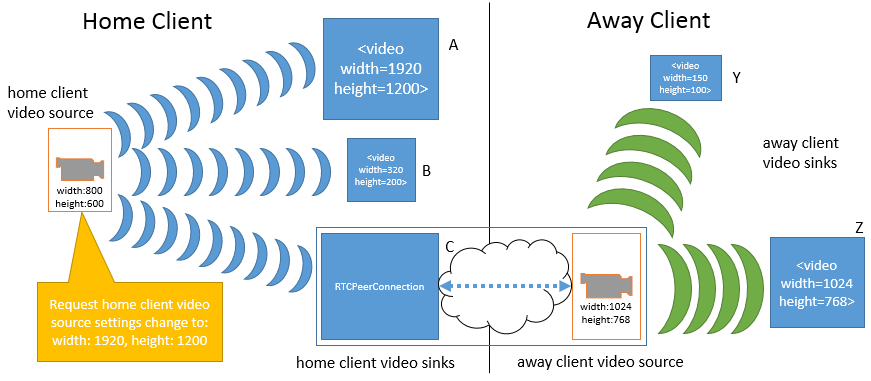

To illustrate how changes to a given source impact various

sinks, consider the following example. This example only uses

width and height, but the same principles apply to any of

the Settings exposed in this specification. In the first

figure a home client has obtained a video source from its local

video camera. The source's width and height settings are 800 pixels

by 600 pixels, respectively. Three MediaStream objects have tracks that use this same sourceId. The three media streams are connected to

three different sinks: a <video> element (A), another

<video> element (B), and a peer connection (C). The peer

connection is streaming the source video to an away client. On the away

client there are two media streams with tracks that use the peer connection

as a source. These two media streams are connected to two

<video> element sinks (Y and Z).

Note that at this moment, all of the sinks on the home client must apply

a transformation to the original source's provided dimension settings. A is

scaling the video up (resulting in loss of quality), B is scaling the video

down, and C is also scaling the video up slightly for sending over the

network. On the away client, sink Y is scaling the video way down, while

sink Z is not applying any scaling.

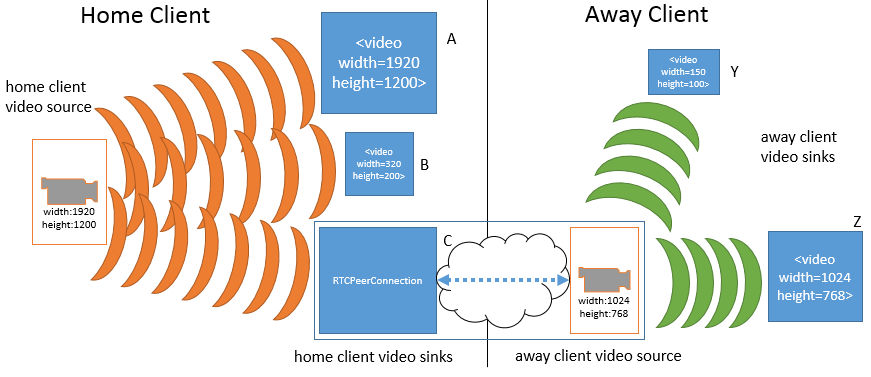

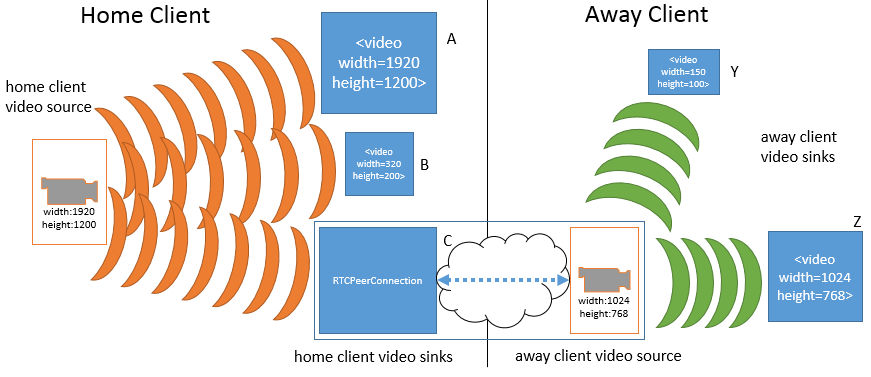

Using the Constrainable interface, one of the tracks

requests a higher resolution (1920 by 1200 pixels) from the home

client's video source.

Note that the source change immediately affects all of the

tracks and sinks on the home client, but does not impact any of

the sinks (or sources) on the away client. With the increase in

the home client source video's dimensions, sink A no longer has to

perform any scaling, while sink B must scale down even further

than before. Sink C (the peer connection) must now scale down the

video in order to keep the transmission constant to the away

client.

While not shown, an equally valid settings change request could be made

of the away client video source (the peer connection on the away client's

side). This would not only impact sink Y and Z in the same manner as

before, but could lead to re-negotiation with the peer connection on the

home client in order to alter the transformation that it is applying to the

home client's video source. Such a change is NOT REQUIRED to change

anything related to sink A or B or the home client's video source.

Note that this specification does not define a mechanism by which a

change to the away client's video source could automatically trigger a

change to the home client's video source. Implementations may choose to

make such source-to-sink optimizations as long as they only do so within

the constraints established by the application, as the next example

demonstrates.

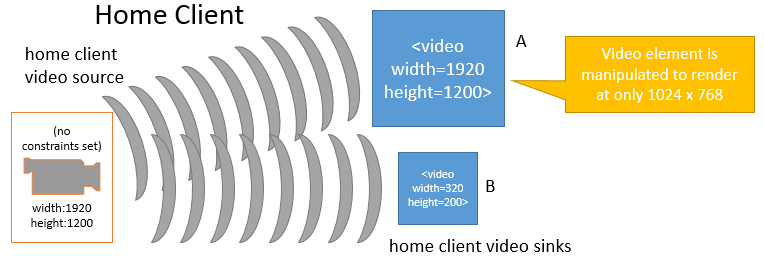

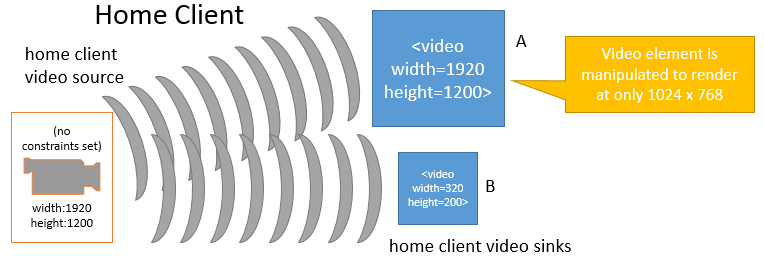

It is fairly obvious that changes to a given source will impact sink

consumers. However, in some situations changes to a given sink may also be

cause for implementations to adjust a source's settings.

This is illustrated in the following figures. In the first figure

below, the home client's video source is sending a video stream sized at

1920 by 1200 pixels. The video source is also unconstrained, such that the

exact source dimensions are flexible as far as the application is

concerned. Two MediaStream objects have tracks that use this same sourceId, and those MediaStream objects are connected to two different <video> element sinks A and B. Sink A has been sized to

width="1920" and height="1200" and is displaying

the source's video content without any transformations. Sink B has been

sized smaller and, as a result, is scaling the video down to fit its

rectangle of 320 pixels across by 200 pixels down.

When the application changes sink A to a smaller dimension (from 1920 to

1024 pixels wide and from 1200 to 768 pixels tall), the browser's media

pipeline may recognize that none of its sinks require the higher source

resolution, and needless work is being done both on the part of the source

and on sink A. In such a case and without any other constraints forcing the

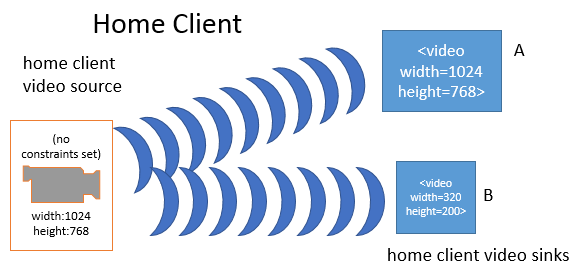

source to continue producing the higher resolution video, the media

pipeline MAY change the source resolution:

In the above figure, the home client's video source resolution was

changed to the greater of that from sinkA and from sinkB in order to

optimize playback. While not shown above, the same behavior could apply to

peer connections and other sinks.
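The choice of the new source resolution in this example can be sketched as taking the largest width and height requested by any sink. `largestSinkSize` is a hypothetical helper; a real user agent may weigh other factors, and this optimization is optional.

```javascript
// Hypothetical helper: the smallest source size that still serves every sink
// without upscaling, i.e. the per-dimension maximum over all sinks.
function largestSinkSize(sinks) {
  return sinks.reduce(
    (best, sink) => ({
      width: Math.max(best.width, sink.width),
      height: Math.max(best.height, sink.height),
    }),
    { width: 0, height: 0 }
  );
}

const sinkA = { width: 1024, height: 768 }; // after being resized
const sinkB = { width: 320, height: 200 };
const newSourceSize = largestSinkSize([sinkA, sinkB]);
console.log(newSourceSize);
```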

It is possible that constraints can be applied to a

track which a source is unable to satisfy, either because the

source itself cannot satisfy the constraint or because the source

is already satisfying a conflicting constraint. When this happens,

the applyConstraints() call will fail and call the

user-provided ConstraintErrorCallback, without applying any

of the new constraints. Since no change in constraints occurs in

this case, there is also no required change to the source itself

as a result of this condition. Here is an example of this

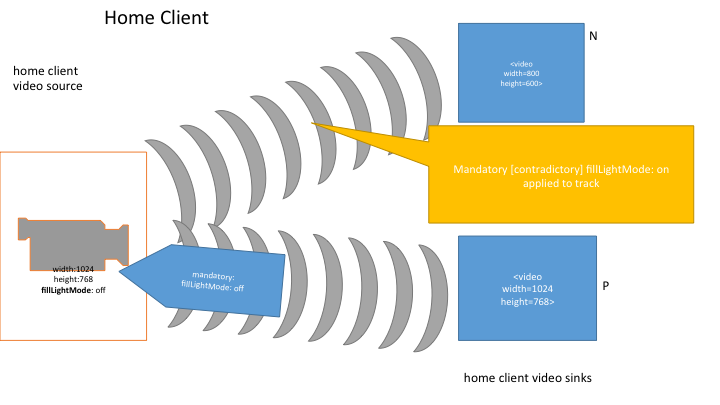

behavior.

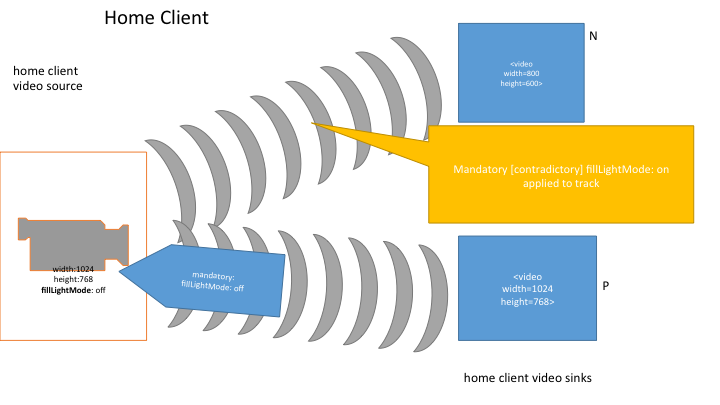

In this example, two media streams each have a video track that

share the same source. The first track initially has no

constraints applied. It is connected to sink N. Sink N has a

width and height of 800 by 600 pixels and is scaling down the

source's resolution of 1024 by 768 to fit. The other track has a

mandatory constraint forcing off the source's fill light; it is

connected to sink P. Sink P has a width and height equal to that

of the source.

Now, the first track adds a mandatory constraint that the fill

light should be forced on. At this point, both mandatory

constraints cannot be satisfied by the source (the fill light cannot be on and off at the same time). Since this

state was caused by the first track's attempt to apply a

conflicting constraint, the constraint application fails and there

is no change in the source's settings or the constraints on either

track.
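The failed application in this example can be modelled as follows. Everything here is hypothetical: `applyMandatory` is not the specified applyConstraints() signature, `fillLight` is an illustrative constraint name, and the error name is assumed to be ConstraintNotSatisfiedError.

```javascript
// Hypothetical model: a conflicting mandatory constraint is rejected and
// neither the source settings nor any track's constraints change.
function applyMandatory(track, requested, onSuccess, onError) {
  const has = (obj, key) => Object.prototype.hasOwnProperty.call(obj, key);
  for (const other of track.source.tracks) {
    if (other === track) continue;
    for (const name of Object.keys(requested)) {
      if (has(other.mandatory, name) && other.mandatory[name] !== requested[name]) {
        // Conflict: report the offending constraint and change nothing.
        onError({ name: "ConstraintNotSatisfiedError", message: null, constraintName: name });
        return;
      }
    }
  }
  Object.assign(track.mandatory, requested);
  Object.assign(track.source.settings, requested);
  onSuccess();
}

const source = { settings: { fillLight: false }, tracks: [] };
const firstTrack = { source, mandatory: {} };
const secondTrack = { source, mandatory: { fillLight: false } };
source.tracks.push(firstTrack, secondTrack);

let outcome = null;
applyMandatory(
  firstTrack,
  { fillLight: true },
  () => { outcome = "success"; },
  (error) => { outcome = "error: " + error.constraintName; }
);
console.log(outcome, source.settings.fillLight);
```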

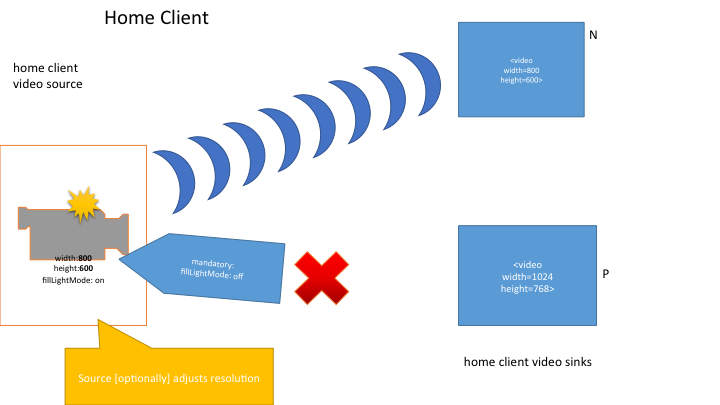

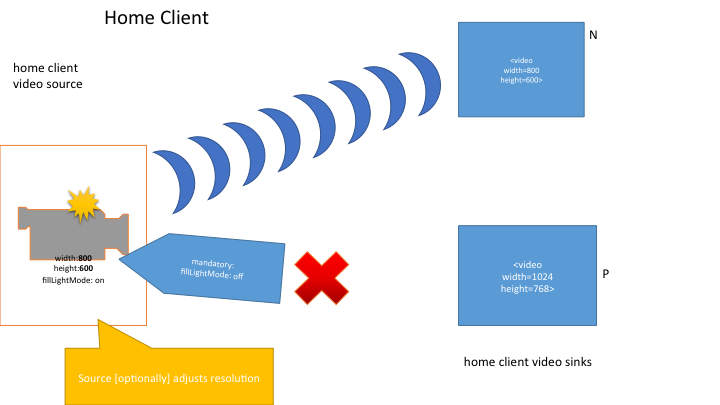

Let's look at a slightly different situation starting from the

same point. In this case, instead of the first track attempting

to apply a conflicting constraint, the user physically locks the

camera into a mode where the fill light is on. At this point the

source can no longer satisfy the second track's mandatory

constraint that the fill light be off. The second track is

transitioned into the muted state and receives

an overconstrained event. At the same time, the source

notes that its remaining active sink only requires a resolution of

800 by 600 and so it adjusts its resolution down to match (this is

an optional optimization that the user agent is allowed to make

given the situation).

At this point, it is the responsibility of the application to

address the problem that led to the overconstrained situation,

perhaps by removing the fill light mandatory constraint on the

second track or by closing the second track altogether and

informing the user.
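The overconstrained situation can be modelled in the same style. `sourceSettingChanged` is a hypothetical function, `fillLight` is an illustrative constraint name, and pushing a string onto an array stands in for firing the overconstrained event.

```javascript
// Hypothetical model of a source setting changing outside application control.
function sourceSettingChanged(source, name, value) {
  source.settings[name] = value;
  for (const track of source.tracks) {
    const required = track.mandatory[name];
    if (required !== undefined && required !== value) {
      track.muted = true;                   // the track can no longer be satisfied
      track.events.push("overconstrained"); // stand-in for firing the event
    }
  }
}

const camera = { settings: { fillLight: false }, tracks: [] };
const trackOne = { mandatory: {}, muted: false, events: [] };
const trackTwo = { mandatory: { fillLight: false }, muted: false, events: [] };
camera.tracks.push(trackOne, trackTwo);

// The user physically locks the fill light on.
sourceSettingChanged(camera, "fillLight", true);
console.log(trackOne.muted, trackTwo.muted, trackTwo.events);
```

An application reacting to this state might remove the conflicting mandatory constraint or stop the affected track, as described above.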

7. Error Handling

All errors defined in this specification implement the following

interface:

[NoInterfaceObject]

interface MediaError {

readonly attribute DOMString name;

readonly attribute DOMString? message;

readonly attribute DOMString? constraintName;

};

7.1 Attributes

constraintName of type DOMString, readonly , nullable-

This attribute is only used for some types of errors. For a MediaError named ConstraintNotSatisfiedError, this attribute MUST be set to the name of the constraint that caused the error.

message of type DOMString, readonly , nullable-

A UA-dependent string offering extra human-readable information about the error.

name of type DOMString, readonly -

The name of the error.

Note

Open Issue: We may make MediaError inherit from DOMError once the

definition of DOMError is stable.

Note

Open Issue: Do we want to allow the constraintName attribute to contain

multiple constraint names? In many cases the error is raised as soon as a

single unsatisfied mandatory constraint is found, but in others it may be

possible to determine that multiple constraints are not satisfied.
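An error callback might inspect these three attributes as in the sketch below. `describeMediaError` is a hypothetical helper, the objects are plain stand-ins for MediaError instances, and the name values shown are assumptions for illustration.

```javascript
// Hypothetical helper that turns a MediaError-shaped object into a log line.
function describeMediaError(error) {
  const detail = error.message ? ": " + error.message : "";
  if (error.name === "ConstraintNotSatisfiedError" && error.constraintName) {
    return "Constraint '" + error.constraintName + "' could not be satisfied" + detail;
  }
  // constraintName is null for errors unrelated to a specific constraint.
  return error.name + detail;
}

const constraintError = {
  name: "ConstraintNotSatisfiedError",
  message: null,
  constraintName: "width",
};
const otherError = {
  name: "SomeOtherError", // placeholder name, not defined by this specification
  message: "device unavailable",
  constraintName: null,
};
console.log(describeMediaError(constraintError));
console.log(describeMediaError(otherError));
```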

The following interface is defined for cases when a MediaError is raised

as an event:

dictionary MediaErrorEventInit : EventInit {

MediaError error;

};

[ Constructor (DOMString type, MediaErrorEventInit eventInitDict)]

interface MediaErrorEvent : Event {

readonly attribute MediaError error;

};

7.2 Constructors

MediaErrorEvent- TODO

7.3 Attributes

error of type MediaError, readonly - TODO

9. Enumerating Local Media Devices

This section describes an API that the script can use to query the user

agent about connected media input and output devices.

9.2 Device Info

callback MediaDeviceInfoCallback = void (sequence<MediaDeviceInfo> deviceInfoList);

Note

The old SourceInfo dictionary used to refer to the MediaSourceStates

dictionary. That dictionary is no longer available when Constrainable

is introduced. When Constrainable lands, we should see if we can align

deviceId, kind and label, below, with the new definitions of source

capabilities.

dictionary MediaDeviceInfo {

DOMString deviceId;

MediaDeviceKind kind;

DOMString label;

DOMString groupId;

};

enum MediaDeviceKind {

"audioinput",

"audiooutput",

"videoinput"

};

| Value | Enumeration description |
|---|---|
| audioinput | Represents an audio input device; for example a microphone. |
| audiooutput | Represents an audio output device; for example a pair of headphones. |
| videoinput | Represents a video input device; for example a webcam. |
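A script receiving a list of MediaDeviceInfo dictionaries might filter it by kind, as sketched here. The sample data and the `listInputDevices` helper are hypothetical; the helper is something a MediaDeviceInfoCallback could call with the list it receives.

```javascript
// Hypothetical sample data in the shape of the MediaDeviceInfo dictionary.
const sampleDevices = [
  { deviceId: "a1", kind: "audioinput", label: "Internal microphone", groupId: "g1" },
  { deviceId: "b2", kind: "videoinput", label: "External USB Webcam", groupId: "g2" },
  { deviceId: "c3", kind: "audiooutput", label: "", groupId: "g1" },
];

// Keep only input devices and format them for display.
function listInputDevices(deviceInfoList) {
  return deviceInfoList
    .filter((d) => d.kind === "audioinput" || d.kind === "videoinput")
    .map((d) => d.kind + ": " + (d.label || "(no label)"));
}

const inputs = listInputDevices(sampleDevices);
console.log(inputs);
```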

10. Obtaining local multimedia content

11. Constrainable Interface

The Constrainable interface allows its consumers to inspect and adjust

the properties of the object that implements it. It is broken out as a

separate interface so that it can be used in other specifications. The core

concept is that of a Capability, which consists of a property or feature of

an object and the set of its possible values, which may be specified either

as a range or as an enumeration. For example, a camera might be capable of

framerates (a property) between 20 and 50 frames per second (a range) and

may be able to be positioned (a property) facing towards the user, away

from the user, or to the left or right of the user (an enumerated set). The

application can examine a Constrainable object's set of Capabilities via

the getCapabilities() accessor.
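The two forms of Capability, a range and an enumeration, can be represented as in the sketch below. The property names `frameRate` and `facingMode`, the enumerated values, and the `isSupported` helper are assumptions for illustration and not the return format of getCapabilities().

```javascript
// Hypothetical capability set for the camera described above.
const cameraCapabilities = {
  frameRate: { min: 20, max: 50 },                     // a range
  facingMode: ["user", "environment", "left", "right"], // an enumerated set
};

// Hypothetical helper: does a capability admit the given value?
function isSupported(capability, value) {
  if (Array.isArray(capability)) return capability.includes(value);
  return value >= capability.min && value <= capability.max;
}

console.log(isSupported(cameraCapabilities.frameRate, 30));
console.log(isSupported(cameraCapabilities.frameRate, 60));
console.log(isSupported(cameraCapabilities.facingMode, "user"));
```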

The application can select the (range of) values it wants for an

object's Capabilities by means of one or more ConstraintSets and the

applyConstraints() method. A ConstraintSet consists of the

names of one or more properties of the object plus the desired value (or a

range of desired values) for each of them. Each of those property/value

pairs can be considered to be an individual constraint. For example, the

application may set a ConstraintSet containing two constraints, the first

stating that the framerate of a camera be between 30 and 40 frames per

second (a range) and the second that the camera should be facing the user

(a specific value). ConstraintSets can be mandatory or optional. In the

case of optional ConstraintSets, the UA will consider the ConstraintSets in

the order in which they are specified, and will try to satisfy each one,

but will ignore a ConstraintSet if it cannot satisfy it. In the case of a

mandatory ConstraintSet, the UA will try to satisfy it, and will call the

errorCallback if it cannot do so. For example, suppose that an

application applies three individual constraints, one stating that the

video aspect ratio should be 3 to 2 (height to width), the next that the

height should be 600 and the last that the width should be 500. Since these

constraints interact with each other (the aspect ratio affects the possible

values for height and width, and vice-versa) it is impossible to satisfy

all three of them, so if they are all contained in a mandatory

ConstraintSet, the UA will call the errorCallback. However if

any one of the constraints is placed in an optional ConstraintSet, the

other two can be satisfied, so the UA will satisfy the two mandatory ones,

silently ignore the optional one, and call the

successCallback.

The ordering of optional ConstraintSets is significant. In the example

in the previous paragraph, suppose that aspect ratio constraint is part of

a mandatory ConstraintSet and that the height and width constraints are

part of separate optional ConstraintSets. If the height ConstraintSet is

specified first (and the other constraints in the ConstraintSet can also be

satisfied), then it will be satisfied and the width ConstraintSet will be

ignored. Thus the height will be set to 600 and the width will be set

to 400. On the other hand, if width is specified before height, the width

ConstraintSet will be satisfied and the height ConstraintSet will be

ignored, resulting in width of 500 and height of 750. (Note that the

mandatory aspect ratio constraint is enforced in both cases.) The UA will

attempt to satisfy as many optional ConstraintSets as it can, even if some

of them cannot be satisfied and must therefore be ignored. Application

authors can therefore implement a backoff strategy by specifying multiple

optional ConstraintSets for the same property. For example, an application

might specify three optional ConstraintSets, the first asking for a

framerate greater than 500, the second asking for a framerate greater than

400, and the third asking for one greater than 300. If the UA is capable of

setting a framerate greater than 500, it will (and the subsequent two

ConstraintSets will be trivially satisfied). However, if the UA cannot set

the framerate above 500, it will ignore that ConstraintSet and attempt to

set the framerate above 400. If that fails, it will then try to set it

above 300. If the UA cannot satisfy any of the three ConstraintSets, it

will set the framerate to any value it can get. If the developer wanted to

insist on 300 as a lower bound, they could put that constraint in a mandatory ConstraintSet.

In that case, the UA would fail altogether if it couldn't get a value over

300, but would choose a value over 500 if possible, then try for a value

over 400. An application may inspect the set of ConstraintSets currently in

effect via the getConstraints() accessor.
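The effect of ordering in the aspect ratio example above can be sketched as follows. This is a simplified model, not the normative algorithm: it assumes the source can produce any size, treats the mandatory ratio as height divided by width (as in the prose above), and uses the hypothetical helper name resolveSize.

```javascript
// heightOverWidth is the mandatory ratio (3 to 2, height to width, is 1.5).
// optionalSets is an ordered list such as [{ height: 600 }, { width: 500 }].
function resolveSize(heightOverWidth, optionalSets) {
  var size = {};
  optionalSets.forEach(function (set) {
    if (size.width !== undefined) {
      // One dimension is already fixed and the mandatory ratio determines
      // the other, so later height or width sets cannot change the result.
      return;
    }
    if (set.height !== undefined) {
      size.height = set.height;
      size.width = set.height / heightOverWidth;
    } else if (set.width !== undefined) {
      size.width = set.width;
      size.height = set.width * heightOverWidth;
    }
  });
  return size;
}

var heightFirst = resolveSize(1.5, [{ height: 600 }, { width: 500 }]);
var widthFirst = resolveSize(1.5, [{ width: 500 }, { height: 600 }]);
console.log(heightFirst.height, heightFirst.width); // 600 400
console.log(widthFirst.height, widthFirst.width);   // 750 500
```

In both orders the mandatory ratio holds; only the optional ConstraintSet that comes first is honoured.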

The specific value that the UA chooses for a Capability is referred to

as a Setting. For example, if the application applies a ConstraintSet

specifying that the framerate must be at least 30 frames per second, and no

greater than 40, the Setting can be any intermediate value, e.g., 32, 35,

or 37 frames per second. The application can query the current settings of

the object's Capabilities via the getSettings()

accessor.

11.1 Interface Definition

[NoInterfaceObject]

interface Constrainable {

Capabilities getCapabilities ();

Constraints getConstraints ();

Settings getSettings ();

void applyConstraints (Constraints constraints, VoidFunction successCallback, ConstraintErrorCallback errorCallback);

attribute EventHandler onoverconstrained;

};

11.1.1 Attributes

onoverconstrained of type EventHandler- This event handler, of type

overconstrained,

must be supported by all objects

implementing the Constrainable interface. The UA must raise a

MediaErrorEvent named "overconstrained" if changing

circumstances at runtime result in it no longer

being able to satisfy the currently valid mandatory

ConstraintSet. This MediaErrorEvent

must contain a MediaError whose

name is "overconstrainedError", and whose

constraintName attribute is set to one of the mandatory

constraints that can no longer be satisfied. The message

attribute of the MediaError SHOULD contain a string that is useful

for debugging. The conditions under which this error might occur are

platform and application-specific. For example, the user might

physically manipulate a camera in a way that makes it impossible to

provide a resolution that satisfies the constraints. The UA MAY take

other actions as a result of the overconstrained situation.
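A minimal sketch of an application reacting to this event is shown below. It assumes that the MediaError is reachable as event.error, which this section does not specify, and it uses a plain stand-in object in place of a real Constrainable so that it can run outside a browser. The helper name watchOverconstrained is hypothetical.

```javascript
// Installs a handler on any object implementing Constrainable.
// ASSUMPTION: the MediaError is exposed as event.error; this section does
// not name the attribute that carries it.
function watchOverconstrained(constrainable, report) {
  constrainable.onoverconstrained = function (event) {
    var error = event.error;
    report(error.constraintName, error.message);
  };
}

// Stand-in object so that the sketch runs outside a browser.
var fakeTrack = { onoverconstrained: null };
var reports = [];
watchOverconstrained(fakeTrack, function (constraintName, message) {
  reports.push(constraintName + ": " + message);
});

// Simulate the UA raising the event with hypothetical values.
fakeTrack.onoverconstrained({
  error: {
    name: "overconstrainedError",
    constraintName: "width",
    message: "camera can no longer deliver the requested width"
  }
});
console.log(reports[0]);
```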

11.1.2 Methods

applyConstraints-

The applyConstraints() algorithm for applying

constraints is stated below. Here are some preliminary definitions

that are used in the statement of the algorithm:

- We refer to each immediate attribute of a ConstraintSet

(as defined by

HasOwnProperty) as a 'constraint' since it is intended to constrain the

corresponding Capability of the Constrainable object to a value that is

within the range or list of values it specifies.

- We refer to the "effective Capability" C of an object O as the possibly

proper subset of the possible values of C (as returned by getCapabilities)

taking into consideration environmental limitations and/or restrictions

placed by other constraints. For example given a ConstraintSet that

constrains Capabilities aspectRatio, height and width, the values assigned

to any two of the Capabilities limit the effective Capability of the third.

The set of effective Capabilities may be platform dependent. For example,

on a resource-limited device it may not be possible to set Capabilities C1

and C2 both to 'high', while on another less limited device, this may be

possible.

- A set of values for the Capabilities of an object O satisfy ConstraintSet CS

if each value a) is in the range of the corresponding effective Capability

of O, and b) is in the range or list of values specified by the

corresponding constraint in CS, if there is one, and c) there is no

constraint in CS that does not correspond to a Capability of O. (Note that

although this definition ignores the difference between mandatory and

optional ConstraintSets, the algorithm below distinguishes between them.)

- A set of ConstraintSets CS1...CSn (n ≥ 1) can be satisfied by an object O

if it is possible to choose a sequence of values for the Capabilities of O

that satisfy CS1...CSn simultaneously.

- To apply a set of ConstraintSets CS1...CSn to object O is to choose such a

sequence of values that satisfy CS1...CSn and assign them as the settings

for the properties of O.

When applyConstraints is called, the UA must queue a task to run the following

steps:

- Let newConstraints be the argument to this

function. Each constraint must specify one or more values (or a range of

values) for its property. A property may appear more than once in the list of optional

ConstraintSets.

- Let object be the Constrainable object on which this

method was called. Let existingConstraints be the Constraints currently

in effect on object. Let copy be an unconstrained copy of

object (i.e., copy should behave as if it

were object with all ConstraintSets removed).

- If the mandatory ConstraintSet in newConstraints is

non-null and

cannot be satisfied by copy, call the

errorCallback, passing it a new

MediaError with name

ConstraintNotSatisfied and constraintName

set to any of the mandatory constraints that could not be

satisfied, and return. existingConstraints remain in

effect in this case.

- Let successfulConstraints be a list of ConstraintSets,

initially containing only the mandatory ConstraintSet.

Iterate over the optional ConstraintSets in

newConstraints in the order in which they were

specified. For each ConstraintSet, if it and

successfulConstraints together can be satisfied by

copy, append it to the rear of successfulConstraints.

Otherwise, ignore it.

- In a single operation, remove existingConstraints from

object, apply successfulConstraints, and call the

successCallback. From this point on until applyConstraints() is

called successfully again, getConstraints() must return the newConstraints that

were passed as an argument

to this call.

The UA may choose new settings

for the Capabilities of the object at any time. When it does so

it must attempt to satisfy

first the mandatory ConstraintSet and then the optional ConstraintSets

in the order in which they were specified, as described in the algorithm above.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraints | Constraints | ✘ | ✘ | A new constraint structure to apply to this object. |
| successCallback | VoidFunction | ✘ | ✘ | Called if the mandatory ConstraintSet can be satisfied. |
| errorCallback | ConstraintErrorCallback | ✘ | ✘ | Called if the mandatory ConstraintSet cannot be satisfied. |

Return type: void
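The core of the algorithm above can be sketched in a few lines. This is an illustrative model only, under several assumptions: each Capability is either a {min, max} range or an array of values, properties do not interact with each other, no task is queued, and the success callback receives the result as arguments (the real successCallback is a VoidFunction). The names narrow, addSet and applyConstraintsSketch are hypothetical.

```javascript
// Narrow one effective Capability by one constraint value.
// Returns the narrowed capability, or null if no value is left.
function narrow(capability, constraint) {
  if (Array.isArray(capability)) {
    var wanted = Array.isArray(constraint) ? constraint : [constraint];
    var kept = capability.filter(function (v) {
      return wanted.indexOf(v) !== -1;
    });
    return kept.length > 0 ? kept : null;
  }
  // A bare number is treated as min = max = that number.
  var range = typeof constraint === "number"
    ? { min: constraint, max: constraint }
    : constraint;
  var min = Math.max(capability.min, range.min === undefined ? -Infinity : range.min);
  var max = Math.min(capability.max, range.max === undefined ? Infinity : range.max);
  return min <= max ? { min: min, max: max } : null;
}

// Try to satisfy one more ConstraintSet on top of `effective`.
// Returns { effective: ... } on success or { failed: name } on failure.
function addSet(effective, set) {
  var next = {};
  Object.keys(effective).forEach(function (k) { next[k] = effective[k]; });
  var names = Object.keys(set);
  for (var i = 0; i < names.length; i++) {
    // A constraint without a matching Capability cannot be satisfied.
    var narrowed = next.hasOwnProperty(names[i])
      ? narrow(next[names[i]], set[names[i]])
      : null;
    if (narrowed === null) {
      return { failed: names[i] };
    }
    next[names[i]] = narrowed;
  }
  return { effective: next };
}

function applyConstraintsSketch(capabilities, newConstraints, onSuccess, onError) {
  var effective = capabilities; // plays the role of the unconstrained copy
  var successfulConstraints = [];
  if (newConstraints.mandatory) {
    var result = addSet(effective, newConstraints.mandatory);
    if (result.failed !== undefined) {
      onError({ name: "ConstraintNotSatisfied", constraintName: result.failed });
      return;
    }
    effective = result.effective;
    successfulConstraints.push(newConstraints.mandatory);
  }
  // Optional sets are tried in order; unsatisfiable ones are ignored.
  (newConstraints.optional || []).forEach(function (set) {
    var attempt = addSet(effective, set);
    if (attempt.failed === undefined) {
      effective = attempt.effective;
      successfulConstraints.push(set);
    }
  });
  onSuccess(effective, successfulConstraints);
}

// Back-off example: a hypothetical source limited to 450 frames per second.
var outcome = null;
applyConstraintsSketch(
  { frameRate: { min: 1, max: 450 } },
  { optional: [
    { frameRate: { min: 500 } },
    { frameRate: { min: 400 } },
    { frameRate: { min: 300 } }
  ] },
  function (effective, satisfied) {
    outcome = { effective: effective, satisfiedCount: satisfied.length };
  },
  function (error) {
    outcome = { error: error };
  }
);
console.log(JSON.stringify(outcome));
```

In this run the first optional ConstraintSet is ignored, the second narrows the frame rate to the range 400 to 450, and the third is then trivially satisfied.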

getCapabilities-

The getCapabilities() method returns the dictionary of

the capabilities that the object supports.

Note

It is possible that the underlying hardware may not exactly map

to the range defined in the registry entry. Where this is possible,

the entry should define how

to translate and scale the hardware's setting onto the values

defined in the entry. For example, suppose that a registry entry

defines a hypothetical fluxCapacitance capability that is defined

to be the range from -10 (min) to 10 (max), but there are common

hardware devices that support only values of "off", "medium" and

"full". The registry entry might specify that for such hardware,

the user agent should map the range value of -10 to "off", 10 to

"full", and 0 to "medium". It might also indicate that given a

ConstraintSet imposing a strict value of 3, the user agent should

attempt to set the value of "medium" on the hardware, and that

getSettings() should return a fluxCapacitance

of 0, since that is the value defined as corresponding to

"medium".

No parameters.
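The fluxCapacitance mapping in the note above could be sketched as follows. The nearest-value rule used here is an assumption for illustration; an actual registry entry would define its own translation. The names LEVELS and mapToHardware are hypothetical.

```javascript
// Registry values that the hypothetical hardware levels correspond to.
var LEVELS = [
  { hardware: "off", value: -10 },
  { hardware: "medium", value: 0 },
  { hardware: "full", value: 10 }
];

// Picks the hardware level whose registry value is nearest to the request.
// ASSUMPTION: "nearest value" is one possible rule, not the only one.
function mapToHardware(requested) {
  var best = LEVELS[0];
  LEVELS.forEach(function (level) {
    if (Math.abs(level.value - requested) < Math.abs(best.value - requested)) {
      best = level;
    }
  });
  return best;
}

// A strict constraint of 3 selects "medium", and the setting reported
// back would be the registry value 0 rather than 3.
var chosen = mapToHardware(3);
console.log(chosen.hardware, chosen.value); // medium 0
```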

getConstraints- The getConstraints method returns the Constraints

that were the argument to the

last successful call of

applyConstraints(), maintaining

the order in which they were specified.

Note that some of

the optional ConstraintSets returned may not be currently satisfied.

To check which ConstraintSets are currently in effect, the application

should use getSettings.

No parameters.
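One way an application might work out which optional ConstraintSets are currently satisfied is to compare the value returned by getConstraints() against the value returned by getSettings(). The sketch below does this for plain objects standing in for those return values; the helper names and the sample values are hypothetical.

```javascript
// True if a single setting value lies within a constraint value, which may
// be a bare value, a list of values, or a {min, max} range.
function valueSatisfies(setting, constraint) {
  if (Array.isArray(constraint)) {
    return constraint.indexOf(setting) !== -1;
  }
  if (constraint !== null && typeof constraint === "object") {
    return (constraint.min === undefined || setting >= constraint.min) &&
           (constraint.max === undefined || setting <= constraint.max);
  }
  return setting === constraint;
}

// Returns the optional ConstraintSets that the given settings satisfy.
function satisfiedOptionalSets(constraints, settings) {
  return (constraints.optional || []).filter(function (set) {
    return Object.keys(set).every(function (name) {
      return settings.hasOwnProperty(name) &&
             valueSatisfies(settings[name], set[name]);
    });
  });
}

// Stand-ins for the results of getConstraints() and getSettings().
var constraints = {
  optional: [{ width: 650 }, { width: { min: 650 } }, { frameRate: 60 }]
};
var settings = { width: 800, frameRate: 60 };
var inEffect = satisfiedOptionalSets(constraints, settings);
console.log(inEffect.length); // 2
```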

getSettings- The getSettings() method returns the current

settings of all the properties of the object, whether they are

platform defaults or have been set

by

applyConstraints(). The values returned for

the properties of the object MUST lie within the currently

satisfied set of constraints applied to this object. Note that

the actual setting of a property must be a single value.

No parameters.

11.1.3 applyConstraints Failure Callback

ConstraintErrorCallback

callback ConstraintErrorCallback = void (MediaError error);

error of type MediaError- A

MediaError holding the mandatory constraint

that could not be satisfied.

An example of Constraints that could be passed into

applyConstraints() or returned as a value of

constraints is below. It uses the properties defined

in the Track property registry.

Example 1

{

"mandatory": {

"width": {

"min": 640

},

"height": {

"min": 480

}

},

"optional": [{

"width": 650

}, {

"width": {

"min": 650

}

}, {

"frameRate": 60

}, {

"width": {

"max": 800

}

}, {

"facingMode": "user"

}]

}

Here is another example, specifically for a video track:

Example 2

{

"optional": [{

sourceId: "20983-20o198-109283-098-09812"

}, {

width: {

min: 800,

max: 1200

}

}, {

height: {

min: 600

}

}]

}

And here's one for an audio track:

Example 3

{

optional: [{

sourceId: "64815-wi3c89-1839dk-x82-392aa"

}, {

volume: 0.5

}]

}

11.2 The Property Registry

There is a single IANA registry that

defines the constrainable

properties of all objects that implement Constrainable. The

registry entries must contain the

name of each property along with its set of legal values. The registry entries

for MediaStreamTrack are defined below. The

syntax for the specification of the set of legal values depends on the

type of the values.

In addition to the standard atomic types (boolean, long, double,

DOMString), legal values include lists of any of the atomic types, plus

min-max ranges, as defined below.

List values must be interpreted

as disjunctions. For example, if a property 'facingMode' for a camera is

defined as having legal values ["left", "right", "user", "environment"],

this means that 'facingMode' can have the value "left", the value

"right", the value "environment" or the value "user". Similarly

Constraints restricting 'facingMode' to ["user", "left", "right"]

would mean that the UA should select a camera (or point the camera, if

that is possible) so that "facingMode" is either "user", "left", or

"right". This Constraint would thus request that the camera not be facing

away from the user, but would allow the UA to choose among the other

directions.

11.2.2 PropertyValueDoubleRange

dictionary PropertyValueDoubleRange {

double max;

double min;

};

max of type double- The maximum legal value of this property.

min of type double- The minimum legal value of this property.

11.2.3 PropertyValueLongRange

dictionary PropertyValueLongRange {

long max;

long min;

};

max of type long- The maximum legal value of this property.

min of type long- The minimum legal value of this property.

11.3 Capabilities

Capabilities is a dictionary containing one or more

key-value pairs, where each key must be a constrainable property defined in the

registry, and each value must be

a subset of the set of values defined for that property in the registry.

The exact syntax of the value expression depends on the type of the

property but is of type ConstraintValues.

An example of a Capabilities dictionary is shown below. This example

is not very realistic in that a browser would actually be required to

support more settings than just these.

Example 4

{

"frameRate": {

"min": 1.0,

"max": 60.0

},

"facingMode": ["user", "environment"]

}

11.4 Settings

A Setting is a dictionary containing one or more key-value

pairs. It must contain each key

returned in getCapabilities(). There must be a single value for each key and the value

must be a member of the set defined

for that property by getCapabilities(). The exact syntax of the

value expression depends on the type of the property: it will be a

DOMString for properties of type PropertyValueSet, a long for

properties of type PropertyValueLongRange, and a double for

properties of type PropertyValueDoubleRange. Thus the

Settings dictionary contains the actual values that the UA

has chosen for the object's Capabilities.
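The requirements in the paragraph above can be checked mechanically. The following sketch, using the values from the Capabilities and Settings examples in this section, reports any key that is missing from a Settings dictionary or whose value falls outside the corresponding Capability. It models each Capability as a {min, max} range or an array of values, and the helper name validateSettings is hypothetical.

```javascript
// Returns a list of problems; an empty list means the settings are
// consistent with the capabilities.
function validateSettings(capabilities, settings) {
  var problems = [];
  Object.keys(capabilities).forEach(function (name) {
    var capability = capabilities[name];
    if (!settings.hasOwnProperty(name)) {
      problems.push(name + ": missing");
      return;
    }
    var value = settings[name];
    var ok = Array.isArray(capability)
      ? capability.indexOf(value) !== -1
      : typeof value === "number" &&
        value >= capability.min && value <= capability.max;
    if (!ok) {
      problems.push(name + ": outside capability");
    }
  });
  return problems;
}

var capabilities = {
  frameRate: { min: 1.0, max: 60.0 },
  facingMode: ["user", "environment"]
};
var good = validateSettings(capabilities, { frameRate: 30.0, facingMode: "user" });
var bad = validateSettings(capabilities, { frameRate: 90.0 });
console.log(good.length, bad);
```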

An example of a Setting dictionary is shown below. This example is not

very realistic in that a browser would actually be required to support

more settings than just these.

Example 5

{

"frameRate": 30.0,

"facingMode": "user"

}

11.5 Constraints

dictionary Constraints {

ConstraintSet mandatory;

sequence<ConstraintSet> optional;

};

11.5.1 Dictionary Constraints Members

mandatory of type ConstraintSet-

The set of constraints that the UA must satisfy or else call the

errorCallback.

optional of type sequence<ConstraintSet>-

The list of ConstraintSets that the UA should try to satisfy but may ignore if they cannot be satisfied. The order of

these ConstraintSets is significant. In particular, when they are

passed as an argument to applyConstraints, the UA

must try to satisfy them in the

order that is specified. Thus if optional ConstraintSets C1 and C2

can be satisfied individually, but not together, then whichever of C1

and C2 is first in this list will be satisfied, and the other will

not. The UA must attempt to

satisfy all optional ConstraintSets in the list, even if some cannot

be satisfied. Thus, in the preceding example, if optional constraint

C3 is specified after C1 and C2, the UA will attempt to satisfy C3

even though C2 cannot be satisfied. Note that a given property name

may occur multiple times in these sets.

Each property of a ConstraintSet

corresponds to a Capability and specifies a subset of its legal values.

Applying a ConstraintSet instructs the UA to restrict the setting of the

corresponding Capabilities to the specified values or ranges of values. A

given property MAY occur both in the mandatory and the optional

ConstraintSets list, and MAY occur more than once in the optional

ConstraintSets list.

11.5.2 ConstraintSet

Note

Open Issue: Do we need to add support for a boolean type in

constraints? Is the ConstraintValue below an OK addition which will

allow constraints like { width: 66 } as a shorthand for min=max=66?

typedef (DOMString or long or double or boolean) ConstraintValue;

typedef (ConstraintValue or PropertyValueSet or PropertyValueLongRange or PropertyValueDoubleRange) ConstraintValues;

typedef object ConstraintSet;

Throughout this specification, the identifier ConstraintSet is used to refer to the object type.

In ECMAScript, ConstraintSet objects are represented using regular

native objects with optional properties whose names represent

constraint names. The conversion from an ECMAScript value, representing

a ConstraintSet, to an IDL ConstraintSet must not fail if a property name in the ECMAScript value

does not match any of the Capabilities of the Constrainable object.

In ECMAScript, all the properties of the ConstraintSet object are

optional; the developer may specify any of these properties when

creating the object. Note, however, that unknown property names will

result in a ConstraintSet that cannot be satisfied, as described in

applyConstraints() above.
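If the shorthand raised in the open issue above were adopted, a bare number such as { width: 66 } would stand for a range with min and max both equal to 66. A minimal sketch of that expansion is shown below; the helper names are hypothetical and the behaviour is an assumption based on the open issue, not a normative rule.

```javascript
// Expands a bare number into an explicit range; ranges and non-numeric
// values are returned unchanged.
function normalizeConstraintValue(value) {
  if (typeof value === "number") {
    return { min: value, max: value };
  }
  return value;
}

// Applies the expansion to every property of a ConstraintSet.
function normalizeConstraintSet(set) {
  var normalized = {};
  Object.keys(set).forEach(function (name) {
    normalized[name] = normalizeConstraintValue(set[name]);
  });
  return normalized;
}

var expanded = normalizeConstraintSet({ width: 66, facingMode: "user" });
console.log(JSON.stringify(expanded));
// {"width":{"min":66,"max":66},"facingMode":"user"}
```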

12. Examples

This sample code exposes a button. When clicked, the button is

disabled and the user is prompted to offer a stream. The user can cause

the button to be re-enabled by providing a stream (e.g., giving the page

access to the local camera) and then disabling the stream (e.g., revoking

that access).

Example 6

<input type="button" value="Start" onclick="start()" id="startBtn">

<script>

var startBtn = document.getElementById('startBtn');

function start() {

navigator.getUserMedia({

audio: true,

video: true

}, gotStream, logError);

startBtn.disabled = true;

}

function gotStream(stream) {

stream.oninactive = function () {

startBtn.disabled = false;

};

}

function logError(error) {

console.log(error.name + ": " + error.message);

}

</script>

This example allows people to take photos of themselves from the local

video camera. Note that the forthcoming Image Capture specification may

provide a simpler way to accomplish this.

Example 7

<article>

<style scoped>

video { transform: scaleX(-1); }

p { text-align: center; }

</style>

<h1>Snapshot Kiosk</h1>

<section id="splash">

<p id="errorMessage">Loading...</p>

</section>

<section id="app" hidden>

<p><video id="monitor" autoplay></video> <canvas id="photo"></canvas>

<p><input type=button value="📷" onclick="snapshot()">

</section>

<script>

navigator.getUserMedia({

video: true

}, gotStream, noStream);

var video = document.getElementById('monitor');

var canvas = document.getElementById('photo');

function gotStream(stream) {

video.srcObject = stream;

stream.oninactive = noStream;

video.onloadedmetadata = function () {

canvas.width = video.videoWidth;

canvas.height = video.videoHeight;

document.getElementById('splash').hidden = true;

document.getElementById('app').hidden = false;

};

}

function noStream() {

document.getElementById('errorMessage').textContent = 'No camera available.';

}

function snapshot() {

canvas.getContext('2d').drawImage(video, 0, 0);

}

</script>

</article>

13. Error Names

This specification defines the following new error names:

- PermissionDeniedError: User denied permission for scripts from this

origin to access the media device.

- ConstraintNotSatisfiedError: One of the mandatory constraints could

not be satisfied.

- OverconstrainedError: Due to changes in the environment, one or more

mandatory constraints can no longer be satisfied.
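An error callback might branch on these names as sketched below. The function name describeError and the message strings are hypothetical; only the three error names come from the list above.

```javascript
// Maps an error object to a human-readable description based on its name.
function describeError(error) {
  switch (error.name) {
    case "PermissionDeniedError":
      return "The user denied access to the media device.";
    case "ConstraintNotSatisfiedError":
      return "A mandatory constraint could not be satisfied" +
        (error.constraintName ? " (" + error.constraintName + ")." : ".");
    case "OverconstrainedError":
      return "A mandatory constraint can no longer be satisfied.";
    default:
      // Fall back to the generic name and message for anything else.
      return error.name + ": " + error.message;
  }
}

console.log(describeError({ name: "PermissionDeniedError", message: "" }));
console.log(describeError({
  name: "ConstraintNotSatisfiedError",
  constraintName: "width",
  message: ""
}));
```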

14. IANA Registrations

14.1 Track Property Registrations

IANA is requested to register the following properties as specified in

[RTCWEB-CONSTRAINTS]:

The following constraint names are defined to apply to both
video and audio MediaStreamTrack objects:

| Property Name | Values | Notes |
|---|---|---|
| sourceType | SourceTypeEnum | The type of the source of the MediaStreamTrack. Note that the setting of this property is uniquely determined by the source that is attached to the Track. In particular, getCapabilities() will return only a single value for sourceType. This property can therefore be used for initial media selection with getUserMedia(). However, it is not useful for subsequent media control with applyConstraints(), since any attempt to set a different value will result in an unsatisfiable ConstraintSet. |
| sourceId | DOMString | The application-unique identifier for this source. The same identifier MUST be valid between sessions of this application, but MUST also be different for other applications. Some sort of GUID is recommended for the identifier. Note that the setting of this property is uniquely determined by the source that is attached to the Track. In particular, getCapabilities() will return only a single value for sourceId. This property can therefore be used for initial media selection with getUserMedia(). However, it is not useful for subsequent media control with applyConstraints(), since any attempt to set a different value will result in an unsatisfiable ConstraintSet. |

The following properties are defined to apply only to video
MediaStreamTrack objects:

| Property Name | Values | Notes |
|---|---|---|
| width | PropertyValueLongRange | The width or width range, in pixels, of the video source. As a capability, the range should span the video source's pre-set width values with min being the smallest width and max being the largest width. |
| height | PropertyValueLongRange | The height or height range, in pixels, of the video source. As a capability, the range should span the video source's pre-set height values with min being the smallest height and max being the largest height. |
| frameRate | PropertyValueDoubleRange | The exact desired frame rate (frames per second) or frameRate range of the video source. If the source does not natively provide a frameRate, or the frameRate cannot be determined from the source stream, then this value MUST refer to the user agent's vsync display rate. |
| aspectRatio | PropertyValueDoubleRange | The exact aspect ratio (width in pixels divided by height in pixels), represented as a double rounded to the tenth decimal place. |
| facingMode | PropertyValueSet | The members of the enum describe the directions that the camera can face, as seen from the user's perspective. Valid values for the strings in the PropertyValueSet are the values of enum VideoFacingModeEnum. |

enum VideoFacingModeEnum {

"user",

"environment",

"left",

"right"

};

| Enumeration description | |
|---|---|
| user | The source is facing toward the user (a self-view camera). |
| environment | The source is facing away from the user (viewing the environment). |
| left | The source is facing to the left of the user. |
| right | The source is facing to the right of the user. |

Below is an illustration of the video facing modes in relation to the

user.
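The aspectRatio entry in the video property table above is defined as width divided by height, rounded to the tenth decimal place. One way to compute such a value is sketched below; the function name aspectRatio is chosen here for illustration and is not defined by this specification.

```javascript
// Width in pixels divided by height in pixels, rounded to ten decimals.
function aspectRatio(width, height) {
  return Math.round((width / height) * 1e10) / 1e10;
}

console.log(aspectRatio(640, 480).toFixed(10));  // 1.3333333333
console.log(aspectRatio(1280, 720).toFixed(10)); // 1.7777777778
```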

The following properties are defined to apply only to audio
MediaStreamTrack objects:

| Property Name | Values | Notes |
|---|---|---|
| volume | PropertyValueDoubleRange | The volume or volume range of the audio source, as a percentage. A volume of 0.0 is silence, while a volume of 1.0 is the maximum supported volume. Note that any ConstraintSet that specifies values outside of this range can never be satisfied. |
| sampleRate | PropertyValueLongRange | The sample rate in samples per second for the audio data. |
| sampleSize | PropertyValueLongRange | The linear sample size in bits. This constraint can only be satisfied for audio devices that produce linear samples. |
| echoCancelation | boolean | When one or more audio streams is being played in the processes of various microphones, it is often desirable to attempt to remove the sound being played from the input signals recorded by the microphones. This is referred to as echo cancelation. There are cases where it is not needed and it is desirable to turn it off so that no audio artifacts are introduced. This allows applications to control this behavior. |

Note

Open Issue: volume seems better expressed as a double, or even better as a

double in dB. What value should it be set to if one wants it half as loud?

15. Change Log

This section will be removed before publication.

Changes since February 18, 2014

- Bug 24928: Remove MediaStream state check from addTrack() algorithm.

- Bug 24930: Remove MediaStream state check from the removeTrack()

algorithm.

- Added native settings to tracks.

- Removed videoMediaStreamTrack and audioMediaStreamTrack

since they are no longer necessary.

Changes since December 25, 2013

- Make optional constraints a list of ConstraintSets. Make

ConstraintSet an object.

- Remove noaccess, move peerIdentity

- Add constraints for sampleRate, sampleSize, and echoCancelation.

- Aligned text in remainder of document with Constrainable changes.

- Removed statements that constraints are not applied to read-only sources

Changes since November 5, 2013

- ACTION-25: Switch mediastream.inactive to mediastream.active.

- ACTION-26: Rewrite stop to only detach the track's source.

- Bug 22338: Arbitrary changing of tracks.

- Bug 23125: Use double rather than float.

- Bug 22712: VideoFacingMode enum needs an illustration.

- Moved constraints into a separate Constrainable interface.

- Created a separate section on error handling.

Changes since October 17, 2013

- Bug 23263: Add output device enumeration to GetSources

- Introduced the Constrainable interface.

- Change consensus note on constraints in IANA section.

- Removed createObjectURL.

- Bug 22209: Should not use MUST requirements on values provided by the

developer.

Changes since August 24, 2013

- Bug 22269: Renamed getSourceInfos() to getSources() and made the

result async.

- Bug 22229: Editorial input

- Bug 22243: Clarify readonly track

- Bug 22259: Disabled mediastreamtrack and state of media element

- Bug 22226: Remove check of same source from MediaStream constructor

algorithm

- Replaced ended with inactive for MediaStream (resolves bug

21618).

- Bug 22264: MediaStream.ended set to true on creation

- Bug 22272: Permission revocation via MediaStreamTrack.stop()

- Bug 22248: Relationship between MediaStreamTrack and HTML5

VideoTrack/AudioTrack after MediaStream assignment

- Bug 22247: Setting loop attribute on a media element reading from a

MediaStream

Changes since July 4, 2013

- Bug 21967: Added paragraph on MediaStreamTrack enabled state and

updated cloning algorithm.

- Bug 22210: Make getUserMedia() algorithm use all numbered items.

- Bug 22250: Fixed accidentally overridden error.

- Bug 22211: Added async error when no valid media type is

requested.

- Bug 22216: Made NavigatorUserMediaError extend DOMError.

- Bug 22249: Throw on attempts to set currentTime on media elements

playing MediaStream objects.

- Bug 22246: Made media.buffered have length 0.

- Bug 22692: Updated media element to use HAVE_NOTHING state before

media arrives on the played MediaStream and HAVE_ENOUGH_DATA as soon as

media arrives.

May 29, 2013

- Bug 22252: fixed usage of MUST in MediaStream() constructor

description.

- Bug 22215: made MediaStream.ended readonly.

- Bug 21967: clarified MediaStreamTrack.enabled state initial

value.

- Added aspectRatio constraint, capability, and state.

- Updated usage of MediaStreams in media elements.

May 15, 2013

- Added explanatory section for constraints, capabilities, and

states.

- Added VideoFacingModeEnum (including left and right options).

- Added getSourceInfos() and SourceInfo dictionary.

- Added isolated streams.

April 29, 2013

- Removed remaining photo APIs and references (since we have a separate

Image Capture Spec).

March 20, 2013

- Added readonly and remote attributes to MediaStreamTrack

- Removed getConstraint(), setConstraint(), appendConstraint(), and

prependConstraint().

- Added source states. Added states() method on tracks. Moved

sourceType and sourceId to be states.

- Added source capabilities. Added capabilities() method on

tracks.

- Added clarifying text about MediaStreamTrack lifecycle and

mediaflow.

- Made MediaStreamTrack cloning explicit.

- Removed takePhoto() and friends from VideoStreamTrack (we have a

separate Image Capture Spec).

- Made getUserMedia() error callback mandatory.

December 12, 2012

- Changed error code to be string instead of number.

- Added core of settings proposal allowing for constraint changes after

stream/track creation.

November 15 2012

- Introduced new representation of tracks in a stream (removed

MediaStreamTrackList).

- Updated MediaStreamTrack.readyState to use an enum type (instead of

unsigned short constants).

- Renamed MediaStream.label to MediaStream.id (the definition needs

some more work).

October 1 2012

- Limited the track kind values to "audio" and "video" only (could

previously be user defined as well).

- Made MediaStream extend EventTarget.

- Simplified the MediaStream constructor.

June 23 2012

- Rename title to "Media Capture and Streams".

- Update document to comply with HTML5.

- Update image describing a MediaStream.

- Add known issues and various other editorial changes.

June 22 2012

- Update wording for constraints algorithm.

June 19 2012

- Added "Media Streams as Media Elements" section.

June 12 2012

- Switch to respec v3.

June 5 2012

- Added non-normative section "Implementation Suggestions".

- Removed stray whitespace.

June 1 2012

- Added media constraint algorithm.

Apr 23 2012

- Remove MediaStreamRecorder.

Apr 20 2012

- Add definitions of MediaStreams and related objects.

Dec 21 2011

- Changed to make wanted media opt in (rather than opt out). Minor

edits.

Nov 29 2011

- Changed examples to use MediaStreamOptions objects rather than

strings. Minor edits.

Nov 15 2011

- Removed MediaStream stuff. Refers to webrtc 1.0 spec for that part

instead.

Nov 9 2011

- Created first version by copying the webrtc spec and ripping out

stuff. Put it on github.

A. Acknowledgements

The editors wish to thank the Working Group chairs and Team Contact,

Harald Alvestrand, Stefan Håkansson and Dominique Hazaël-Massieux, for

their support. Substantial text in this specification was provided by many

people including

Jim Barnett,

Harald Alvestrand,

Travis Leithead,

and Stefan Håkansson.