Media Capture and Streams

W3C Editor's Draft 20 March 2013

- This version:

- http://dev.w3.org/2011/webrtc/editor/archives/20130320/getusermedia.html

- Latest published version:

- http://www.w3.org/TR/mediacapture-streams/

- Latest editor's draft:

- http://dev.w3.org/2011/webrtc/editor/getusermedia.html

- Previous editor's draft:

- http://dev.w3.org/2011/webrtc/editor/archives/20121212/getusermedia.html

- Editors:

- Daniel C. Burnett, Voxeo

- Adam Bergkvist, Ericsson

- Cullen Jennings, Cisco

- Anant Narayanan, Mozilla (until November 2012)

Initial Author of this Specification was Ian Hickson, Google Inc., with the following copyright statement:

© Copyright 2004-2011 Apple Computer, Inc., Mozilla Foundation, and Opera Software ASA. You are granted a license to use, reproduce and create derivative works of this document.

All subsequent changes since 26 July 2011 done by the W3C WebRTC Working Group and the Device APIs Working Group are under the following Copyright:

© 2011-2013 W3C® (MIT, ERCIM, Keio, Beihang), All Rights Reserved. Document use rules apply.

For the entire publication on the W3C site the liability and trademark rules apply.

Status of This Document

This section describes the status of this document at the time of its publication. Other

documents may supersede this document. A list of current W3C publications and the latest revision

of this technical report can be found in the W3C technical reports

index at http://www.w3.org/TR/.

This document is not complete. It is subject to major changes and, while

early experimentations are encouraged, it is therefore not intended for

implementation. The API is based on preliminary work done in the WHATWG.

The Media Capture Task Force expects this specification to evolve

significantly based on:

- Privacy issues that arise when exposing local capabilities and local

streams.

- Technical discussions within the task force.

- Experience gained through early experimentations.

- Feedback received from other groups and individuals.

This document was published by the Web Real-Time Communication Working Group and Device APIs Working Group as an Editor's Draft.

If you wish to make comments regarding this document, please send them to public-media-capture@w3.org.

All comments are welcome.

Publication as an Editor's Draft does not imply endorsement by the W3C Membership.

This is a draft document and may be updated, replaced or obsoleted by other documents at

any time. It is inappropriate to cite this document as other than work in progress.

This document was produced by a group operating under the

5 February 2004 W3C Patent Policy.

W3C maintains a public list of any patent disclosures (Web Real-Time Communication Working Group, Device APIs Working Group)

made in connection with the deliverables of the group; that page also includes instructions for

disclosing a patent. An individual who has actual knowledge of a patent which the individual believes contains

Essential Claim(s) must disclose the

information in accordance with section

6 of the W3C Patent Policy.

3. Terminology

The

EventHandler interface represents a callback used for event

handlers as defined in [HTML5].

The concepts

queue a task and

fires a simple event are defined in [HTML5].

The terms

event handlers and

event handler event types are defined in [HTML5].

Note

The following paragraphs need to be spread out

to make them easier to read. Also, descriptions for "source

states" and "capabilities" need to be added.

A source is the "thing" providing the source of a

media stream track. The source is the broadcaster of the media

itself. A source can be a physical webcam, microphone, local

video or audio file from the user's hard drive, network

resource, or static image. Some sources have an identifier

which must be unique to

the application (un-guessable by another application) and

persistent between application sessions (e.g., the identifier

for a given source device/application must stay the same, but

not be guessable by another application). Sources that must have

an identifier are camera and microphone sources; local file

sources are not required to have an identifier. Source

identifiers let the application save, identify the availability

of, and directly request specific sources. Other than the

identifier, other bits of source identity

are never directly available to the application

until the user agent connects a source to a track. Once a source

has been "released" to the application (either via a permissions

UI, pre-configured allow-list, or some other release mechanism)

the application will be able to discover additional source-specific

capabilities. When a source is connected to a track, it must

conform to the constraints present on that track (or set of

tracks). Sources will be released (un-attached) from a track

when the track is ended for any reason. On

the MediaStreamTrack object, sources are represented by the sourceType attribute. The

behavior of APIs associated with the source's capabilities and

state changes depending on the source type. [[Sources

have capabilities

and state. The capabilities and state are

"owned" by the source and are common to any [multiple] tracks

that happen to be using the same source (e.g., if two different

track objects bound to the same source ask for the same

capability or state information, they will get back the same

answer).]]

Constraints are an optional feature

for restricting the range of allowed variability on a

source. Without provided constraints, implementations are free

to select a source's state from the full range of its supported

capabilities, and to adjust that state at any time for any

reason. Constraints may be optional or mandatory. Optional

constraints are represented by an ordered list; mandatory

constraints are an unordered set. The order of the optional

constraints is from most important (at the head of the list) to

least important (at the tail of the list). Constraints are

stored on the track object, not the source. Each track can be

optionally initialized with constraints, or constraints can be

added afterward through the constraint APIs defined in this

spec. Applying track level constraints to a source is

conditional based on the type of source. For example, read-only

sources will ignore any specified constraints on the track. It

is possible for two tracks that share a unique source to apply

contradictory constraints. Under such contradictions, the

implementation will mute both tracks and notify them that they

are over-constrained. Events are available that allow the

application to know when constraints cannot be met by the user

agent. These typically occur when the application applies

constraints beyond the capability of a source, contradictory

constraints, or in some cases when a source cannot sustain

itself in over-constrained scenarios (overheating, etc.).

Constraints that are intended for video sources will be ignored

by audio sources and vice-versa. Similarly, constraints that are

not recognized will be preserved in the constraint structure,

but ignored by the application. This will allow future

constraints to be defined in a backward compatible manner. A

correspondingly-named constraint exists for each corresponding

source state name and capability name. In general, user agents

will have more flexibility to optimize the media streaming

experience the fewer constraints are applied.
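As an illustration of the structure described above, the following minimal sketch models a constraint structure with an unordered mandatory set and an ordered optional list, and checks a candidate source state against the mandatory set. The helper names (`valueSatisfies`, `satisfiesMandatory`), the sample state objects, and the treatment of a min/max range as inclusive are assumptions made for illustration; they are not defined by this specification.

```javascript
// Own-property check, so inherited names are never treated as state values.
const has = (obj, key) => Object.prototype.hasOwnProperty.call(obj, key);

// Hypothetical helper: does one state value satisfy one constraint value?
// A constraint value is either a primitive or a { min, max } range
// (treated as inclusive here, which is an assumption).
function valueSatisfies(stateValue, constraintValue) {
  if (constraintValue !== null && typeof constraintValue === "object") {
    const { min = -Infinity, max = Infinity } = constraintValue;
    return stateValue >= min && stateValue <= max;
  }
  return stateValue === constraintValue;
}

// Hypothetical helper: true when every recognized mandatory constraint holds.
// Names absent from the state are skipped, mirroring the rule that
// unrecognized constraints are preserved but ignored.
function satisfiesMandatory(state, constraints) {
  const mandatory = constraints.mandatory || {};
  return Object.keys(mandatory).every(
    (name) => !has(state, name) || valueSatisfies(state[name], mandatory[name])
  );
}

const constraints = {
  mandatory: { width: { min: 640 }, height: { min: 480 } },
  optional: [{ frameRate: 60 }, { facingMode: "user" }], // most important first
};

console.log(satisfiesMandatory({ width: 800, height: 600 }, constraints)); // true
console.log(satisfiesMandatory({ width: 320, height: 240 }, constraints)); // false
```

The optional list is carried along untouched in this sketch; how a user agent weighs optional constraints against each other is not modelled here.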

4. Stream API

4.1 Introduction

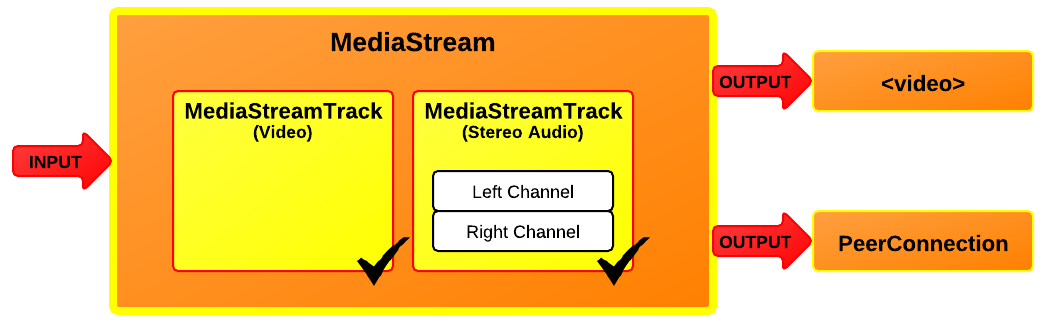

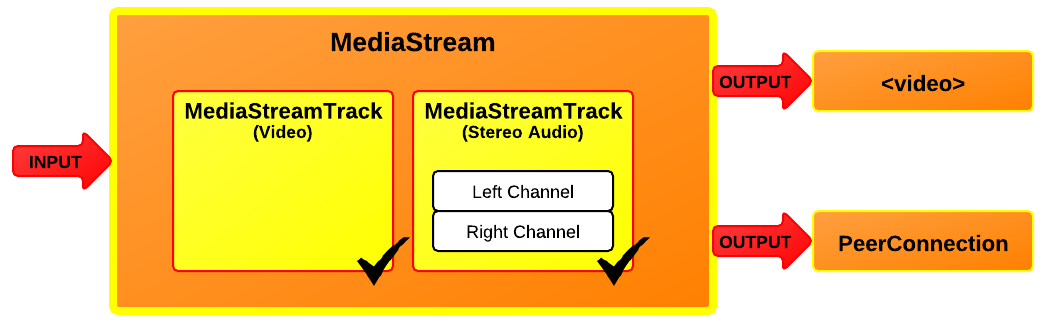

The MediaStreamMediaStream

Each MediaStream

Each track in a MediaStream object has a corresponding MediaStreamTrack object.

A MediaStreamTrack

A channel is the smallest unit considered in this API

specification.

A MediaStream object generated by a getUserMedia() call (which is

described later in this document), for instance, might take its input

from the user’s local camera. The output of the object controls how the

object is used, e.g., what is saved if the object is written to a file or

what is displayed if the object is used in a video

element.

Each track in a MediaStream

A MediaStream

The output of a MediaStream

A new MediaStream object can be created using the MediaStream()

constructor. The constructor argument can either be an existing

MediaStream object or a list of MediaStreamTrack objects.

The ability to duplicate a MediaStreamMediaStreamMediaStream

When a MediaStream

The MediaStream object is also used in contexts outside

getUserMedia, such as [WEBRTC10]. In both cases, ensuring

a realtime stream reduces the ease with which pages can distinguish live

video from pre-recorded video, which can help protect the user’s

privacy.

4.3 MediaStreamTrack

A MediaStreamTrackMediaStreamTrackgetUserMedia()

.

Note that a web application can revoke all given permissions

with MediaStreamTrack.stop().

A MediaStreamTrack

When a MediaStreamTrackstop() method being invoked on the

MediaStreamTrack

- If the track’s readyState attribute has the value ended already, then abort these steps.

- Set track’s readyState attribute to ended.

- Fire a simple event named ended at the object.

If the end of the stream was reached due to a user request, the event

source for this event is the user interaction event source.
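The three steps listed above can be sketched as follows. `SketchTrack` and `endTrack` are hypothetical names used only for illustration; `SketchTrack` is a stand-in built on the generic `EventTarget` interface and is not the MediaStreamTrack interface defined in this document.

```javascript
// Hypothetical stand-in for a track; not the real MediaStreamTrack interface.
class SketchTrack extends EventTarget {
  constructor() {
    super();
    this.readyState = "live";
  }
}

// Runs the three steps against a SketchTrack.
// Returns true if the track was ended by this call, false if it aborted.
function endTrack(track) {
  // Step 1: abort if the track has already ended.
  if (track.readyState === "ended") return false;
  // Step 2: set readyState to "ended".
  track.readyState = "ended";
  // Step 3: fire a simple event named "ended" at the object.
  track.dispatchEvent(new Event("ended"));
  return true;
}

const track = new SketchTrack();
let endedEvents = 0;
track.addEventListener("ended", () => { endedEvents += 1; });

endTrack(track);
endTrack(track); // the second call aborts at step 1
console.log(track.readyState, endedEvents); // ended 1
```

Because step 1 aborts early, the "ended" event is observed at most once per track in this model.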

Constraints are independent of sources. **** Add note about

potential effects of readonly and remote flags ****

Whether MediaTrackConstraints

Each track maintains an internal version of

the MediaTrackConstraintsMediaTrackConstraintsMediaTrackConstraintSet, MediaTrackConstraint,

and similarly-derived-type dictionary objects.

When track constraints change, a user agent must queue a task to evaluate those changes

when the task queue is next serviced. Similarly, if

the sourceType

changes, then the user agent should perform the same actions to

re-evaluate the constraints of each track affected by that

source change.

interface MediaStreamTrack {
    readonly attribute DOMString             kind;
    readonly attribute DOMString             id;
    readonly attribute DOMString             label;
             attribute boolean               enabled;
    readonly attribute MediaStreamTrackState readyState;
    readonly attribute SourceTypeEnum        sourceType;
    readonly attribute DOMString             sourceId;
             attribute EventHandler          onstarted;
             attribute EventHandler          onmute;
             attribute EventHandler          onunmute;
             attribute EventHandler          onended;
    any                    getConstraint (DOMString constraintName, optional boolean mandatory = false);
    void                   setConstraint (DOMString constraintName, any constraintValue, optional boolean mandatory = false);
    MediaTrackConstraints? constraints ();
    void                   applyConstraints (MediaTrackConstraints constraints);
    void                   prependConstraint (DOMString constraintName, any constraintValue);
    void                   appendConstraint (DOMString constraintName, any constraintValue);
             attribute EventHandler          onoverconstrained;
    void                   stop ();
};

4.3.1 Attributes

enabled of type boolean, -

The MediaStreamTrack.enabled

attribute, on getting, MUST return the last value to which it was

set. On setting, it MUST be set to the new value, and then, if the

MediaStreamTrack

Note

Thus, after a MediaStreamTrackenabled attribute still

changes value when set; it just doesn’t do anything with that new

value.

id of type DOMString, readonly -

Unless a MediaStreamTrackid attribute to that string.

An example of an algorithm that specifies how the track id must be

initialized is the algorithm to represent an incoming network

component with a MediaStreamTrack

MediaStreamTrack.id attribute MUST return the value

to which it was initialized when the object was created.

kind of type DOMString, readonly -

The MediaStreamTrack.kind

attribute MUST return the string "audio" if the object

represents an audio track or "video" if the object represents

a video track.

label of type DOMString, readonly -

User agents MAY label audio and video sources (e.g., "Internal

microphone" or "External USB Webcam"). The MediaStreamTrack.label

attribute MUST return the label of the object’s corresponding track,

if any. If the corresponding track has or had no label, the attribute

MUST instead return the empty string.

Note

Thus the kind and label attributes do not

change value, even if the MediaStreamTrack

onended of type EventHandler, - This event handler, of type ended, MUST be supported by all objects implementing the MediaStreamTrack interface.

onmute of type EventHandler, - This event handler, of type muted, MUST be supported by all objects implementing the MediaStreamTrack interface.

onoverconstrained of type EventHandler, - This event handler, of type overconstrained, MUST be supported by all objects implementing the MediaStreamTrack interface.

onstarted of type EventHandler, - This event handler, of type started, MUST be supported by all objects implementing the MediaStreamTrack interface.

onunmute of type EventHandler, - This event handler, of type unmuted, MUST be supported by all objects implementing the MediaStreamTrack interface.

readyState of type MediaStreamTrackState, readonly -

The readyState

attribute represents the state of the track. It MUST return the value

to which the user agent last set it.

When a MediaStreamTrackgetUserMedia(), its

readyState is either

live or muted, depending on the state of

the track's underlying media source. For example, a track in a

MediaStream obtained from getUserMedia() MUST

initially have its readyState attribute

set to live.

sourceId of type DOMString, readonly - The application-unique identifier for this source. The

same identifier must be valid between sessions of this

application, but must also be different for other

applications. Some sort of GUID is recommended for the

identifier.

sourceType of type SourceTypeEnum, readonly - Returns the type information associated with the currently

attached source (if any).

4.3.2 Methods

appendConstraint-

Appends (at the end of the list) the provided constraint

name and value. This method does not consider whether a

same-named constraint already exists in the optional

constraints list.

This method applies exclusively to optional constraints;

it does not modify mandatory constraints.

This method is a convenience API for programmatically

building constraint structures.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraintName | DOMString | ✘ | ✘ | The name of the constraint to append to the list of optional constraints. |
| constraintValue | any | ✘ | ✘ | Either a primitive value (float/DOMString/etc), or a MinMaxConstraint dictionary. |

applyConstraints-

This API will replace all existing constraints with the

provided constraints (if any constraints already

exist). Otherwise, it will apply the newly provided

constraints to the track.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraints | MediaTrackConstraints | ✘ | ✘ | A new constraint structure to apply to this track. |

constraints-

Returns the complete constraints object associated with the

track. If no mandatory constraints have been defined,

the

mandatory field will not be present (it will be

undefined). If no optional constraints have been defined,

the optional field will not be present (it will be

undefined). If neither optional, nor mandatory constraints have been

created, the value null is returned.

No parameters.
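The return rule for constraints() can be sketched as below. The function name `constraintsSnapshot` and the representation of the track's internal state as a plain object plus an array are assumptions for illustration only.

```javascript
// Hypothetical model of the value returned by constraints().
// `mandatory` is a plain object (unordered set); `optional` is an array
// (ordered list, most important first).
function constraintsSnapshot(mandatory, optional) {
  const hasMandatory = Object.keys(mandatory).length > 0;
  const hasOptional = optional.length > 0;

  // Neither kind of constraint has been created: null is returned.
  if (!hasMandatory && !hasOptional) return null;

  // Otherwise only the fields that actually hold constraints are present.
  const result = {};
  if (hasMandatory) result.mandatory = { ...mandatory };
  if (hasOptional) result.optional = optional.map((c) => ({ ...c }));
  return result;
}

console.log(constraintsSnapshot({}, [])); // null
const onlyOptional = constraintsSnapshot({}, [{ frameRate: 60 }]);
console.log("mandatory" in onlyOptional); // false
console.log(onlyOptional.optional.length); // 1
```

Returning copies rather than the internal objects is a design choice of this sketch, not something this section requires.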

getConstraint-

Retrieves a specific named constraint value from the

track. The named constraints are the same names used for

the capabilities API, and also are

the same names used for the source's state attributes.

Returns one of the following types:

- null

- If no constraint matching the provided constraintName

exists in the respective optional or mandatory set on this

track.

- sequence<MediaTrackConstraint>

- If the mandatory flag is false and there is at least one optional matching constraint name defined on this track.

Each MediaTrackConstraint result in the list will

contain a key which matches the

requested constraintName parameter, and whose

value will either be a primitive value, or

a MinMaxConstraint

object.

The returned list will be ordered from most

important-to-satisfy at index 0, to the

least-important-to-satisfy optional constraint.

Note

Example: Given a track with an

internal constraint structure:

    {
      mandatory: {
        width: { min: 640 },
        height: { min: 480 }
      },
      optional: [
        { width: 650 },
        { width: { min: 650 }},
        { frameRate: 60 },
        { width: { max: 800 }},
        { fillLightMode: "off" },
        { facingMode: "user" }
      ]
    }

and a request for getConstraint("width"), the following list would be returned:

    [
      { width: 650 },
      { width: { min: 650 }},
      { width: { max: 800 }}
    ]

- MinMaxConstraint

- If the mandatory flag is true, and the requested

constraint is defined in the

mandatory

MediaTrackConstraintSet associated

with this track, and the value of the constraint is a

min/max range object.

- primitive_value

- If the mandatory flag is true, and the requested

constraint is defined in the

mandatory

MediaTrackConstraintSet associated

with this track, and the value of the constraint is a

primitive value (DOMString, unsigned long, float,

etc.).

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraintName | DOMString | ✘ | ✘ | The name of the setting for which the current value of that setting should be returned |
| mandatory | boolean = false | ✘ | ✔ | true to indicate that the constraint should be looked up in the mandatory set of constraints, otherwise, the constraintName should be retrieved from the optional list of constraints. |
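The lookup rules for getConstraint can be sketched over a plain constraint structure as follows. This is a model for illustration, not the user agent's implementation: the free function `getConstraint(constraints, name, mandatory)` and the `has` helper are assumptions, and the sample data repeats the example structure given in the note above.

```javascript
// Own-property check, so names such as "toString" are not matched by accident.
const has = (obj, key) => Object.prototype.hasOwnProperty.call(obj, key);

// Hypothetical model of getConstraint() over a plain constraint structure.
function getConstraint(constraints, constraintName, mandatory = false) {
  if (mandatory) {
    // Mandatory lookup: a single value (primitive or min/max range), or null.
    const set = constraints.mandatory || {};
    return has(set, constraintName) ? set[constraintName] : null;
  }
  // Optional lookup: every same-named entry, in list order
  // (most important to satisfy first), or null when there is none.
  const matches = (constraints.optional || []).filter((c) => has(c, constraintName));
  return matches.length > 0 ? matches : null;
}

const example = {
  mandatory: { width: { min: 640 }, height: { min: 480 } },
  optional: [
    { width: 650 },
    { width: { min: 650 } },
    { frameRate: 60 },
    { width: { max: 800 } },
    { fillLightMode: "off" },
    { facingMode: "user" },
  ],
};

console.log(getConstraint(example, "width").length); // 3
console.log(getConstraint(example, "width", true));  // the mandatory range, min 640
console.log(getConstraint(example, "gain"));         // null
```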

prependConstraint-

Prepends (inserts before the start of the list) the

provided constraint name and value. This method does not

consider whether a same-named constraint already exists in

the optional constraints list.

This method applies exclusively to optional constraints;

it does not modify mandatory constraints.

This method is a convenience API for programmatically

building constraint structures.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraintName | DOMString | ✘ | ✘ | The name of the constraint to prepend to the list of optional constraints. |
| constraintValue | any | ✘ | ✘ | Either a primitive value (float/DOMString/etc), or a MinMaxConstraint dictionary. |
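The list behavior of appendConstraint and prependConstraint can be sketched with an ordinary array standing in for the optional constraints list. The free functions below are a hypothetical model that takes the list as an explicit first argument; they are not the track methods themselves.

```javascript
// Hypothetical model of the optional constraints list:
// index 0 is the most important entry, the last index the least important.
function appendConstraint(optional, constraintName, constraintValue) {
  optional.push({ [constraintName]: constraintValue }); // lowest priority
}

function prependConstraint(optional, constraintName, constraintValue) {
  optional.unshift({ [constraintName]: constraintValue }); // highest priority
}

const optional = [{ width: 650 }];
appendConstraint(optional, "frameRate", 60);
// A same-named entry already exists; neither method checks for that.
prependConstraint(optional, "width", { min: 800 });

console.log(optional.map((c) => Object.keys(c)[0]).join(", ")); // width, width, frameRate
```

Since duplicates are kept, the resulting list holds two "width" entries, and the prepended one takes precedence because it sits at index 0.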

setConstraint-

This method updates the value of a same-named existing

constraint (if found) in either the mandatory or optional

list, and otherwise sets the new constraint.

This method searches the list of optional constraints

from index 0 (highest priority) to the end of

the list (lowest priority) looking for matching

constraints. Therefore, for multiple same-named optional

constraints, this method will only update the value of the

highest-priority matching constraint.

If the mandatory flag is false

and the constraint is not found in the list of optional

constraints, then a new optional constraint is created and

appended to the end of the list (thus having lowest

priority).

Note

Note: This behavior allows applications to iteratively call setConstraint and have their constraints added in the order specified in the source.

| Parameter | Type | Nullable | Optional | Description |
|---|---|---|---|---|
| constraintName | DOMString | ✘ | ✘ | The name of the constraint to set. |
| constraintValue | any | ✘ | ✘ | Either a primitive value (float/DOMString/etc), or a MinMaxConstraint dictionary. |
| mandatory | boolean = false | ✘ | ✔ | A flag indicating whether this constraint should be applied to the optional or mandatory constraints. |
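The update-or-append behavior of setConstraint can be sketched as follows, again over a plain constraint structure. The free function and the `has` helper are assumptions for illustration; creating a mandatory entry when none exists is inferred from "otherwise sets the new constraint" and is marked as such in the code.

```javascript
// Own-property check, so names such as "toString" are not matched by accident.
const has = (obj, key) => Object.prototype.hasOwnProperty.call(obj, key);

// Hypothetical model of setConstraint() over a plain constraint structure.
function setConstraint(constraints, constraintName, constraintValue, mandatory = false) {
  if (mandatory) {
    constraints.mandatory = constraints.mandatory || {};
    // Update in place, or create (creation is an inferred reading).
    constraints.mandatory[constraintName] = constraintValue;
    return;
  }
  constraints.optional = constraints.optional || [];
  // Search from index 0 (highest priority); only the first match is updated.
  const existing = constraints.optional.find((c) => has(c, constraintName));
  if (existing) {
    existing[constraintName] = constraintValue;
  } else {
    // Not found: appended to the end of the list, thus lowest priority.
    constraints.optional.push({ [constraintName]: constraintValue });
  }
}

const constraints = { optional: [{ width: 650 }, { width: { min: 650 } }] };
setConstraint(constraints, "width", 700);                 // updates index 0 only
setConstraint(constraints, "frameRate", 30);              // not found: appended
setConstraint(constraints, "height", { min: 480 }, true); // mandatory set
console.log(JSON.stringify(constraints));
```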

stop-

When a MediaStreamTrack object's stop() method

is invoked, if no source is attached

(i.e., sourceType is "none"), then this

call returns immediately (i.e., it is a no-op). Otherwise, the

user agent MUST queue a task that runs the following steps:

- Let track be the current MediaStreamTrack object.

- End track. The track starts outputting only silence and/or blackness, as appropriate.

- Permanently stop the generation of data for track's source. If the data is being generated from a live source (e.g., a microphone or camera), then the user agent SHOULD remove any active "on-air" indicator for that source. If the data is being generated from a prerecorded source (e.g. a video file), any remaining content in the file is ignored.

The task source for the tasks

queued for the stop() method is the DOM

manipulation task source.

No parameters.
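The stop() steps above can be sketched with a small model. `SketchTrack`, its `sourceActive` flag, and the injectable `queueTask` parameter are hypothetical: `queueTask` stands in for the user agent's task queue so that the deferred nature of the steps is visible, and resetting `sourceType` to "none" follows the description of the "none" source type rather than an explicit step.

```javascript
// Hypothetical stand-in for a track; not the real MediaStreamTrack interface.
class SketchTrack {
  constructor(sourceType) {
    this.sourceType = sourceType;
    this.readyState = sourceType === "none" ? "new" : "live";
    this.sourceActive = sourceType !== "none"; // assumed flag: source produces data
  }

  // `queueTask` models "queue a task"; by default the task runs on a later turn.
  stop(queueTask = (task) => setTimeout(task, 0)) {
    // No source attached: the call returns immediately.
    if (this.sourceType === "none") return;
    queueTask(() => {
      const track = this;          // Let track be the current track.
      track.readyState = "ended";  // End track.
      track.sourceActive = false;  // Permanently stop data generation.
      track.sourceType = "none";   // Assumption: an ended track has no source.
    });
  }
}

const pending = [];
const track = new SketchTrack("camera");
track.stop((task) => pending.push(task));
console.log(track.readyState); // live (the task has not run yet)
pending.forEach((task) => task());
console.log(track.readyState); // ended
```

Collecting tasks in an array is only a convenience for observing the state before and after the queued steps run.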

enum MediaStreamTrackState {
    "new",
    "live",
    "muted",
    "ended"
};

| Value | Description |
|---|---|
| new | The track type is new and has not been initialized (connected to a source of any kind). This state implies that the track's label will be the empty string. |
| live | The track is active (the track’s underlying media source is making a best-effort attempt to provide data in real time). The output of a track in the live state can be switched on and off with the enabled attribute. |
| muted | The track is muted (the track’s underlying media source is temporarily unable to provide data). Also, the "muted" state can be entered when a track becomes over-constrained. |
| ended | The track has ended (the track's underlying media source is no longer providing data, and will never provide more data for this track). Once a track enters this state, it never exits it. |

4.3.3 Track Source Types

enum SourceTypeEnum {
    "none",
    "camera",
    "microphone",
    "photo-camera"
};

| Value | Description |
|---|---|
| none | This track has no source. This is the case when the track is in the "new" or "ended" readyState. |
| camera | A valid source type only for VideoStreamTrack. |
| microphone | A valid source type only for AudioStreamTrack. |
| photo-camera | A valid source type only for VideoStreamTrack. |

4.4 Video and Audio Tracks

The MediaStreamTrackMediaStreamTrack

Note that the camera's green light

doesn't come on

when a new track is created; nor does the user get prompted to

enable the camera/microphone. Those actions only happen after

the developer has requested that a media stream

containing "new" tracks be bound to a source

via getUserMedia(). Until that point tracks

are inert.

4.4.1 VideoStreamTrack interface

Video tracks may be instantiated with optional media track

constraints. These constraints can be later modified on the

track as needed by the application, or created after-the-fact

if the initial constraints are unknown to the application.

Note

Example: VideoStreamTrack

new VideoStreamTrack();

or

new VideoStreamTrack( { optional: [ { sourceId: "20983-20o198-109283-098-09812" }, { width: { min: 800, max: 1200 }}, { height: { min: 600 }}] });

[Constructor(optional MediaTrackConstraints videoConstraints)]
interface VideoStreamTrack : MediaStreamTrack {
    static sequence<DOMString> getSourceIds ();
    void                       takePhoto ();
             attribute EventHandler onphoto;
             attribute EventHandler onphotoerror;
};

4.4.1.1 Attributes

onphoto of type EventHandler, - Register/unregister for "photo" events. The handler should expect to get a BlobEvent object as its first parameter.

Note

The BlobEvent returns a photo (as a Blob) in a

compressed format (for example: PNG/JPEG) rather than a

raw ImageData object due to the expected large,

uncompressed size of the resulting photos.

onphotoerror of type EventHandler, - In the event of an error taking the photo, a

"photoerror" event will be dispatched instead of a "photo"

event. The "photoerror" is a simple event of type

Event.

4.4.1.2 Methods

getSourceIds, static-

Returns an array of application-unique source

identifiers. This list will be populated only with local

sources whose sourceType

is "camera" or "photo-camera"

[and if allowed by the user-agent, "readonly"

variants of the former two types]. The video source ids

returned in the list constitute those sources that the

user agent can identify at the time the API is called (the

list can grow/shrink over time as sources may be added or

removed). As a static

method, getSourceIds can be queried

without instantiating

any VideoStreamTrack objects or calling getUserMedia().

Issue 1

Issue: This information

deliberately adds to the fingerprinting surface of the

UA. However, this information will not be identifiable

outside the scope of this application and could also be

obtained via other round-about techniques

using getUserMedia().

No parameters.
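The filtering described for getSourceIds can be sketched as below. The `knownSources` list, its identifiers, and the function name `videoSourceIds` are hypothetical; a user agent would draw on whatever sources it can identify at call time, and the optional "readonly" variants are not modelled.

```javascript
// Hypothetical list of local sources known to a user agent at call time.
const knownSources = [
  { id: "a1", sourceType: "camera" },
  { id: "b2", sourceType: "microphone" },
  { id: "c3", sourceType: "photo-camera" },
];

// Hypothetical model of VideoStreamTrack.getSourceIds():
// only "camera" and "photo-camera" sources contribute identifiers.
function videoSourceIds(sources) {
  return sources
    .filter((s) => s.sourceType === "camera" || s.sourceType === "photo-camera")
    .map((s) => s.id);
}

console.log(videoSourceIds(knownSources).join(", ")); // a1, c3
```

Because sources may be added or removed over time, a caller in this model would invoke the function again rather than cache its result.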

takePhoto-

If the sourceType's value is

anything other than "photo-camera", this

method returns immediately and does nothing. If

the sourceType

is "photo-camera", then this method

temporarily (asynchronously) switches the source into

"high resolution photo mode", applies the

configured photoWidth, photoHeight, exposureMode,

and isoMode state to the stream, and

records/encodes an image (using a user-agent determined

format) into a Blob object. Finally, a task

is queued to fire a "photo" event with the resulting

recorded/encoded data. In case of a failure for any

reason, a "photoerror" event is queued instead and no

"photo" event is dispatched.

Issue 2

Issue: We could consider providing a hint or setting for the desired photo format? There could be some alignment opportunity with the Recording proposal...

No parameters.
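The control flow of takePhoto can be sketched with a stand-in track. Everything here is a model under stated assumptions: `SketchVideoTrack`, the `capture` callback (which returns encoded photo data or throws), the `data` property set on the event, and the injectable `queueTask` are hypothetical, and the switch into "high resolution photo mode" is not modelled.

```javascript
// Hypothetical stand-in for a video track; not the real VideoStreamTrack interface.
class SketchVideoTrack extends EventTarget {
  constructor(sourceType, capture) {
    super();
    this.sourceType = sourceType;
    this.capture = capture; // assumed callback: returns photo data or throws
  }

  // `queueTask` models "queue a task"; by default the task runs on a later turn.
  takePhoto(queueTask = (task) => setTimeout(task, 0)) {
    // Any source type other than "photo-camera": return and do nothing.
    if (this.sourceType !== "photo-camera") return;
    queueTask(() => {
      let data;
      try {
        data = this.capture();
      } catch (error) {
        // Failure for any reason: "photoerror" instead of "photo".
        this.dispatchEvent(new Event("photoerror"));
        return;
      }
      const event = new Event("photo");
      event.data = data; // stands in for the Blob carried by a BlobEvent
      this.dispatchEvent(event);
    });
  }
}

const seen = [];
const runNow = (task) => task(); // run queued tasks immediately for the demonstration

const camera = new SketchVideoTrack("photo-camera", () => "encoded-bytes");
camera.addEventListener("photo", (e) => seen.push("photo:" + e.data));
camera.takePhoto(runNow);

const broken = new SketchVideoTrack("photo-camera", () => { throw new Error("busy"); });
broken.addEventListener("photoerror", () => seen.push("photoerror"));
broken.takePhoto(runNow);

const webcam = new SketchVideoTrack("camera", () => "unused");
webcam.addEventListener("photo", () => seen.push("unexpected"));
webcam.takePhoto(runNow);

console.log(seen.join(", ")); // photo:encoded-bytes, photoerror
```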

4.4.3 AudioStreamTrack

Note

Example: AudioStreamTrack

new AudioStreamTrack();

or

new AudioStreamTrack( { optional: [ { sourceId: "64815-wi3c89-1839dk-x82-392aa" }, { gain: 0.5 }] });

[Constructor(optional MediaTrackConstraints audioConstraints)]
interface AudioStreamTrack : MediaStreamTrack {
    static sequence<DOMString> getSourceIds ();
};

4.4.3.1 Methods

getSourceIds, static- See definition of

getSourceIds on

the VideoStreamTrack interface. For AudioStreamTrack, the list is populated only with local sources whose sourceType

is "microphone" [and if allowed by the

user-agent, "readonly" microphone

variants].

No parameters.

8. Obtaining local multimedia content